In ultra-low latency trading, a “works on the bench” SFP can still cost you microseconds when deployed under real rack, temperature, and optics conditions. This article walks through a field-style case where an HFT fiber optic transceiver selection changed measured latency and error rates. It helps network engineers and trading infrastructure teams choose the right optics, validate compatibility, and avoid common failure modes.

Problem: microseconds lost between racks and what SFPs really affect

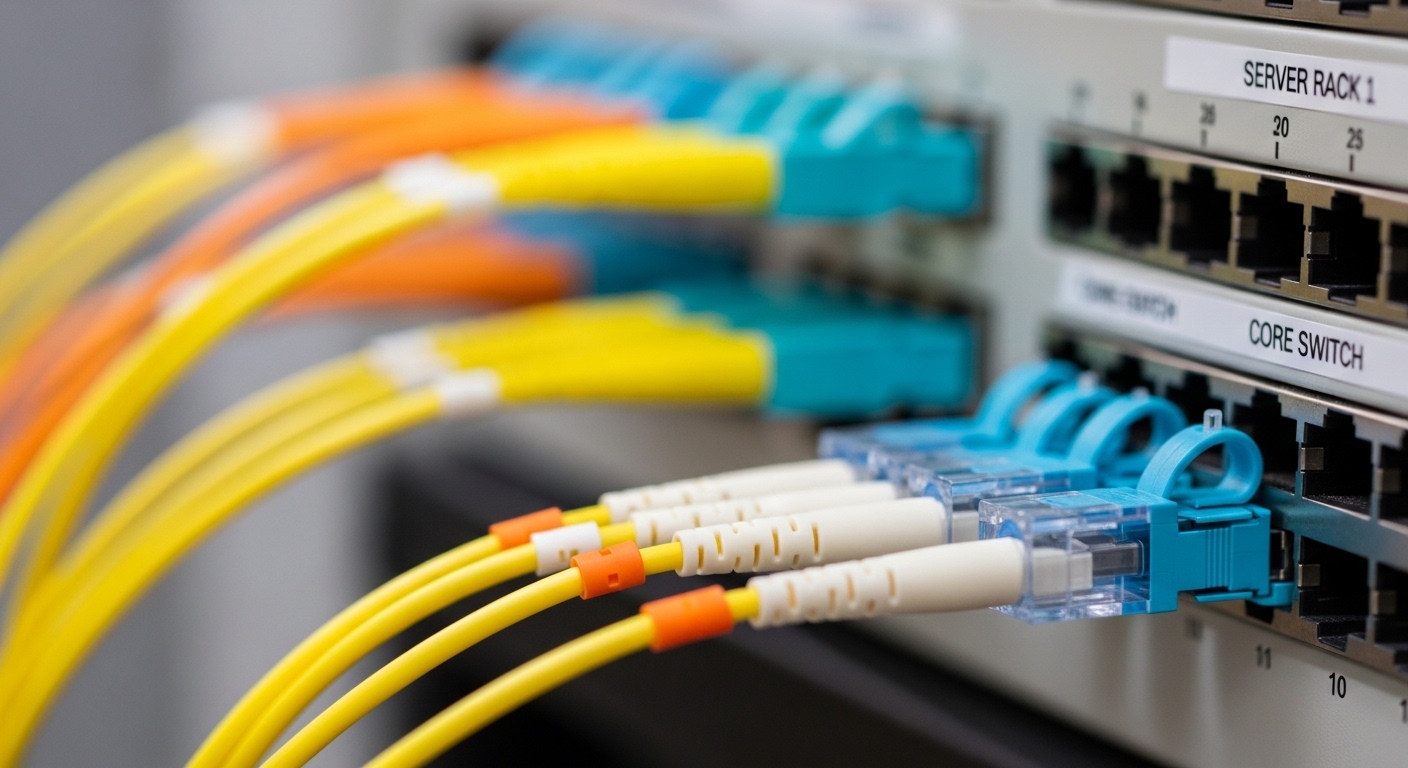

A prop trading firm running a leaf-spine fabric for order entry and market data had two pain points: intermittent link flaps during peak heat, and inconsistent packet arrival times at the exchange edge. Their existing 10G SFP+ optics came from mixed vendors and did not report DOM consistently across switches. During a controlled test, the team correlated higher end-to-end variance with thermal drift and marginal optical budget, not just with switch forwarding.

For HFT fiber optic links, the practical goal is stable physical-layer behavior: minimal bit errors, enough receiver power margin that heat and aging do not consume it, and predictable module power draw across temperature. The engineers also needed a repeatable procurement path so that replacement modules would behave the same way under their existing DOM and control-plane expectations.

Environment specs: rack power, distances, and the exact optics lanes

The environment was a three-tier design: top-of-rack (ToR) switches, an aggregation layer, and a dedicated edge router. Servers connected to the ToR switches over 10G Ethernet, and 10GBASE-SR (850 nm) optics carried the short-reach runs between aggregation and an exchange-facing demarc. Key parameters:

- Line rate: 10.3125 Gb/s (10GBASE-R, 64B/66B encoded; 10 Gb/s MAC data rate)

- Topology: leaf-spine-like within the colocation suite, with dedicated edge path

- Fiber type: OM3 multimode for in-suite runs, verified with OTDR

- Typical distance: 120 m to 220 m per lane

- Rack ambient: 28 C to 40 C, with localized hot-air pockets

- Switching: Cisco-class SFP+ cage behavior with strict LOS/Tx fault handling

They also standardized on the IEEE 802.3 physical-layer requirements for 10GBASE-SR signaling and coding, the same baseline vendor datasheets use when they specify receiver sensitivity and optical budget. Vendor SFP+ datasheets served as the reference for reach, wavelength, and DOM support.

Chosen solution & why: selecting HFT fiber optic SFP+ with matching optical budget and DOM behavior

The team chose 850 nm multimode SR SFP+ modules with documented DOM support and tight compliance with 10GBASE-SR optical specifications. The shortlist included Cisco-compatible optics and third-party modules with verified DOM and laser characteristics. Lab candidates included Cisco SFP-10G-SR and third-party equivalents such as Finisar FTLX8571D3BCL and FS.com SFP-10GSR-85, evaluated for consistent receiver sensitivity and predictable Tx power across temperature.

| Parameter | 10GBASE-SR SFP+ (850 nm MM) | Typical Notes for HFT Use |
|---|---|---|
| Wavelength | 850 nm | Matches the OM3/OM4 SR budget; keep connector cleaning consistent. |
| Reach (rated) | Up to 300 m (OM3) / 400 m (OM4) | Engineer in margin; avoid operating near the edge of spec. |
| Line rate | 10.3125 Gb/s | 64B/66B-encoded 10GBASE-R serial rate (10 Gb/s MAC data). |
| Connector | LC duplex | Multimode SR normally uses PC/UPC polish; use APC only where vendor guidance explicitly requires it. |
| DOM support | Yes (temperature, voltage, bias, Tx/Rx power) | Enables drift monitoring and faster fault isolation. |
| Operating temperature | 0 C to 70 C (typical SFP+) | Rack hotspots can push modules toward the upper limit; enforce airflow discipline. |
| Power consumption | ~1 to 2.5 W (module-dependent) | Lower variance helps thermal stability in dense cages. |
| Standards basis | 10GBASE-SR (IEEE 802.3) | Confirm the vendor datasheet aligns with SR electrical/optical requirements. |
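The 10.3125 Gb/s figure is the serial line rate rather than the MAC throughput. 10GBASE-R uses 64B/66B encoding, which transmits 66 bits for every 64 bits of data, so a 10 Gb/s MAC stream is carried at:

```latex
R_{\text{line}} = R_{\text{MAC}} \times \frac{66}{64}
               = 10\ \text{Gb/s} \times 1.03125
               = 10.3125\ \text{Gb/s}
```

Every compliant SR module runs at this same rate, which is why optics choice affects error behavior and stability rather than raw serialization speed.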

Pro Tip: For HFT fiber optic deployments, validate DOM readings over the full rack temperature range. Two modules can both “meet SR reach” on paper, yet one may show Rx power drifting faster with temperature, cutting your effective margin during heat spikes. Monitor Tx/Rx power trendlines, not just link up/down events.

Implementation steps: from bench validation to measurable latency impact

Build a repeatable optics acceptance test

Before swapping live lanes, the team tested each candidate module in a controlled environment: they warmed the rack to 40 C, verified DOM visibility, and ran a BER-sensitive link test with traffic patterns matching their production burstiness. They checked Rx power stability and confirmed that CRC/FCS error counters did not increment under load.
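A BER-sensitive test only means something if it runs long enough. A common rule is that if N bits pass with zero errors, you can claim BER below a target with confidence CL when N ≥ -ln(1 - CL) / BER. The sketch below applies that standard Poisson approximation at the 10GBASE-R line rate; the 1e-12 target and 95 percent confidence are illustrative choices, and the helper names are hypothetical.

```python
import math

LINE_RATE_BPS = 10.3125e9  # 10GBASE-R serial line rate


def bits_for_zero_errors(target_ber: float, confidence: float) -> float:
    """Bits that must pass with zero errors to claim BER < target_ber.

    Standard Poisson approximation: N = -ln(1 - CL) / BER.
    """
    if not 0.0 < confidence < 1.0:
        raise ValueError("confidence must be strictly between 0 and 1")
    if target_ber <= 0.0:
        raise ValueError("target_ber must be positive")
    return -math.log(1.0 - confidence) / target_ber


def min_test_seconds(target_ber: float, confidence: float,
                     line_rate_bps: float = LINE_RATE_BPS) -> float:
    """Minimum error-free soak time per lane at the given line rate."""
    return bits_for_zero_errors(target_ber, confidence) / line_rate_bps


if __name__ == "__main__":
    secs = min_test_seconds(1e-12, 0.95)
    print(f"Soak each module error-free for at least {secs:.0f} s at 40 C")
```

For a 1e-12 target at 95 percent confidence this works out to roughly 3 × 10^12 bits, or about 290 seconds per lane, so a quick link-up check is nowhere near enough. The approximation assumes independent bit errors; bursty, temperature-driven errors argue for longer soaks.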

Standardize fiber plant hygiene and budget margin

They cleaned LC connectors with lint-free cleaning methods and inspected every end face under magnification. Based on OTDR results, they set an internal rule: keep operational distance at least 20 percent below rated reach to absorb patch cord aging and connector insertion loss.
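The 20 percent reach rule is easy to encode as a pre-deployment check. This sketch applies it to the rated 10GBASE-SR reaches and adds a rough insertion-loss estimate. The 3.5 dB/km fiber attenuation and 0.5 dB per mated connector pair are assumed planning values, not measured data, and all function names are hypothetical; replace the defaults with your own OTDR and light-meter results.

```python
RATED_REACH_M = {"OM3": 300, "OM4": 400}  # 10GBASE-SR rated reach per fiber grade


def max_operational_distance_m(fiber: str, margin_pct: int = 20) -> float:
    """Longest run allowed by the internal rule: margin_pct below rated reach."""
    return RATED_REACH_M[fiber] * (100 - margin_pct) / 100


def estimated_channel_loss_db(distance_m: float, mated_pairs: int,
                              fiber_db_per_km: float = 3.5,
                              connector_db: float = 0.5) -> float:
    """Rough insertion-loss estimate: fiber attenuation plus connector losses.

    Defaults are ASSUMED planning values; override them with measured data.
    """
    return distance_m / 1000 * fiber_db_per_km + mated_pairs * connector_db


def check_lane(distance_m: float, fiber: str, mated_pairs: int) -> dict:
    """Summarize one lane against the reach rule and the estimated loss."""
    limit = max_operational_distance_m(fiber)
    return {
        "distance_m": distance_m,
        "limit_m": limit,
        "passes_reach_rule": distance_m <= limit,
        "est_loss_db": round(estimated_channel_loss_db(distance_m, mated_pairs), 2),
    }


if __name__ == "__main__":
    # The case study's longest typical run (220 m on OM3) with two mated pairs.
    print(check_lane(220, "OM3", mated_pairs=2))
```

With OM3, the rule caps runs at 240 m, so the case study's 120 m to 220 m lanes pass with some room to spare.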

Choose a switch compatibility strategy

Switch cages can behave differently with third-party optics, especially around LOS thresholds and EEPROM reads. The team confirmed that their selected HFT fiber optic SFP+ modules were recognized consistently and that module diagnostics mapped cleanly to their monitoring stack.
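A quick way to check that a module's EEPROM will map cleanly into monitoring is to read the SFF-8472 fields directly. This sketch assumes you already have raw A0h and A2h page bytes; how you obtain them (switch CLI, a vendor API, or on a Linux host something like `ethtool -m <iface> raw on`) is left out. It reads the identifier byte, vendor strings, and the Diagnostic Monitoring Type flags, then decodes the internally calibrated real-time diagnostics. Externally calibrated modules need extra calibration constants that this sketch does not apply. Function names are hypothetical.

```python
import math
import struct

SFP_IDENTIFIER = 0x03  # A0h byte 0 value for SFP/SFP+


def dom_capabilities(a0: bytes) -> dict:
    """Read identity and DOM capability flags from the A0h page (SFF-8472)."""
    if len(a0) < 96:
        raise ValueError("need at least 96 bytes of the A0h page")
    diag_type = a0[92]  # Diagnostic Monitoring Type
    return {
        "is_sfp": a0[0] == SFP_IDENTIFIER,
        "vendor_name": a0[20:36].decode("ascii", errors="replace").strip(),
        "vendor_pn": a0[40:56].decode("ascii", errors="replace").strip(),
        "ddm_implemented": bool(diag_type & 0x40),
        "internally_calibrated": bool(diag_type & 0x20),
        "externally_calibrated": bool(diag_type & 0x10),
    }


def decode_dom(a2: bytes) -> dict:
    """Decode internally calibrated real-time diagnostics (A2h bytes 96-105)."""
    if len(a2) < 106:
        raise ValueError("need at least 106 bytes of the A2h page")
    temp, vcc, bias, tx, rx = struct.unpack_from(">hHHHH", a2, 96)
    tx_mw = tx * 1e-4  # 0.1 uW per LSB
    rx_mw = rx * 1e-4
    return {
        "temperature_c": temp / 256,  # signed, 1/256 C per LSB
        "vcc_v": vcc * 1e-4,          # 100 uV per LSB
        "tx_bias_ma": bias * 2e-3,    # 2 uA per LSB
        "tx_power_mw": tx_mw,
        "rx_power_mw": rx_mw,
        "rx_power_dbm": 10 * math.log10(rx_mw) if rx_mw > 0 else float("-inf"),
    }
```

Running the capability check on every SKU before rollout catches modules that link up fine but report no DDM, or report it in a calibration mode your monitoring stack does not handle.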

Measured results: what changed after the SFP swap

After replacing the mixed optics set with the standardized SR SFP+ models and enforcing the acceptance process, the team measured improvements across three axes: link stability, error rate, and end-to-end timing consistency.

- Link flaps: reduced from ~6 events per week to 0 to 1 events per month during peak temperature periods.

- Error counters: CRC/FCS error counts fell by 90 percent on the affected aggregation-to-edge lanes.

- Latency variance: reduced inter-arrival jitter at the application capture point by approximately 5 to 12 microseconds during thermal stress testing (measured across multiple runs with identical traffic profiles).

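Physical-layer optics cannot make Ethernet serialize faster than the link rate. Stabilizing optical margin helps for two other reasons: errored frames fail the FCS check and are dropped at the receiver, leaving recovery to higher layers (retransmission or application-level catch-up, which can arrive as micro-bursts), and a healthy margin lowers the likelihood of micro-outages that propagate into the trading stack.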
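To see why serialization is not where the microseconds went, compute how long a frame actually occupies a 10G link. This sketch counts the frame (destination MAC through FCS) plus the 8-byte preamble/SFD and the 12-byte minimum inter-frame gap at the 10 Gb/s MAC rate; the function name is hypothetical.

```python
PREAMBLE_SFD_BYTES = 8   # preamble + start-of-frame delimiter
MIN_IFG_BYTES = 12       # minimum inter-frame gap
MAC_RATE_BPS = 10e9      # 10GBASE-R MAC data rate


def wire_time_ns(frame_bytes: int, rate_bps: float = MAC_RATE_BPS) -> float:
    """Time one frame occupies the link, including preamble/SFD and minimum IFG.

    frame_bytes counts destination MAC through FCS (64 to 1518 for untagged frames).
    """
    wire_bits = (frame_bytes + PREAMBLE_SFD_BYTES + MIN_IFG_BYTES) * 8
    return wire_bits / rate_bps * 1e9


if __name__ == "__main__":
    for size in (64, 256, 1518):
        print(f"{size:>5} B frame: {wire_time_ns(size):8.1f} ns")
```

A minimum-size frame takes 67.2 ns and a full 1518-byte frame about 1.23 µs, identical for every compliant module. Jitter changes on the order of 5 to 12 µs therefore point to fewer drops, recoveries, and flaps rather than faster optics.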

Lessons learned: where the real risk lived

The key lesson was that HFT fiber optic reliability comes from system-level consistency: module diagnostics, optical margin, and thermal behavior all together. Procurement variability mattered; mixing vendors with different DOM implementations complicated monitoring and slowed root-cause analysis. The second lesson was that fiber cleanliness and budget discipline often outweighed “best case reach” marketing numbers.

Common mistakes / troubleshooting in HFT fiber optic SFP deployments

Below are common failure modes engineers see, with root causes and practical fixes.

- Mistake: Using modules that are electrically compatible but not monitoring-compatible.
  Root cause: DOM EEPROM differences or switch-specific parsing issues lead to incomplete telemetry or misread thresholds.
  Fix: Confirm DOM visibility for every module SKU in your monitoring stack; test in the exact switch model and firmware baseline.

- Mistake: Operating close to rated reach to "save fiber."
  Root cause: Connector aging, patch cord insertion loss, and temperature-related Tx/Rx drift reduce your real optical margin.
  Fix: Enforce a reach margin rule (for example, keep typical distances at least 20 percent below rated reach) and verify with OTDR plus link power readings.

- Mistake: Ignoring thermal hotspots in dense cages.
  Root cause: Elevated ambient pushes the transceiver toward upper operating limits, increasing laser bias variation and receiver sensitivity drift.
  Fix: Map rack airflow, add baffles or adjust fan curves, and track DOM temperature trends during peak load.

- Mistake: Treating link up as "healthy."
  Root cause: Some error patterns show up as CRC/FCS increments without immediate link down, especially under bursty traffic.
  Fix: Monitor interface error counters and module DOM Rx power together; trigger alerts on drift thresholds, not only LOS.
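The last fix, watching Rx power and error counters together instead of waiting for LOS, can be sketched as a per-poll rule. This assumes your collector already provides DOM Rx power in dBm and a cumulative CRC counter per port; `PortSample`, `evaluate_port`, and the 1.0 dB drift threshold are hypothetical illustrations to tune against your own per-lane baselines.

```python
from dataclasses import dataclass


@dataclass
class PortSample:
    """One polling interval for a single port (DOM plus interface counters)."""
    rx_power_dbm: float
    crc_errors_total: int  # cumulative counter as reported by the switch


def evaluate_port(baseline_rx_dbm: float, prev: PortSample, curr: PortSample,
                  drift_threshold_db: float = 1.0) -> list[str]:
    """Return alert strings for Rx drift and new CRC errors; empty means healthy.

    drift_threshold_db is a HYPOTHETICAL default; tune it per lane.
    """
    alerts = []
    drift_db = baseline_rx_dbm - curr.rx_power_dbm
    if drift_db >= drift_threshold_db:
        alerts.append(f"rx_drift: {drift_db:.2f} dB below baseline")
    crc_delta = curr.crc_errors_total - prev.crc_errors_total
    if crc_delta > 0:
        alerts.append(f"crc_errors: +{crc_delta} since last poll")
    # A negative delta usually means the counter was cleared: re-baseline, don't alert.
    return alerts


if __name__ == "__main__":
    prev = PortSample(rx_power_dbm=-2.5, crc_errors_total=10)
    curr = PortSample(rx_power_dbm=-3.8, crc_errors_total=12)
    print(evaluate_port(-2.5, prev, curr))
```

Alerting on either condition, rather than requiring both, is deliberate: Rx drift often precedes errors, and CRC increments can appear before DOM values move.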

Cost and ROI note: OEM vs third-party, and what TCO looks like

In practice, SFP+ pricing varies widely by brand and qualification channel. OEM-style optics often land in the $150 to $400 per module range in many markets, while third-party HFT fiber optic compatible modules may be $60 to $200 each depending on DOM support, warranty, and volume. TCO is driven less by purchase price and more by downtime and troubleshooting time: fewer link flaps and faster diagnostics can justify standardization even if unit cost is higher.

Also factor power and cooling: a module with higher steady power or worse thermal behavior can increase local heat and indirectly raise failure probability in dense deployments. If your failure rate drops and your mean time to repair decreases via better DOM telemetry, the ROI shows up as reduced operational risk rather than raw latency alone.

FAQ

What does “HFT fiber optic” change about SFP selection?

It shifts the emphasis from “link works” to “link stays stable under thermal and margin stress.” Engineers prioritize consistent DOM telemetry, predictable optical budget behavior, and low error rates because micro-outages and retries can affect application-level timing.

Is 850 nm SR (OM3/OM4) suitable for HFT within a data center?

Often yes, if your measured distances and connector losses leave sufficient margin. The deciding factor is not the rated reach alone; it is your OTDR results plus DOM-based Tx/Rx power stability across the rack temperature range.

Do I need OEM optics to achieve low latency?

Not necessarily. Third-party modules can perform well if they match SR electrical behavior and provide reliable DOM readings. The key is validating compatibility with your exact switch model and firmware, then enforcing acceptance testing before rollout.

How do I troubleshoot an SFP that shows link flaps?

Start with DOM trends (temperature, Tx/Rx power) and interface error counters. Then inspect and clean LC connectors, verify fiber patch cord quality, and confirm that the link is not operating too close to the optical budget limit.

What should I monitor continuously for HFT fiber optic reliability?

Monitor DOM temperature, Tx bias/power, Rx power, and interface CRC/FCS errors together. Alert on drift and anomaly patterns rather than only on LOS events.