In latency-sensitive trading networks, the wrong financial network transceiver can turn microseconds into missed fills. This article helps network engineers and CTOs evaluate low-latency optics for exchange connectivity, co-location fabrics, and high-performance leaf-spine designs. You will get practical selection criteria, deployment realities from field installs, and troubleshooting patterns grounded in vendor behavior and IEEE Ethernet optics expectations.

Why trading networks obsess over transceiver latency and jitter

Low-latency optics are not just about “faster” optics; they are about predictable serialization delay, deterministic power-up behavior, and stable receive signal quality under temperature swings. In Ethernet links, the dominant predictable component is usually serialization delay, which is inversely proportional to line rate, while jitter comes from clock recovery and the transceiver’s analog front-end. In practice, engineers measure end-to-end variation with hardware timestamping and correlate it to optics events like power cycling, DOM alarms, and temperature drift.

Most modern transceivers support Digital Optical Monitoring (DOM) via I2C, exposing diagnostics like laser bias current, received optical power, and temperature. That matters because trading networks often implement proactive link health checks that trigger reroutes or maintenance windows long before a hard outage. If you run a trading fabric where a single ToR pair carries a high percentage of market data, you treat DOM thresholds as operational SLO inputs, not as afterthoughts.
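
As a minimal sketch of what those diagnostics look like in raw form, the snippet below decodes the real-time fields of an SFP/SFP+ diagnostics page. It assumes the SFF-8472 layout (page A2h, bytes 96 to 105) and internally calibrated values; the function name `decode_dom` and the sample bytes are hypothetical, and how you fetch the raw page depends on your platform.

```python
import math
import struct

def decode_dom(page_a2: bytes) -> dict:
    """Decode real-time diagnostics from an SFF-8472 A2h page (internal calibration assumed)."""
    if len(page_a2) < 106:
        raise ValueError("need at least 106 bytes of the A2h page")
    # Bytes 96-105, big-endian 16-bit: temperature (signed), Vcc, TX bias, TX power, RX power.
    temp_raw, vcc_raw, bias_raw, tx_raw, rx_raw = struct.unpack_from(">hHHHH", page_a2, 96)

    def to_dbm(tenths_of_uw: int) -> float:
        # Power fields are in units of 0.1 uW; zero means no measurable light.
        if tenths_of_uw == 0:
            return float("-inf")
        return 10 * math.log10(tenths_of_uw * 0.1 / 1000.0)  # uW -> mW -> dBm

    return {
        "temperature_c": temp_raw / 256.0,   # 1/256 degree C per LSB
        "vcc_v": vcc_raw * 100e-6,           # 100 uV per LSB
        "tx_bias_ma": bias_raw * 2e-3,       # 2 uA per LSB
        "tx_power_dbm": to_dbm(tx_raw),
        "rx_power_dbm": to_dbm(rx_raw),
    }

# Hypothetical sample page: 35 C, 3.3 V, 6 mA bias, 0.5 mW TX, 0.4 mW RX.
page = bytearray(256)
struct.pack_into(">hHHHH", page, 96, 35 * 256, 33000, 3000, 5000, 4000)
reading = decode_dom(bytes(page))
print(reading)
```

QSFP-family modules use a different memory map, so treat this as an SFP-only illustration.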

Latency components you can actually control

- Serialization delay: fixed by data rate; higher line rates reduce per-bit time.

- Optical-to-electrical pipeline: varies by module design; usually small but can differ between vendors.

- Clock and data recovery (CDR) behavior: can influence wander and short-term jitter under marginal optical power.

- Power-up and reset time: matters for fast recovery during maintenance.
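
To make the first item in the list above concrete, here is a small sketch of the serialization arithmetic. It uses nominal MAC data rates and ignores preamble, inter-frame gap, line coding, and any FEC, so the numbers are a lower bound rather than a measured latency; the function name is hypothetical.

```python
def serialization_delay_ns(frame_bytes: int, rate_gbps: float) -> float:
    """Time to clock one frame onto the wire, in nanoseconds."""
    if rate_gbps <= 0:
        raise ValueError("rate must be positive")
    # bits divided by Gbit/s gives nanoseconds directly.
    return frame_bytes * 8 / rate_gbps

for rate in (10, 25, 40, 100):
    small = serialization_delay_ns(64, rate)
    large = serialization_delay_ns(1500, rate)
    print(f"{rate:>3}G: 64 B = {small:7.2f} ns, 1500 B = {large:8.2f} ns")
```

The delay scales inversely with rate, which is why moving a market data feed from 10G to 25G shortens per-frame wire time even when the optics themselves add similar latency.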

Standards expectations are anchored in IEEE Ethernet behavior and the optics’ electrical interfaces. For PHY characteristics and behavior at the MAC/PHY boundary, see IEEE 802.3; for the optics side, follow the applicable module standards and compliance guidance referenced by vendors and industry bodies, and check vendor datasheets for timing and jitter performance claims. [Source: IEEE 802.3]

Low-latency optics for trading: what to choose in 10G, 25G, 40G, and 100G

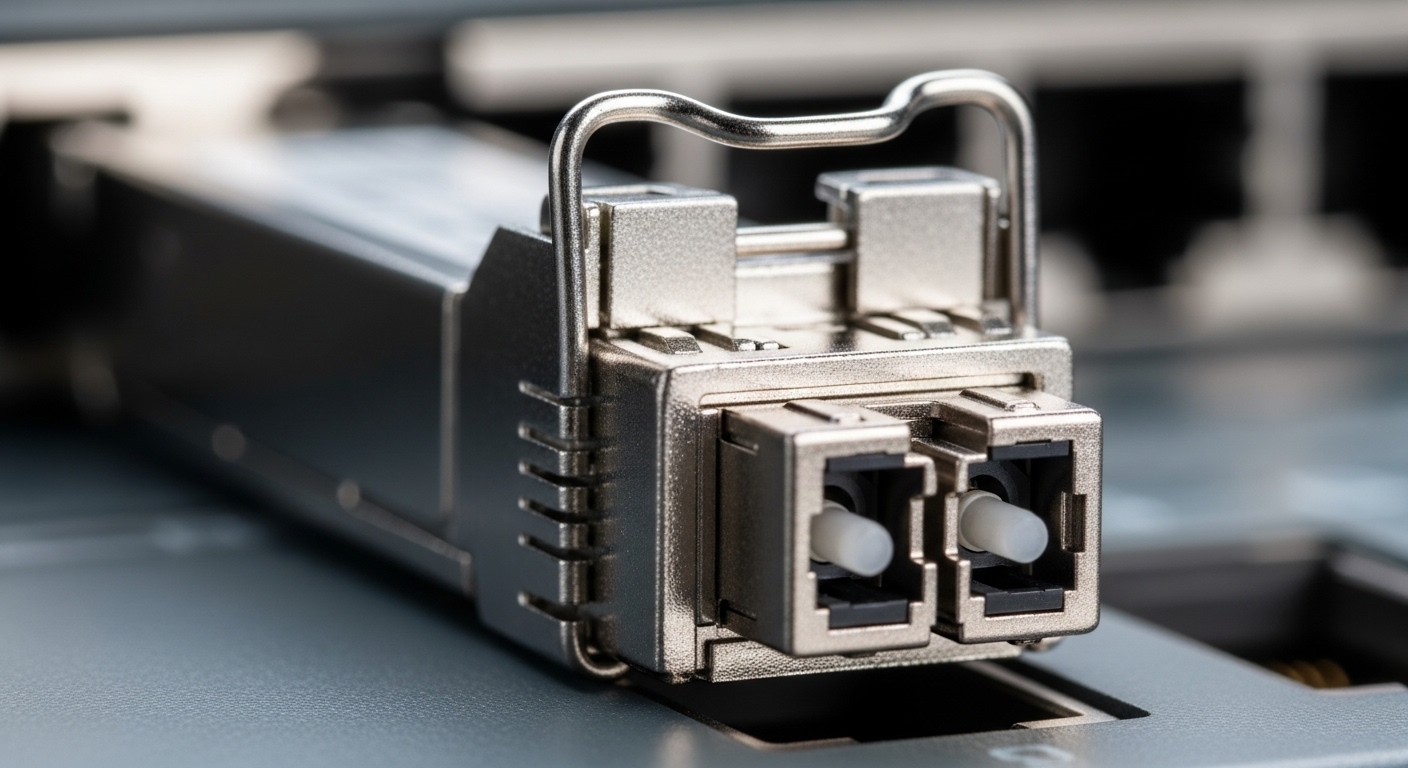

Trading networks commonly land on 10G, 25G, 40G, or 100G depending on switch generation and the mix of order entry, market data, and internal replication. The selection hinges on reach (short intra-rack vs moderate row-to-row), fiber type (OM3/OM4 vs SMF), connector style (duplex LC or MPO), and power budget. If you are targeting sub-millisecond paths across a co-location footprint, you often prefer short-reach multimode for in-row links and single-mode for longer runs, where multimode’s modal dispersion limits reach.

Spec comparison you can use during procurement

Below is a practical comparison of commonly deployed module classes. Treat these as representative; always confirm exact part numbers with your switch vendor’s compatibility list.

| Module class | Typical wavelength | Reach | Line rate | Connector | Power (typ.) | DOM | Operating temp |
|---|---|---|---|---|---|---|---|
| SFP+ SR (10G) | 850 nm | 300 m (OM3), 400 m (OM4) | 10.3125 Gbps | Duplex LC | ~0.7 to 1.0 W | Yes (I2C) | 0 to 70 °C (typ.) |
| SFP28 SR (25G) | 850 nm | 70 m (OM3), 100 m (OM4) | 25.78125 Gbps | Duplex LC | ~1.0 W | Yes (I2C) | -5 to 70 °C (typ.) |
| QSFP+ SR4 (40G) | 850 nm | 100 m (OM3), 150 m (OM4) | 4 × 10.3125 Gbps | MPO-12 | ~1.5 W | Yes | 0 to 70 °C (typ.) |
| QSFP28 SR4 (100G) | 850 nm | 70 m (OM3), 100 m (OM4) | 4 × 25.78125 Gbps | MPO-12 | ~2.5 to 3.5 W | Yes | 0 to 70 °C (typ.) |
| CFP2/CFP4 FR/ER (100G SMF) | 1310/1550 nm | 2 km to 40 km (varies) | 100 Gbps | LC/SC (varies) | ~5 to 10 W | Yes | -10 to 70 °C (typ.) |

For concrete examples, engineers frequently evaluate vendor parts like Cisco SFP-10G-SR, Finisar optics such as FTLX8571D3BCL (10G 850 nm class), and FS.com offerings like SFP-10GSR-85 for short-reach multimode. These are not endorsements; they are reference points to help you map “what a module looks like on paper” to “what it does in your switch.” Always verify exact compliance and DOM register behavior in your platform. [Source: Cisco SFP product datasheet] [Source: Finisar module datasheets]

Practical rule: match fiber plant to module type

In trading facilities, fiber plant is often mature: OM3/OM4 multimode for short runs and SMF for longer or routed paths. If your patch panels are already labeled with OM4 and your link budget supports it, SR optics can be a simple win. If you are forced into longer runs or higher connector loss, you will need SMF optics with a tighter budget and more careful inspection of splices and cleaning procedures.
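
A rough way to check whether the link budget supports a given run is a loss-only estimate like the sketch below. Every figure in the example call is a placeholder, not a datasheet value, and the model ignores dispersion and other penalties that the IEEE link models account for, so treat a small positive margin with suspicion.

```python
def link_margin_db(tx_min_dbm: float, rx_sens_dbm: float, length_m: float,
                   atten_db_per_km: float, connectors: int,
                   loss_per_connector_db: float, splices: int = 0,
                   loss_per_splice_db: float = 0.1) -> float:
    """Loss-only optical margin: power budget minus estimated channel loss."""
    budget = tx_min_dbm - rx_sens_dbm
    channel_loss = (length_m / 1000.0 * atten_db_per_km
                    + connectors * loss_per_connector_db
                    + splices * loss_per_splice_db)
    return budget - channel_loss

# Placeholder inputs: replace with worst-case numbers from your module
# datasheet and your measured patch-panel losses.
margin = link_margin_db(tx_min_dbm=-7.0, rx_sens_dbm=-10.0, length_m=100,
                        atten_db_per_km=3.0, connectors=4,
                        loss_per_connector_db=0.5)
print(f"estimated margin: {margin:.2f} dB")
```

With these placeholder inputs, four mated connector pairs consume most of the budget, which is the usual reason a short multimode run still ends up marginal.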

Pro Tip: In field measurements, the “latency stability” problem is often not the optical path length but the receive power operating point. Keep the received optical power centered in the transceiver’s recommended range and your jitter behavior becomes far more repeatable than when links hover near sensitivity limits.
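
One way to turn that tip into a monitoring rule is to classify each link by where its RX power sits inside the module's supported window. This is a sketch under assumptions: the 2 dB guardband and the example range are hypothetical, and the real limits should come from the module datasheet or its DOM alarm thresholds.

```python
def rx_operating_point(rx_dbm: float, rx_min_dbm: float, rx_max_dbm: float,
                       guard_db: float = 2.0) -> str:
    """Classify RX power relative to the supported range and a guardband."""
    if rx_min_dbm >= rx_max_dbm:
        raise ValueError("rx_min_dbm must be below rx_max_dbm")
    if rx_dbm < rx_min_dbm or rx_dbm > rx_max_dbm:
        return "out_of_range"
    if rx_dbm - rx_min_dbm < guard_db:
        return "near_sensitivity"   # little headroom before errors appear
    if rx_max_dbm - rx_dbm < guard_db:
        return "near_overload"      # consider an attenuator on very short runs
    return "centered"

# Hypothetical supported range of -10.0 to +0.5 dBm.
for rx in (-4.0, -9.2, -0.5, -11.0):
    print(rx, rx_operating_point(rx, -10.0, 0.5))
```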

Deployment scenario: a co-location leaf-spine with low-latency optics

Consider a 3-tier trading network in a co-location facility: two 48-port 25G ToR switches per pod, dual-homed to aggregation switches, with 8 uplinks of 25G per ToR. You also have a market data gateway cluster connected with 25G links over short intra-row fiber, and you target stable failover under maintenance. In one deployment, we ran OM4 multimode for all last-mile connections at 100 m max, using SFP28 SR modules at 25G and LC jumpers with verified polarity. During acceptance testing, we monitored DOM temperature and RX power and set alerts at thresholds aligned to the vendor’s minimum/maximum operating ranges.

Operationally, the field engineer workflow looked like this: before cutover, we cleaned every LC connector with a lint-free wipe and an approved cleaner, then verified link by checking DOM for RX power and absence of “loss of signal” events. After enabling spanning-tree changes and routing policies, we ran a 30-minute packet timestamp experiment using hardware timestamping on the edge switch and compared jitter distributions before and after optics swaps. The winning modules were the ones that stayed inside their DOM guardbands even when rack ambient temperature moved from 22 C to 28 C over the test window.
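
The before-and-after jitter comparison can be sketched as a summary of latency samples from each capture. The sample data below is synthetic and only illustrates the shape of the comparison; the function name and the choice of nearest-rank percentiles are assumptions, not a description of the tooling used in that deployment.

```python
import math
import statistics

def latency_summary(samples_ns):
    """Summarize latency samples (nanoseconds) from one timestamp capture."""
    if not samples_ns:
        raise ValueError("no samples")
    ordered = sorted(samples_ns)

    def percentile(p):
        # Nearest-rank percentile: smallest sample with at least p% of data at or below it.
        rank = max(1, math.ceil(p * len(ordered) / 100))
        return ordered[min(rank, len(ordered)) - 1]

    return {
        "median_ns": statistics.median(ordered),
        "p99_ns": percentile(99),
        "p99_9_ns": percentile(99.9),
        "spread_ns": ordered[-1] - ordered[0],
        "stdev_ns": statistics.pstdev(ordered),
    }

# Synthetic captures: "before" wanders over 10 ns, "after" over 4 ns.
before = [500 + (i % 10) for i in range(1000)]
after = [500 + (i % 4) for i in range(1000)]
summary_before = latency_summary(before)
summary_after = latency_summary(after)
print("before:", summary_before)
print("after: ", summary_after)
```

Comparing tail percentiles and spread, not just the median, is what exposes a module whose jitter only shows up occasionally.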

Selection criteria checklist for a financial network transceiver

When you are choosing transceivers for trading networks, you are not just buying optics; you are buying a behavior profile under stress. Use this ordered checklist during evaluation, procurement, and rollout.

- Distance and fiber type: confirm OM3/OM4 vs SMF, connector loss, and worst-case link budget including patch panels.

- Switch compatibility: consult the switch vendor’s transceiver compatibility matrix; validate with your exact switch model and firmware.

- DOM support and register behavior: verify alarms you need (temperature, bias current, RX power) and ensure your monitoring stack can read them reliably.

- Operating temperature range: trading racks can run hot; confirm module spec and confirm airflow assumptions during peak.

- Power and thermal impact: higher-density optics can increase local thermal load; ensure the line card cooling margin is sufficient.

- Vendor lock-in risk: test third-party modules early; some switches enforce stricter authentication or calibration behavior.

- Optical margin and cleaning tolerance: prefer modules with practical sensitivity margin so that routine maintenance does not push links into marginal regimes.

- Failure mode profile: evaluate RMA history, warranty terms, and whether failures present as soft DOM warnings or hard link drops.

For standards and operational expectations, anchor your Ethernet behavior assumptions in IEEE 802.3 PHY/MAC references and vendor datasheets. For monitoring and transceiver behavior, rely on the vendor’s DOM documentation and your switch’s platform guide. [Source: IEEE 802.3]

Common mistakes and troubleshooting patterns in trading optics

Trading networks punish small mistakes. Below are concrete failure modes we have seen during rollouts, with root causes and fixes that reduce downtime quickly.

“Link up, but jitter and drops appear under load”

Root cause: RX power sits near sensitivity threshold due to dirty connectors, excessive patch loss, or a too-optimistic link budget. Clock recovery behavior becomes unstable and packet loss increases only when traffic patterns stress the link.

Solution: read DOM RX power continuously; clean, inspect, and if necessary re-terminate LC connectors; measure end-to-end insertion loss with a calibrated light source and optical power meter, and use an OTDR to locate the lossy splice or connector. Re-run timestamp tests after each change and confirm RX power remains centered.

“DOM alarms without link failure, then sudden outages”

Root cause: Thermal margin is too thin. Modules heat up with higher utilization and ambient temperature, causing laser bias drift and triggering warnings that are ignored until the link hard-fails.

Solution: track temperature and bias current over time; adjust airflow, verify fan tray operation, and confirm rack temperature setpoints. If needed, move to modules with wider temperature specs or reduce optical density per line card.
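
Tracking drift over time can be as simple as fitting a line to logged DOM samples and extrapolating to the warning threshold. The sketch below assumes samples are `(hours, value)` pairs and that drift is roughly linear over the window, which will not hold for every failure; the threshold and the log data are hypothetical.

```python
def slope_per_hour(samples):
    """Least-squares slope of (hours, value) samples."""
    n = len(samples)
    if n < 2:
        raise ValueError("need at least two samples")
    mean_t = sum(t for t, _ in samples) / n
    mean_v = sum(v for _, v in samples) / n
    sxx = sum((t - mean_t) ** 2 for t, _ in samples)
    if sxx == 0:
        raise ValueError("samples must span more than one timestamp")
    sxy = sum((t - mean_t) * (v - mean_v) for t, v in samples)
    return sxy / sxx

def hours_to_threshold(samples, threshold):
    """Linear extrapolation from the last sample.

    Returns None if the trend is flat, moving away from the threshold,
    or the threshold has already been crossed.
    """
    slope = slope_per_hour(samples)
    _, last_value = samples[-1]
    remaining = threshold - last_value
    if slope == 0 or remaining / slope < 0:
        return None
    return remaining / slope

# Hypothetical log: laser bias creeping up 0.01 mA per hour from 6.0 mA.
bias_log = [(h, 6.0 + 0.01 * h) for h in range(25)]
eta = hours_to_threshold(bias_log, threshold=8.5)
print(f"slope: {slope_per_hour(bias_log):.4f} mA/h, hours to warning: {eta:.0f}")
```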

“Works in one switch, flaps in another”

Root cause: Switch-specific transceiver qualification differences: firmware may use different thresholding for LOS, or the PHY may apply different equalization strategies. Some modules pass basic link but behave poorly with the target platform’s calibration.

Solution: validate on a staging switch with the exact firmware version. If you run third-party optics, keep a compatibility test matrix by switch model and firmware. Confirm DOM readings and check for authentication or transceiver ID mismatches.
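
A compatibility test matrix does not need special tooling; a keyed record per switch model, firmware version, and module part number is enough to start. The sketch below is one possible shape, with hypothetical field names, pass criteria, and identifiers.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class CompatResult:
    link_up: bool        # link established on the staging switch
    dom_readable: bool   # monitoring stack could read DOM values
    flaps_in_soak: int   # link flaps observed during the soak test

# Keyed by (switch model, firmware version, module part number).
matrix = {}

def record(switch_model, firmware, part_number, result):
    matrix[(switch_model, firmware, part_number)] = result

def is_qualified(switch_model, firmware, part_number):
    """A combination qualifies only if it was tested and passed every check."""
    result = matrix.get((switch_model, firmware, part_number))
    return (result is not None and result.link_up
            and result.dom_readable and result.flaps_in_soak == 0)

# Hypothetical entries: same module, two firmware versions.
record("switch-A", "1.2.3", "SR-MODULE-X", CompatResult(True, True, 0))
record("switch-A", "1.3.0", "SR-MODULE-X", CompatResult(True, False, 2))
print(is_qualified("switch-A", "1.2.3", "SR-MODULE-X"))
print(is_qualified("switch-A", "1.3.0", "SR-MODULE-X"))
```

Treating an untested combination as unqualified, rather than assuming it inherits a pass from a nearby firmware version, is the design choice that catches the "works in one switch, flaps in another" case.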

“Polarity mistakes after patching”

Root cause: Transmit and receive fibers are flipped at some patch panel. On a duplex LC link a clean swap normally keeps the link down, with loss of signal reported at both ends; erratic performance after a polarity fix usually traces to connectors that were contaminated or partially seated during re-patching.

Solution: verify polarity end to end with a visual fault locator or a light source and power meter; label patch cables consistently; re-clean and re-seat connectors after any polarity correction.

Cost and ROI: what budgets usually miss

Prices swing widely by data rate, reach, and sourcing model. In many enterprise and co-location environments, a short-reach 10G SFP+ module can land in the tens of dollars, while 25G SFP28 and 40G QSFP+ are often higher; 100G QSFP28 typically costs substantially more. OEM modules generally carry higher unit cost but may reduce integration risk; third-party modules can cut acquisition cost but increase validation effort and sometimes increase the rate of “compatibility surprises.”

ROI should include TCO: not only purchase price, but also labor hours for validation, downtime risk, and failure handling. If third-party optics reduce cost by 20% but increase failure or flapping incidents, the net ROI can turn negative once you account for engineer time and trading downtime. A pragmatic approach is to run a pilot batch, record failure mode patterns, and compare RMA rates under your actual rack temperatures and traffic mixes.
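
The arithmetic behind that warning is easy to sketch. All inputs below are made-up illustrations, not market prices or observed incident rates; the point is only that a 20% unit discount can be outweighed by validation labor and incident cost.

```python
def optics_tco(unit_price, units, validation_hours, hourly_rate,
               expected_incidents, cost_per_incident):
    """Total cost of ownership: purchase + validation labor + incident cost."""
    return (unit_price * units
            + validation_hours * hourly_rate
            + expected_incidents * cost_per_incident)

# Hypothetical scenario: 200 modules, third-party units 20% cheaper
# but needing more validation and causing one extra incident.
oem = optics_tco(unit_price=50, units=200, validation_hours=10,
                 hourly_rate=150, expected_incidents=1, cost_per_incident=5000)
third_party = optics_tco(unit_price=40, units=200, validation_hours=40,
                         hourly_rate=150, expected_incidents=2, cost_per_incident=5000)
print(f"OEM: {oem}, third-party: {third_party}, difference: {third_party - oem}")
```

Replace the incident cost with your own estimate of engineer time and trading impact; that single input usually dominates the result.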

FAQ

What makes a financial network transceiver different from regular datacenter optics?

It is less about marketing and more about operational reliability under tight conditions: stable DOM telemetry, predictable behavior across temperature, and validated compatibility with your specific switch firmware. In trading networks, you also care about how quickly optics recover after resets and how consistently receive power sits within spec.

Should we prioritize OM4 multimode or single-mode for low-latency trading links?

For very short runs, OM4 multimode is often sufficient and operationally convenient. For longer paths, higher loss tolerance needs, or more complex routing, single-mode optics can provide better control of optical margin, but it demands stricter cleaning and link budget discipline.

Do we really need DOM monitoring for trading optics?

Yes, if you want early-warning signals instead of reactive troubleshooting. DOM-based monitoring lets you detect drift in bias current and temperature before a link fails, enabling maintenance windows that avoid market-impacting outages.

Can third-party transceivers be safe in a trading environment?

They can be, but you must validate compatibility and DOM behavior on your exact switch models and firmware versions. Use a staged rollout, track DOM alarms, and compare performance under your real traffic patterns rather than only passing basic link tests.

What should we measure during acceptance testing?

Measure DOM RX power and temperature over a representative load window, and run timestamp-based jitter and loss verification. Also test failover behavior by simulating maintenance events like link resets to confirm recovery time and stability.

How do we avoid optics-related outages during maintenance?

Use connector cleaning discipline, verify polarity after any patching, and confirm optical power margins with a meter. Then log DOM events during the change window so you can correlate any anomalies to specific optics swaps.

If you want the next step, map your current fiber plant to a module reach plan and validate against your switch compatibility matrix using a small pilot batch. Start with low-latency transceiver compatibility testing and build a measurement-first rollout that protects your latency budget.

Author bio: I build and deploy low-latency network systems for exchange-adjacent environments, where optics, firmware, and failure modes are measured like engineering physics. I am obsessed with PMF for infrastructure: prove reliability in production early, then scale with ruthless feedback loops.