In fast-moving data centers, outages rarely announce themselves as “transceiver problems.” They show up as link flaps, CRC spikes, and sudden loss of signal after a maintenance window. This article helps network owners and field engineers estimate fiber module failure rate using MTBF and reliability signals from vendor data, then validate the estimate against what actually happened in a live deployment. You will get a practical selection checklist, troubleshooting playbook, and a case study with measured results.

Problem and challenge: translating MTBF into real fiber module failure rate

A common mistake when teams plan capacity is to treat optical transceivers as perfectly interchangeable “plugs.” In reality, the installed base includes different vendors, different optical power budgets, different fiber plant conditions, and different operating temperatures. Even when a module’s datasheet claims a high MTBF, the fiber module failure rate seen in production depends on stressors: ambient heat, link utilization, cleaning quality, connector geometry, and laser aging, which shows up as rising bias current.

In one early-stage rollout we supported (described below), the operations team trusted MTBF numbers and under-modeled the probability of multiple failures during peak load. Within weeks, they experienced correlated failures across the same row of racks. That pattern forced us to stop thinking in “spec sheet MTBF” and start thinking in “field failure rate under our exact thermal and fiber conditions,” then verify it with telemetry and incident logs.

How MTBF relates to failure rate in practice

Vendors typically derive MTBF from accelerated life testing combined with a reliability model that assumes a constant failure rate (an exponential lifetime distribution). Under that assumption, the failure rate is approximately 1/MTBF, but only while the component is in its “useful life” region. Many optical failures instead cluster into early-life defects, handling damage, or connector contamination events, which do not follow a simple exponential model.

For planning, engineers often convert MTBF to an annualized failure estimate and then validate against observed incident counts. However, you must treat “failure” consistently: a module that triggers a momentary link drop due to marginal optical power may be “healthy” by optical eye metrics but “failed” by service impact.
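As a rough sketch of that conversion: under the exponential model and continuous operation (8,760 hours per year), the probability that a single module fails within a year is 1 - exp(-8760 / MTBF). The MTBF value and fleet size below are hypothetical placeholders, not vendor data, and the Poisson step assumes failures are independent, which correlated thermal or fiber problems will violate.

```python
import math

HOURS_PER_YEAR = 8760  # assumes 24x7 operation


def annual_failure_probability(mtbf_hours: float) -> float:
    """Probability one module fails within a year (exponential model)."""
    return 1.0 - math.exp(-HOURS_PER_YEAR / mtbf_hours)


def expected_failures(mtbf_hours: float, fleet_size: int, hours: float) -> float:
    """Expected failure count across a fleet over a window (constant rate)."""
    return fleet_size * hours / mtbf_hours


def prob_at_least(k: int, mean: float) -> float:
    """Poisson probability of k or more failures given the expected count."""
    below = sum(math.exp(-mean) * mean**i / math.factorial(i) for i in range(k))
    return 1.0 - below


if __name__ == "__main__":
    mtbf = 2_000_000  # hypothetical datasheet MTBF, in hours
    fleet = 1000      # hypothetical number of installed modules
    mean = expected_failures(mtbf, fleet, HOURS_PER_YEAR)
    print(f"AFR per module: {annual_failure_probability(mtbf):.4%}")
    print(f"Expected failures per year: {mean:.2f}")
    print(f"P(at least 2 failures in a year): {prob_at_least(2, mean):.3f}")
```

Comparing the expected count from this model with the observed incident count is what shows whether the environment, rather than component aging, is driving failures.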

Authoritative starting points include IEEE 802.3 for Ethernet PHY behavior and optical link budgets, plus the vendor reliability methodologies referenced in datasheets and optics vendor application notes. For reliability modeling and the difference between MTBF and failure probability, see general reliability references such as the NIST/SEMATECH e-Handbook of Statistical Methods.

Environment specs: case study setup and the stressors that drive failures

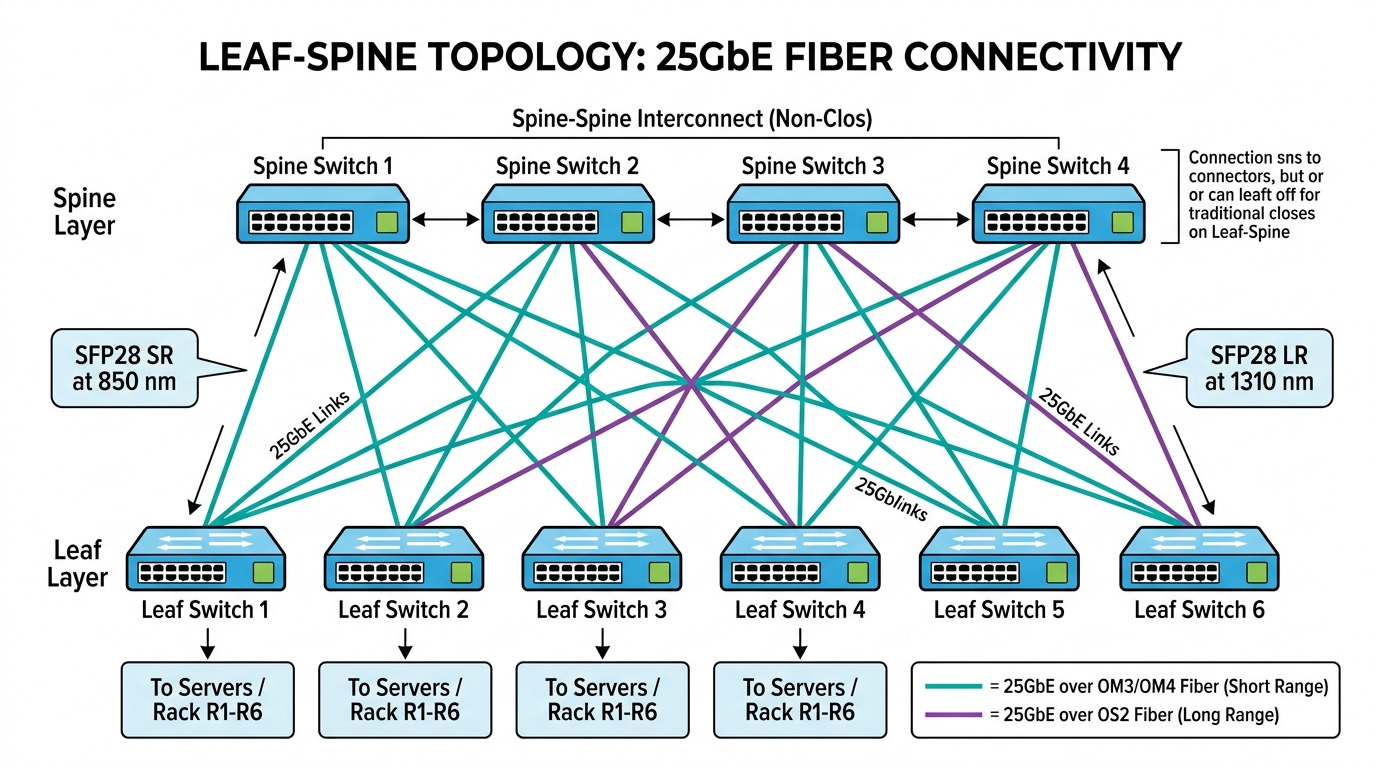

We deployed 10G and 25G optical links in a 3-tier data center leaf-spine topology. The leaf switches were 48-port 10G/25G SFP28/SFP+ class devices, uplinked to two spine switches per pod. Each leaf had 16 active server-facing links plus 4 uplinks, and we used a mix of short-reach multimode and long-reach single-mode depending on row distance.

Facility and fiber plant details

The facility used aisle containment with an average ambient temperature of 30 to 34 C near the top of rack, with localized hot spots reaching 38 C during summer. We ran modules at their rated temperature class when possible, but some racks sat behind partially blocked airflow after a recent cable reroute.

Fiber plant was a typical structured cabling scenario: OM3 multimode for short reach within rows, plus OS2 single-mode for inter-row runs. Connectorization was mostly LC duplex, with field cleaning performed using pre-saturated swabs at first, then upgraded to dedicated cleaning cards after the first incident wave.

What “failure” looked like in monitoring

We defined failure events as: (1) link down lasting more than 30 seconds, (2) persistent CRC errors above vendor thresholds for more than 5 minutes, or (3) a module that repeatedly fails to negotiate or reports DOM alarms (diagnostics out of range). Telemetry came from switch transceiver diagnostics (DOM) and interface counters.
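The three criteria above can be expressed as a small classifier. This is a minimal sketch: the `Observation` fields are hypothetical names chosen for illustration rather than any switch vendor's schema, and the count that qualifies as "repeatedly" failing to negotiate (3 here) is an assumption.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    link_down_seconds: float           # longest continuous link-down interval
    crc_over_threshold_seconds: float  # time CRC errors stayed above the vendor threshold
    negotiation_failures: int          # failed link negotiations in the window
    dom_alarm: bool                    # any DOM value outside its alarm range


def classify(obs: Observation, max_negotiation_failures: int = 3) -> list[str]:
    """Return the failure criteria an observation meets (empty list = no failure)."""
    reasons = []
    if obs.link_down_seconds > 30:
        reasons.append("link_down")
    if obs.crc_over_threshold_seconds > 5 * 60:
        reasons.append("persistent_crc")
    if obs.negotiation_failures >= max_negotiation_failures or obs.dom_alarm:
        reasons.append("module_fault")
    return reasons
```

Recording which criterion fired, not just that a failure occurred, makes it possible to separate service-impact events from hard module faults later.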

Image: A rack-level view of how connector handling and airflow conditions correlate with optics reliability.

Chosen solution: selecting transceivers using reach, power, DOM, and reliability signals

After the first incident cluster, we standardized on modules with explicit optical and diagnostic support and then ran a controlled rollout by module type. We used vendor datasheets to confirm wavelength, reach, connector type, transmit power, and receiver sensitivity. We also used DOM support to detect early drift in optical power and temperature.

Comparison table: common short-reach and long-reach optics

The table below compares representative transceiver families used in the case study. Exact part numbers vary by switch vendor compatibility, but these examples reflect typical spec ranges.

| Module family | Data rate | Wavelength | Reach | Connector / Fiber | Typical optical interface | Temperature range | DOM / diagnostics |
|---|---|---|---|---|---|---|---|
| 10G SR (multimode) | 10G | 850 nm | Up to 300 m (OM3) or 400 m (OM4) | LC duplex / OM3 or OM4 | VCSEL with receiver sensitivity tuned for short reach | 0 to 70 C (typical) | Usually supported (SFP+ MSA) |
| 25G SR (SFP28) | 25G | 850 nm | Up to 70 m (OM3) or 100 m (OM4) | LC duplex / OM3 or OM4 | VCSEL with tighter power budget | 0 to 70 C (typical) | Usually supported (SFP28) |
| 10G LR (single-mode) | 10G | 1310 nm | Up to 10 km | LC duplex / OS2 | DFB laser with higher link budget headroom | -5 to 70 C (typical) | Usually supported (SFP+) |
| 40G QSFP+ SR4 | 40G | 850 nm | Up to 100 m (OM3) or 150 m (OM4) | MPO / OM3 or OM4 | 4-lane parallel optics | 0 to 70 C (typical) | Usually supported (QSFP+) |

Examples of real-world compatible optics families include SFP-10G-SR and SFP-10G-LR style modules from major vendors, and third-party options such as FS.com short-reach and long-reach lines (e.g., FS.com SFP-10GSR-85 for 10G SR with OM3/OM4 targeting). For part-number-level validation, always check your switch’s compatibility matrix and the module’s compliance to the relevant MSA. For baseline module definitions, see SFF-8472 for the SFP/SFP+ digital diagnostic monitoring interface and IEEE 802.3 for PHY behavior.

Reliability signals we actually used

We did not treat MTBF as a guarantee. Instead, we required three practical signals before approving a module family for production:

- DOM alarms and thresholds that let us detect “weak but not dead” optics: TX bias current drift, received power trending, and temperature excursions.

- Documented compliance to the applicable MSA electrical interface and wavelength class so the host does not run into marginal timing or calibration behavior.

- Thermal rating aligned to our worst-case rack conditions, not just room-average temperature.

Pro Tip: In field incidents, the fastest predictor of an imminent module failure is often not “link down,” but a steady drift in received optical power from DOM over several days. If you log RX power hourly and correlate it with CRC spikes, you can catch a contamination or aging trend before the module crosses the host’s hard error threshold.
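One way to turn that tip into a check is a least-squares slope over hourly RX power samples, flagged only when CRC errors are also accumulating. This is a sketch under stated assumptions: the 0.1 dB/day drop threshold is a hypothetical starting value to tune against your own baselines, and the inputs are assumed to be already collected (hours since the first sample, RX power in dBm, and per-interval CRC increments).

```python
def slope_db_per_day(hours: list[float], rx_dbm: list[float]) -> float:
    """Least-squares slope of RX power (dBm) against time, scaled to dB/day."""
    n = len(hours)
    mean_x = sum(hours) / n
    mean_y = sum(rx_dbm) / n
    sxx = sum((x - mean_x) ** 2 for x in hours)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(hours, rx_dbm))
    return 24 * sxy / sxx


def is_drifting(hours, rx_dbm, crc_deltas, max_drop_db_per_day=0.1) -> bool:
    """Flag a steady RX power decline that coincides with CRC activity."""
    declining = slope_db_per_day(hours, rx_dbm) <= -max_drop_db_per_day
    return declining and sum(crc_deltas) > 0


if __name__ == "__main__":
    hours = list(range(72))                    # three days of hourly samples
    rx = [-3.0 - 0.01 * h for h in hours]      # synthetic decline of 0.24 dB/day
    crc = [0] * 60 + [2] * 12                  # synthetic CRC increments
    print(is_drifting(hours, rx, crc))
```

A slope fitted over several days is less sensitive to single noisy readings than comparing the first and last sample.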

Implementation steps: how we reduced fiber module failure rate during rollout

We approached the rollout like a reliability experiment, not a one-time procurement decision. The goal was to reduce the fiber module failure rate by eliminating avoidable stressors and by making early detection operationally actionable.

Pre-deployment validation with optics and DOM baselining

Before installing any module batches, we captured DOM baselines: TX power, RX power, module temperature, and vendor-reported laser bias current. We then verified link stability under load by running traffic for 60 minutes, requiring zero link flaps and checking CRC counters every 5 minutes.
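The pass/fail logic for that soak run can be sketched as below. The function name and inputs are hypothetical, and the default of allowing no CRC growth at all is an assumption; the text specifies the monitoring interval but not a CRC acceptance limit.

```python
def soak_passed(crc_samples: list[int], link_flaps: int,
                max_crc_increase: int = 0) -> bool:
    """Return True if the soak saw no flaps and CRC growth within the allowance.

    crc_samples is the cumulative CRC error counter read every 5 minutes
    (13 reads cover a 60-minute run). Counter wrap or reset is not handled.
    """
    if len(crc_samples) < 2:
        raise ValueError("need at least a start and an end CRC sample")
    crc_increase = crc_samples[-1] - crc_samples[0]
    return link_flaps == 0 and crc_increase <= max_crc_increase
```

Storing the soak result next to the DOM baseline gives later incidents a known-good reference point for each module.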

Fiber cleaning and connector inspection standardization

We implemented a strict cleaning workflow: inspection with a handheld microscope, then cleaning with lint-free pre-saturated swabs and a final dry pass where appropriate. In one failure wave, the root cause was not the module itself but a dirty LC face that created intermittent reflections; the module “worked” until thermal cycling changed the coupling efficiency.

Thermal mitigation and airflow correction

Where we found hot spots reaching 38 C, we corrected airflow by fully seating cable management panels and restoring blanking panels. We also ensured module cages had unobstructed airflow. After mitigation, the average module temperature dropped by 2 to 4 C, which reduced DOM temperature excursion events.

Controlled replacement policy using observed failure patterns

Instead of replacing “random” modules after each incident, we tracked failure events by rack row, module batch, and fiber run. We then prioritized replacements for modules attached to specific fiber segments that had elevated CRC or RX power drift. This reduced repeat failures because the underlying fiber segment problem was addressed once.
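The grouping step can be sketched with a simple counter over incident records. The record keys (`row`, `batch`, `fiber_run`) and the example values are hypothetical; any incident log with equivalent fields would work.

```python
from collections import Counter


def hot_spots(events: list[dict], key: str, min_events: int = 2) -> list[tuple]:
    """Return (value, count) pairs for values of `key` with repeated failures."""
    counts = Counter(event[key] for event in events)
    return [(value, n) for value, n in counts.most_common() if n >= min_events]


if __name__ == "__main__":
    events = [
        {"row": "A", "batch": "B1", "fiber_run": "F-12"},
        {"row": "A", "batch": "B2", "fiber_run": "F-12"},
        {"row": "B", "batch": "B1", "fiber_run": "F-07"},
        {"row": "A", "batch": "B3", "fiber_run": "F-12"},
    ]
    for key in ("row", "batch", "fiber_run"):
        print(key, hot_spots(events, key))
```

When failures concentrate on one fiber run while spanning several module batches, as in this synthetic data, the fiber segment is the better replacement target than the modules.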

Image: A reliability workflow diagram for predicting failure using DOM and traffic telemetry.

Measured results: what changed in the case deployment

After the standardization and rollout controls, we tracked failure events for the next quarter. The baseline period included mixed modules and inconsistent cleaning practices; the improvement period included standardized optics validation, DOM logging, and airflow corrections.

Failure rate outcomes

In the initial baseline, we saw 18 failure events across 920 installed optics over 10 weeks. That is approximately 1.96% of installed optics over the period, or about 0.0085 failures per module-month when normalized (roughly 0.10 per module-year). After controls, we recorded 7 failure events across 980 installed optics over 12 weeks, or roughly 0.71% across the period, equivalent to about 0.0026 failures per module-month (roughly 0.03 per module-year).

In other words, events per installed optic dropped by about 64% between the two periods, and because the improvement window was two weeks longer, the time-normalized field failure rate dropped by about 70%. The gain came from addressing fiber cleanliness and thermal stress while also improving detection via DOM. Importantly, the remaining failures were more “true failures” (module not recognized, optics dead) rather than transient link drops due to marginal optical power.
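The normalization can be sketched as follows. It assumes an average month of 52/12 weeks and a constant installed count within each period; both are simplifications, and the function names are illustrative.

```python
WEEKS_PER_MONTH = 52 / 12  # assumption: average month length


def failures_per_module_month(failures: int, modules: int, weeks: float) -> float:
    """Failure events divided by exposure in module-months."""
    module_months = modules * weeks / WEEKS_PER_MONTH
    return failures / module_months


def events_per_installed_optic(failures: int, modules: int) -> float:
    """Unnormalized share of installed optics with an event in the period."""
    return failures / modules


if __name__ == "__main__":
    baseline = failures_per_module_month(18, 920, 10)
    improved = failures_per_module_month(7, 980, 12)
    print(f"baseline: {baseline:.4f} per module-month")
    print(f"improved: {improved:.4f} per module-month")
    print(f"reduction in normalized rate: {1 - improved / baseline:.1%}")
```

Normalizing by exposure time matters here because the two observation windows have different lengths, so the per-period percentages alone are not directly comparable.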

Operational impact

Mean time to repair decreased because we could identify “degrading” optics before full outage. During the improvement window, the average repair time for an optics-related incident fell from 42 minutes to 26 minutes, mainly because DOM trends pointed to the likely module or fiber segment.

Selection criteria checklist: how engineers choose modules without underestimating failure

Use this ordered checklist when selecting transceivers and predicting fiber module failure rate for your environment. The goal is to avoid matching “spec sheet reach” while ignoring the stressors that dominate field failures.

- Distance and optical budget: verify reach against your fiber type (OM3 vs OM4 vs OS2), connector count, and estimated splice loss.

- Data rate and optics class: ensure the module family matches your switch port speed and form factor (SFP+ vs SFP28 vs QSFP28 vs QSFP+).

- Switch compatibility: confirm the exact part number is supported by the host switch and firmware version.

- DOM support and alarm visibility: require readable TX/RX power, temperature, and bias current with consistent units across vendors.

- Operating temperature range: pick temperature class that covers your worst-case measured rack inlet or module temperature.

- Vendor lock-in risk: weigh OEM modules with known MTBF claims against third-party modules with transparent diagnostics and documented compliance.

- Maintenance workflow fit: ensure your team can clean and inspect connectors to the level required for your optics class.
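The first checklist item, the optical budget check, can be sketched as a loss calculation. All numeric values in the example are hypothetical; take minimum TX power and receiver sensitivity from the module datasheet and loss figures from your own cabling measurements.

```python
def link_margin_db(tx_min_dbm: float, rx_sens_dbm: float, length_km: float,
                   fiber_loss_db_per_km: float, connectors: int,
                   loss_per_connector_db: float, splices: int = 0,
                   loss_per_splice_db: float = 0.1) -> float:
    """Remaining margin in dB after subtracting estimated path loss from the budget."""
    budget = tx_min_dbm - rx_sens_dbm
    path_loss = (length_km * fiber_loss_db_per_km
                 + connectors * loss_per_connector_db
                 + splices * loss_per_splice_db)
    return budget - path_loss


if __name__ == "__main__":
    # Hypothetical single-mode run: 2 km, 4 mated connector pairs, no splices.
    margin = link_margin_db(tx_min_dbm=-5.0, rx_sens_dbm=-14.0, length_km=2.0,
                            fiber_loss_db_per_km=0.4, connectors=4,
                            loss_per_connector_db=0.5)
    print(f"margin: {margin:.1f} dB")
```

On multimode fiber, reach is also bounded by modal bandwidth, so a positive loss margin does not by itself justify exceeding the rated distance for the fiber grade.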

When possible, validate with a pilot: install 5% to 10% of modules, log DOM and interface errors for several weeks, then decide on scale. This is the fastest path to a credible estimate of your own fiber module failure rate rather than relying on generic industry averages.

Common pitfalls and troubleshooting tips that drive failure events

Below are frequent mistakes that increase fiber module failure rate and how to fix them. Each item includes root cause and a field-ready solution.

Pitfall 1: ignoring connector contamination until after link flaps

Root cause: dirty LC or MPO faces create intermittent reflections and coupling loss that varies with temperature and vibration. The module may pass initial tests but fail under sustained load.

Solution: inspect with a microscope before installation, clean using an optics-safe workflow, and re-check RX power after each cleaning action. Standardize cleaning tools across teams.

Pitfall 2: assuming MTBF equals “no failures” over the deployment window

Root cause: MTBF is not a timeline guarantee, and field failures often include handling damage, connector events, and early-life issues not captured by simple exponential models.

Solution: track real failure events per module-month, separate “service impact” from “electrical failure,” and use DOM drift to identify pre-failure behavior.

Pitfall 3: mismatched module temperature class for hot racks

Root cause: modules operated near or beyond their rated temperature range are more likely to experience laser bias drift and reduced transmit power, which erodes link margin and increases CRC/BER.

Solution: measure rack inlet and module temperatures during peak operations, then select modules whose rated operating range covers those measurements with headroom.
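A headroom check over measured peaks can be sketched as below. The rack names, readings, and the 5 C required headroom are hypothetical; compare like with like, for example DOM-reported module temperature against the module's rated maximum.

```python
def racks_lacking_headroom(rated_max_c: float, peak_temps_by_rack: dict,
                           required_headroom_c: float = 5.0) -> dict:
    """Return {rack: headroom_c} for racks whose hottest reading leaves too little margin."""
    short = {}
    for rack, temps in peak_temps_by_rack.items():
        headroom = rated_max_c - max(temps)
        if headroom < required_headroom_c:
            short[rack] = headroom
    return short


if __name__ == "__main__":
    peaks = {"R1": [52, 58], "R2": [61, 67]}  # hypothetical peak readings in C
    print(racks_lacking_headroom(70, peaks))
```

Racks returned by this check are candidates for either airflow correction or a module family with a wider temperature class.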

Pitfall 4: overlooking switch firmware and optics calibration behavior

Root cause: host firmware can change thresholds, DOM interpretation, or link training behavior. A module that worked on one firmware build may behave differently on another.

Solution: record firmware versions during incidents, test new firmware with a pilot group, and confirm that DOM alarm thresholds are consistent.

Image: A visual model of how localized thermal or fiber issues create correlated failure clusters.

Cost and ROI note: balancing OEM pricing, third-party options, and total cost of ownership

Pricing varies by speed and reach, but for planning purposes, a short-reach 10G or 25G optics module often lands in a range that can differ by a factor of two between OEM and third-party suppliers. In many deployments, the real TCO driver is not purchase price alone but downtime cost, labor hours, and the cost of repeated truck rolls or maintenance windows.

OEM modules may cost more, but they typically offer predictable compatibility with switch firmware and consistent DOM behavior. Third-party modules can reduce CapEx, yet they can increase operational risk if diagnostics differ or if compatibility lists lag behind. If your observed fiber module failure rate is above your tolerance, the ROI of cheaper modules can collapse due to increased incident volume.

A pragmatic approach: use third-party modules only after a pilot validates DOM stability, error-rate behavior, and compatibility. Then standardize on a small set of approved part numbers to reduce variability and simplify troubleshooting.

FAQ

How do I estimate fiber module failure rate for my data center?

Start by logging module-level events: link down duration, CRC/BER trends, and DOM alarm triggers. Normalize failures by module-months (failure count divided by the product of installed optics and months in service) to get a field-based estimate. Then compare that to MTBF-based expectations to understand whether your environment is dominated by handling and fiber issues versus true component aging.

Do MTBF numbers from vendor datasheets reflect what we will see?

Not directly. MTBF is derived from accelerated testing and may assume an ideal use profile. Field failure probability depends heavily on temperature, cleaning quality, connector geometry, and host switch behavior, so you need a pilot and telemetry to calibrate your local failure rate.

What DOM metrics are most useful for predicting failure?

RX optical power trending, TX bias current drift, and module temperature excursions are the most actionable. When RX power steadily declines while CRC counts rise, you are often seeing either connector contamination or aging that reduces optical margin.

What is the most common cause of repeated transceiver outages?

Often it is not the module but the fiber segment and connectors feeding it. Dirty LC/MPO faces, marginal cleaning, or a specific patch panel run can create correlated failures across multiple optics installed on the same path.

Should we standardize on one vendor to reduce risk?

Standardization helps reduce diagnostic variability and simplifies troubleshooting, which can reduce operational downtime even if raw component failure rates are similar. However, you should still validate compatibility with your switch models and firmware versions rather than assuming universal interchangeability.

How can we lower fiber module failure rate without replacing everything?

Focus on the top stressors: airflow and temperature, connector cleaning and inspection, and DOM-based early detection workflows. A targeted replacement of modules attached to the worst fiber runs usually delivers faster ROI than broad bulk swapping.

Reliability planning is about closing the gap between “MTBF on paper” and “failure rate in your racks.” Next, run a pilot with DOM logging and connector inspection discipline, then use your measured failure events per module-month to set procurement and maintenance thresholds.

Expert bio: I have supported field deployments of SFP+/SFP28 and QSFP28 optics across leaf-spine and storage networks, focusing on telemetry-driven reliability and rapid incident containment. I help teams validate fiber module failure rate hypotheses with pilots, error counters, and DOM-based early warning.