Disaggregated Optics Transceivers in Open Line Systems: Fit

When an enterprise moves toward disaggregated optics, the hard part is rarely “can it light fiber?” It is aligning transceiver behavior, optical budget, and control-plane expectations across an open line system without breaking interoperability. This article helps network and infrastructure leaders evaluate transceivers for open line networking while balancing operational risk, budget, and measurable ROI. It is written for engineers who have to pass link validation, manage optics inventory, and troubleshoot real field failures.

What disaggregated optics means inside an open line system

In disaggregated optical architectures, the optical transport function is separated from the packet switching function. Practically, that means the line side (optical transceivers, mux/demux, and optical monitoring) can be treated as a pluggable resource, while routers and switches focus on packet processing. In an open line system, the goal is to avoid a tightly coupled “vendor bundle” where the transceiver, line terminal, and monitoring stack are inseparable.

Most open line deployments still rely on standards-based management interfaces for optics control, typically an I2C-based two-wire interface with standardized digital diagnostics (DOM): SFF-8472 for SFP+ modules, and SFF-8636 or CMIS for QSFP28 and QSFP-DD. Optical performance is then governed by two sources. IEEE 802.3 defines the reach targets for common Ethernet rates and optics classes, while vendor datasheets define the actual receiver sensitivity, transmitter power, and safety compliance of a given module.

Control and governance boundaries you must define up front

From an enterprise architecture perspective, you need explicit governance for three boundaries: (1) optics compatibility with switch line cards, (2) optical budget policy for each fiber plant segment, and (3) monitoring and telemetry requirements for operational assurance. If you do not standardize these boundaries, “open” becomes a procurement slogan rather than a maintainable engineering model. Field teams then inherit ambiguous failure modes such as “link comes up intermittently” or “DOM readings differ by vendor.”

Pro Tip: In open line systems, the most common operational surprise is not optical power. It is DOM interpretation drift: different vendors map temperature, bias current, and received power into slightly different scaling or units. If your automation thresholds assume one vendor’s behavior, you can trigger false maintenance events or miss true degradation.
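One way to reduce interpretation drift is to decode raw DOM values into a single set of engineering units before any threshold logic runs. The sketch below assumes an SFP/SFP+ module that follows the SFF-8472 diagnostic layout with internal calibration (temperature in 1/256 °C, bias current in 2 µA steps, optical power in 0.1 µW steps). The function and field names are illustrative, and externally calibrated or QSFP-class modules (SFF-8636, CMIS) need different handling.

```python
import math
import struct


def uw_tenths_to_dbm(raw: int) -> float:
    """Convert a power reading in units of 0.1 microwatt to dBm."""
    if raw == 0:
        return float("-inf")  # no light detected
    milliwatts = raw * 1e-4  # 0.1 uW == 1e-4 mW
    return 10.0 * math.log10(milliwatts)


def decode_sff8472_dom(raw: bytes) -> dict:
    """Decode the 10 real-time diagnostic bytes (A2h offsets 96-105).

    Assumes internal calibration; externally calibrated modules need
    their slope/offset constants applied first (not handled here).
    """
    if len(raw) != 10:
        raise ValueError("expected exactly 10 bytes (offsets 96-105)")
    # Big-endian: signed temperature, then four unsigned 16-bit fields.
    temp, vcc, bias, tx, rx = struct.unpack(">hHHHH", raw)
    return {
        "temperature_c": temp / 256.0,   # 1/256 degC per LSB
        "vcc_v": vcc * 100e-6,           # 100 uV per LSB
        "tx_bias_ma": bias * 2e-3,       # 2 uA per LSB
        "tx_power_dbm": uw_tenths_to_dbm(tx),
        "rx_power_dbm": uw_tenths_to_dbm(rx),
    }


# Hypothetical raw capture, not taken from a real module.
sample = bytes([0x1E, 0x00, 0x80, 0xE8, 0x0B, 0xB8, 0x13, 0x88, 0x03, 0xE8])
print(decode_sff8472_dom(sample))
```

Keeping the decode step separate from the alerting step means a vendor-specific quirk can be corrected in one place instead of in every threshold rule.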

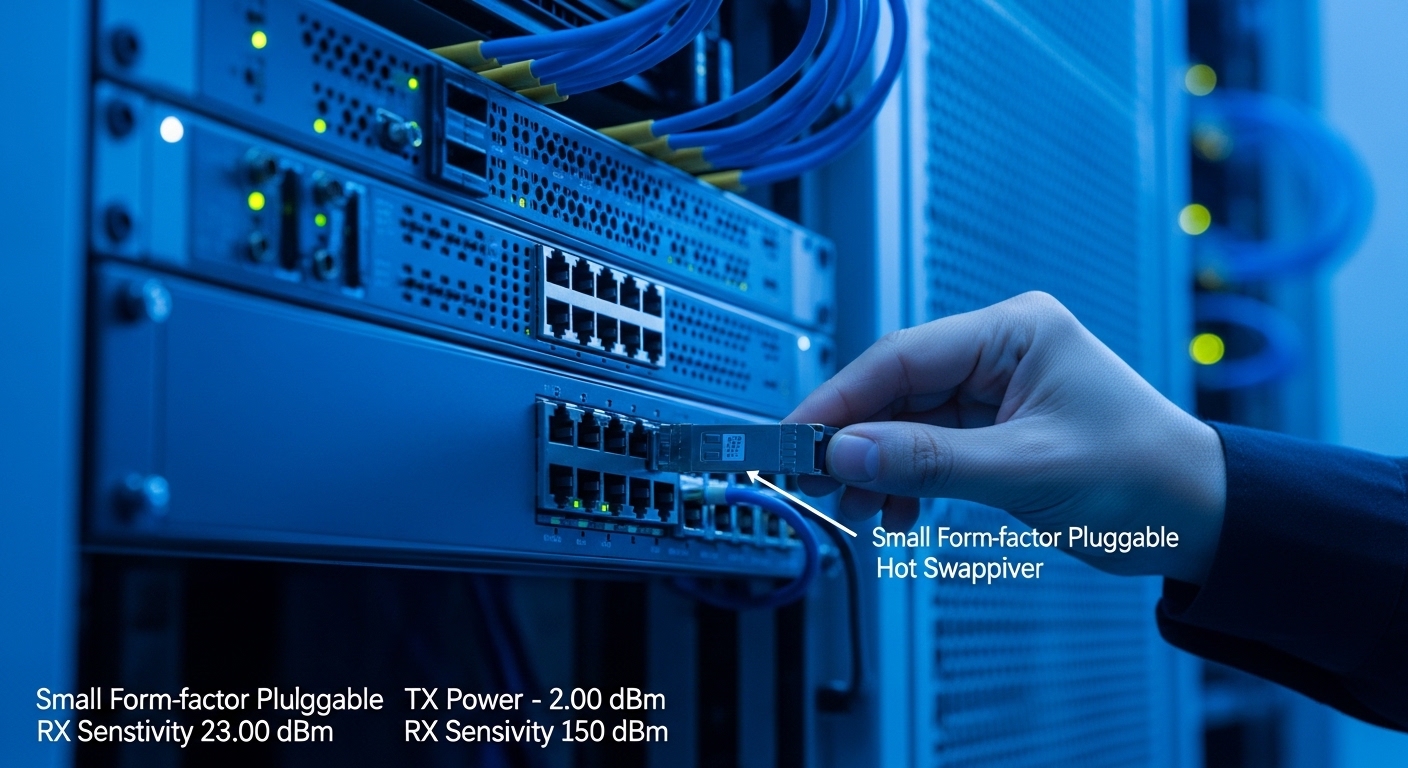

Transceiver selection: specs that actually affect link success

Selection should start with the physical-layer target (data rate and modulation), then move to reach and optics class, and only then to form factor. For Ethernet links, IEEE 802.3 defines optical compliance classes for common distances, but your real reach is limited by fiber attenuation, connector loss, splice loss, and the transceiver’s specified power and receiver sensitivity. In disaggregated optics, you also need to verify that the transceiver’s electrical and management interface works with your line terminal.

Key spec fields to compare across vendors

Engineers routinely compare the same small set of numbers because they directly determine whether a link passes margin requirements. These include wavelength, minimum and maximum transmit power, receiver sensitivity, OMA or equivalent optical power metrics, connector type (LC/SC), and temperature range. For diagnostics, confirm DOM support and that your platform accepts the module vendor's diagnostic register layout.

| Parameter | 10G SR (Example: 850 nm) | 10G LR (Example: 1310 nm) | 25G SR (Example: 850 nm) |
|---|---|---|---|
| Target data rate | 10G Ethernet | 10G Ethernet | 25G Ethernet |
| Nominal wavelength | 850 nm multimode | 1310 nm singlemode | 850 nm multimode |
| Typical reach (class) | ~300 m over OM3, ~400 m over OM4 (depends on OMA) | ~10 km over SMF (depends on budget) | ~70 m over OM3, ~100 m over OM4 for standard 25GBASE-SR; longer reaches require vendor-specific extended-reach variants |
| Connector | LC (common) | LC (common) | LC (common) |
| DOM / diagnostics | Supported via I2C (vendor dependent) | Supported via I2C (vendor dependent) | Supported via I2C (vendor dependent) |
| Operating temperature | Commonly 0 to 70 °C for standard | Commonly -5 to 70 °C for some enterprise modules | Commonly 0 to 70 °C for standard |
| Compatibility risk | Medium: platform acceptance differs by vendor | Lower if platform supports standard optics profile | High: 25G optics profiles vary across line cards |

Concrete examples you may encounter in procurement include Cisco-branded and compatible optics such as Cisco SFP-10G-SR for multimode, or third-party modules like Finisar FTLX8571D3BCL (10G SR family) and FS.com SFP-10GSR-85 (10G SR, 850 nm). Treat these as reference points, not guarantees: your switch vendor’s compatibility matrix and your line terminal’s optics profile enforcement determine whether the module is accepted.

For authoritative baseline reach and compliance concepts, review IEEE 802.3 physical-layer clauses for the relevant Ethernet rates and optics types. Also cross-check vendor datasheets for actual optical power and receiver sensitivity values. [Source: IEEE 802.3](https://standards.ieee.org/standard/802_3)

Real-world deployment scenario: open line in a leaf-spine with 48-port ToR switches

Consider a 3-tier data center leaf-spine design with 48-port 10G ToR switches feeding a spine using 100G uplinks. The facility has OM4 multimode in server and ToR corridors and SMF for cross-row links. The architecture team chooses disaggregated optics so that optical reach and form factor can be standardized across multiple switch models, while line terminals are kept modular.

In this scenario, the field team uses 10G SR optics for ToR-to-edge aggregation within the OM4 reach envelope, and 10G LR or 25G/100G equivalents for longer spans on SMF. They validate each link with a measured optical budget that includes fiber attenuation plus connector and splice losses; a common practice is to allocate 0.75 dB to a typical LC connector pair and 0.2 dB per splice as a starting point, then replace assumptions with measured results where possible. After rollout, engineers use DOM telemetry to track per-module bias current and received power, ensuring that links remain within a configured margin window before degradation becomes visible as CRC errors.
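The budget arithmetic described above can be sketched as follows. The 0.75 dB per connector pair and 0.2 dB per splice are the starting allocations from the text; the fiber attenuation, minimum transmit power, and receiver sensitivity in the example call are placeholder values, not datasheet figures, and should be replaced with measured and vendor-published numbers.

```python
from dataclasses import dataclass


@dataclass
class LinkPlan:
    length_km: float
    fiber_loss_db_per_km: float      # assumption: from fiber spec or OTDR trace
    connector_pairs: int
    splices: int
    connector_loss_db: float = 0.75  # starting allocation per mated LC pair
    splice_loss_db: float = 0.2      # starting allocation per splice

    def total_loss_db(self) -> float:
        """Sum fiber, connector, and splice losses for the span."""
        return (self.length_km * self.fiber_loss_db_per_km
                + self.connector_pairs * self.connector_loss_db
                + self.splices * self.splice_loss_db)


def link_margin_db(plan: LinkPlan, min_tx_dbm: float,
                   rx_sensitivity_dbm: float) -> float:
    """Worst-case margin: minimum launch power, minus loss, minus sensitivity."""
    return (min_tx_dbm - plan.total_loss_db()) - rx_sensitivity_dbm


# Hypothetical cross-row SMF link; every optical number here is a placeholder.
plan = LinkPlan(length_km=2.0, fiber_loss_db_per_km=0.4,
                connector_pairs=4, splices=2)
margin = link_margin_db(plan, min_tx_dbm=-8.0, rx_sensitivity_dbm=-14.0)
print(f"loss={plan.total_loss_db():.2f} dB, margin={margin:.2f} dB")
```

With these placeholder inputs the loss is 4.20 dB and the margin is 1.80 dB. The sketch ignores dispersion and other link penalties, so treat the result as an upper bound on real margin and compare it against whatever headroom your budget policy requires.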

The ROI comes from standardizing optics SKUs and reducing “truck rolls” caused by mismatched transceiver profiles during switch refreshes. However, the same team also discovers that not all compatible optics expose identical DOM scaling, so automation thresholds need calibration per optics family. The deployment succeeds when the governance layer requires a compatibility test for each optics family against each line terminal platform.

Decision checklist for disaggregated optics in open line deployments

Below is the ordered checklist engineers and IT directors use when selecting disaggregated optics for open line systems. It is structured to prevent late-stage surprises during burn-in and acceptance testing.

- Distance and fiber type: confirm OM3/OM4 grade or SMF type, then map each link to an optics reach class.

- Optical budget margin: compute budget using measured fiber plant losses and vendor receiver sensitivity; require headroom for aging and cleaning variability.

- Switch and line terminal compatibility: verify optics profile acceptance on the exact platform model and software version.

- DOM support and telemetry mapping: confirm digital diagnostic support via I2C and validate units/ranges used by your monitoring system.

- Operating temperature and airflow: align module temperature range with actual chassis thermal profiles and fan curves.

- Connector and patching standard: ensure LC/SC polarity and cleaning procedures match your operational work instructions.

- Vendor lock-in risk: evaluate whether the platform enforces “known good” optics and whether firmware updates break compatibility.

- Supply chain and lifecycle: confirm lead times, end-of-life policy, and whether optics families are stable across switch refresh cycles.

Common mistakes and troubleshooting in disaggregated optics

Even well-designed disaggregated optics programs fail when teams underestimate physical-layer realities or automation assumptions. The pitfalls below are common in enterprise open line systems, with root cause and practical remediation.

Link flaps due to insufficient optical margin

Root cause: the computed budget used optimistic assumptions for connector and splice loss, or the fiber run includes degraded patch cords. Aging and contamination then reduce received power until the link becomes unstable and flaps.

Solution: remeasure with an optical power meter and, if possible, an OTDR; clean connectors with verified procedures; replace worst patch cords; then adjust thresholds based on measured DOM received power trends.

“Module not recognized” after software upgrade

Root cause: the line terminal firmware changes optics profile enforcement, or the platform expects specific DOM register behavior. Compatible modules may fail acceptance despite meeting optical specs.

Solution: validate optics families against the target software release during a controlled lab test; pin versions when necessary; update compatibility matrices and require regression testing for optics acceptance.
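One way to make the compatibility matrix enforceable is to key it on optics family, platform, and software release, and to treat any combination without a recorded pass as not approved. The sketch below uses hypothetical family, platform, and release names and is not tied to any vendor tool.

```python
from typing import Dict, Iterable, List, Tuple

# (optics_family, platform, software_release) -> lab acceptance passed?
Key = Tuple[str, str, str]


def blocked_families(matrix: Dict[Key, bool], families: Iterable[str],
                     platform: str, target_release: str) -> List[str]:
    """Return families with no passing lab result on the target release.

    A missing entry is treated the same as a failed test, so an upgrade
    cannot proceed on the strength of results from an older release.
    """
    return [
        family for family in families
        if not matrix.get((family, platform, target_release), False)
    ]


# Hypothetical lab results.
results = {
    ("10G-SR-famA", "leaf-x", "1.0"): True,
    ("10G-SR-famA", "leaf-x", "1.1"): True,
    ("25G-SR-famB", "leaf-x", "1.0"): True,  # not yet retested on 1.1
}
print(blocked_families(results, ["10G-SR-famA", "25G-SR-famB"], "leaf-x", "1.1"))
```

Here the 25G family blocks the upgrade to release 1.1 until it is retested, which is the regression-testing behavior the remediation calls for.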

False alarms from mismatched DOM thresholds

Root cause: monitoring scripts treat vendor-specific diagnostic scaling as if it were uniform. Temperature or bias current may be reported over a different range, and received power may be scaled or interpreted differently from one vendor to the next.

Solution: calibrate monitoring by capturing baseline DOM telemetry for each optics family under known conditions; set thresholds using percentiles of stable behavior rather than absolute numbers from a single vendor.
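A minimal sketch of percentile-based thresholds, assuming you already have baseline received-power samples (in dBm) grouped by optics family. The 1st/99th percentile bounds and the 20-sample minimum are arbitrary illustrative choices, not recommendations from any standard.

```python
import statistics
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def family_thresholds(samples: Iterable[Tuple[str, float]],
                      low_pct: int = 1, high_pct: int = 99,
                      min_samples: int = 20) -> Dict[str, Tuple[float, float]]:
    """Derive (low, high) rx-power alert bounds per optics family.

    samples: (optics_family, rx_power_dbm) pairs captured on stable links.
    Families with too few samples are skipped rather than guessed at.
    """
    by_family = defaultdict(list)
    for family, rx_dbm in samples:
        by_family[family].append(rx_dbm)

    thresholds = {}
    for family, values in by_family.items():
        if len(values) < min_samples:
            continue
        # 99 cut points; index k-1 is the k-th percentile.
        cuts = statistics.quantiles(values, n=100, method="inclusive")
        thresholds[family] = (cuts[low_pct - 1], cuts[high_pct - 1])
    return thresholds
```

Because each family gets its own bounds, a module family that consistently reports slightly lower received power does not trip alarms tuned for another vendor.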

Receiver saturation from overly strong transmit power

Root cause: short links with low-loss jumpers can deliver received power above the receiver's specified overload (maximum input) level, causing bit errors that look like noise.

Solution: use calibrated attenuators in test, shorten or adjust patching, or choose an optics variant with appropriate power class; verify with received power measurements from DOM and optical tools.
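Sizing the attenuator is simple arithmetic once the received power and the receiver overload level are known. The sketch below assumes the overload figure comes from the module datasheet; the 1 dB headroom and 1 dB attenuator step are illustrative defaults.

```python
import math


def required_attenuation_db(rx_power_dbm: float, rx_overload_dbm: float,
                            headroom_db: float = 1.0,
                            step_db: float = 1.0) -> float:
    """Smallest attenuation, in step_db increments, that brings received
    power down to at least headroom_db below the overload level."""
    excess = rx_power_dbm - (rx_overload_dbm - headroom_db)
    if excess <= 0:
        return 0.0  # already inside the safe window
    return math.ceil(excess / step_db) * step_db


# Hypothetical short jumper: +1.5 dBm received, +0.5 dBm overload rating.
print(required_attenuation_db(1.5, 0.5))
```

After fitting the attenuator, recheck that the attenuated power still clears receiver sensitivity with margin, since too much attenuation trades one failure mode for another.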

Cost and ROI: where disaggregated optics pays off and where it does not

In most enterprises, optics cost is not just the module price. Total cost of ownership includes acceptance testing labor, inventory complexity, spares strategy, and the operational time spent troubleshooting. OEM optics are often priced higher, but they reduce compatibility risk because they are validated with the platform’s intended optics profile.

Third-party modules typically offer a meaningful unit cost reduction, but the ROI depends on governance maturity. In a mature environment with a compatibility lab process, teams can reduce optics spend while maintaining uptime by standardizing on a limited set of validated optics families. In immature environments, the “savings” can evaporate quickly due to truck rolls, extended burn-in cycles, and monitoring rework.

Realistic pricing varies by rate and reach class, but for budgeting: 10G SR modules often fall in the mid to high tens of dollars each for reputable third-party channels, while OEM can be higher; 25G and 100G modules can be several times that, especially for QSFP-DD and long-reach variants. TCO also includes power and cooling impact: optics power draw is generally modest compared to switch ASICs, but swapping to more efficient optics can reduce heat at the margins in high-density line cards.

From a governance standpoint, require a documented acceptance workflow that includes optics insertion tests, DOM telemetry validation, and link error-rate checks. This approach turns disaggregated optics from a procurement experiment into a controlled engineering program.
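For the error-rate check, a useful question is how long an error-free soak must run before it says anything about bit error ratio. With zero errors observed over N bits, the standard zero-error confidence bound gives BER below -ln(1 - CL) / N at confidence level CL. The sketch below applies that bound; the target BER and line rate in the example are inputs you choose, shown here for a 10G-class link.

```python
import math


def bits_for_ber_confidence(target_ber: float, confidence: float = 0.95) -> float:
    """Error-free bits needed to claim BER < target_ber at this confidence."""
    return -math.log(1.0 - confidence) / target_ber


def soak_seconds(target_ber: float, line_rate_bps: float,
                 confidence: float = 0.95) -> float:
    """Minimum error-free soak duration at full line rate."""
    return bits_for_ber_confidence(target_ber, confidence) / line_rate_bps


# Example inputs: BER target of 1e-12 at a 10.3125 Gb/s line rate.
print(f"{soak_seconds(1e-12, 10.3125e9):.0f} s")
```

For these inputs the bound works out to roughly five minutes of error-free traffic at full line rate. Lower utilization stretches the required duration proportionally, and any observed error invalidates the zero-error bound.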

FAQ: disaggregated optics in open line systems

How do I verify that disaggregated optics are compatible with my switch line terminal?

Start with the vendor compatibility matrix for your exact switch model and software version. Then run a lab acceptance test that includes link bring-up, DOM telemetry capture, and a sustained error-rate check. If your monitoring depends on DOM scaling, validate that mapping too.

Do disaggregated optics still follow IEEE 802.3 physical-layer requirements?

Yes, the optics types are generally designed to meet IEEE 802.3-defined electrical and optical performance targets for the relevant Ethernet rates and reach classes. However, “meets spec” does not guarantee platform acceptance, because optics profile enforcement and DOM behavior can differ by vendor and firmware.

What is the biggest operational risk when moving to disaggregated optics?

The biggest risk is not optical power alone; it is the combination of interoperability and telemetry assumptions. Mismatched DOM interpretation can cause automation errors, while firmware updates can change optics acceptance behavior. Governance and regression testing are the mitigations.

Should we standardize on OEM optics or third-party modules?

Many enterprises use a hybrid approach: OEM for the most critical or hardest-to-validate optics classes, and third-party for well-governed, repeatedly validated families. The decision should be based on measured compatibility success rates and your ability to run consistent acceptance testing.

How do I handle monitoring and alarms with multiple optics vendors?

Calibrate thresholds per optics family. Capture baseline DOM values under stable conditions, then set alerting based on statistically derived margins rather than absolute constants from a single vendor. Ensure your runbooks include vendor-specific interpretation notes.

What troubleshooting step should happen first for a failing link?

Begin with fiber inspection and cleaning, then measure optical power and check DOM received power. Only after physical-layer verification should you suspect firmware compatibility or transceiver defects. This order prevents wasting time on software when the root cause is a contaminated connector.

Disaggregated optics can improve flexibility and reduce long-term procurement friction in open line systems, but only when you treat transceivers as governed, testable components rather than interchangeable accessories.

Author bio: IT infrastructure director with hands-on experience validating Ethernet optics interoperability across leaf-spine data centers and open line optical shelves. Focus areas include optics governance, telemetry reliability, and measurable ROI from standardized spares and acceptance testing.