Long-haul links fail in ways that look mysterious until you measure the right impairment: chromatic dispersion. If you are planning a 40G or 100G route across installed single-mode fiber, this article compares practical chromatic dispersion transceiver approaches so you can hit reach targets without burning budget. It helps network engineers, field technicians, and data center operations teams validate optics against IEEE 802.3 requirements and real vendor link budgets.

What “chromatic dispersion transceiver” means in real links

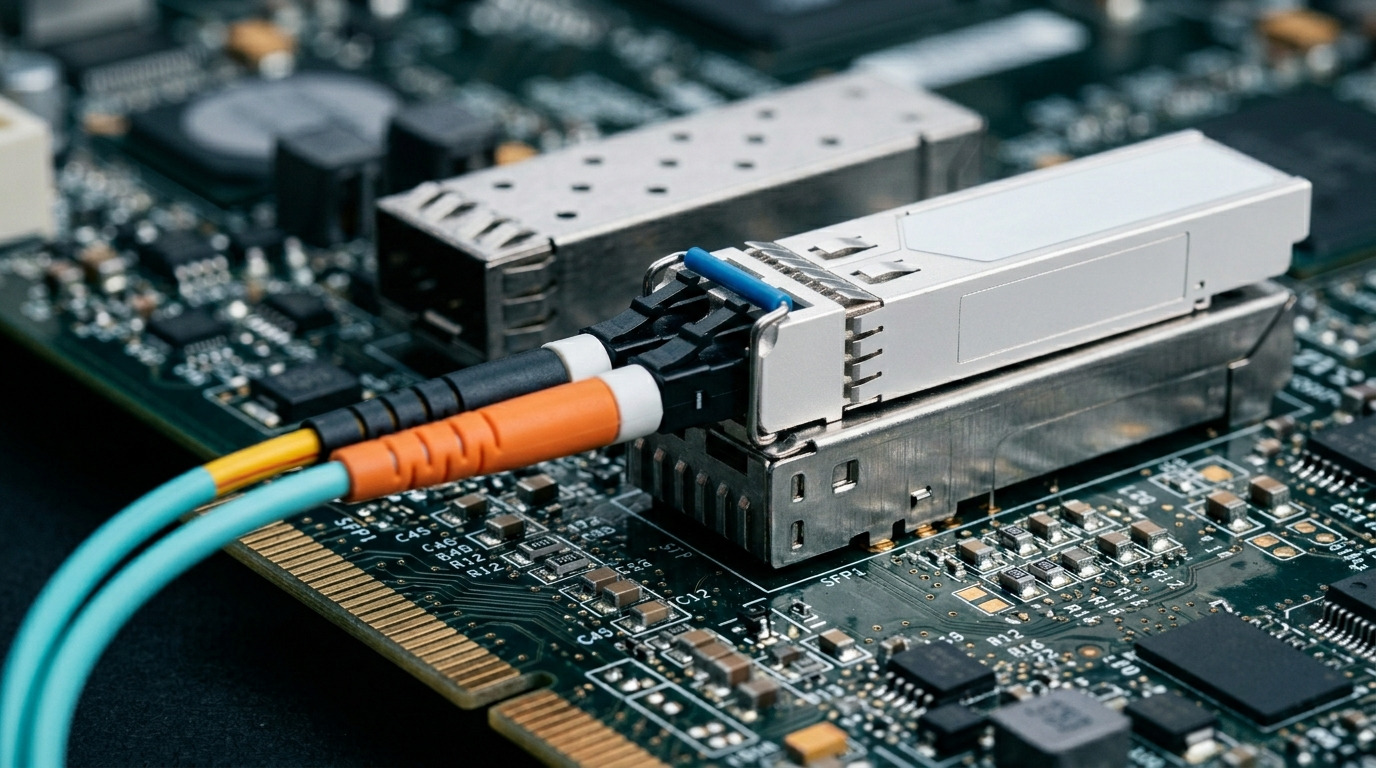

In optics, “chromatic dispersion transceiver” is commonly used to describe a module type selected (or designed) to mitigate dispersion effects that spread pulses as light travels. In practice, the key variable is not the label on the cage; it is the optical strategy: pre-compensation, dispersion-compensating optics, or a wavelength and encoding choice that fits the fiber plant. For long-haul deployments, your selection should be driven by the fiber’s dispersion coefficient (often around 16 to 17 ps/nm/km at 1550 nm for standard SMF) and the target bit rate.

How dispersion impacts reach (and why transceivers matter)

Chromatic dispersion spreads each pulse in time because the different wavelength components of the signal travel at slightly different group velocities. That spread causes inter-symbol interference and raises the BER. At 10G you can often tolerate more dispersion than at 100G, and the math is unforgiving at higher symbol rates: for direct-detect signals, dispersion tolerance falls roughly with the square of the symbol rate. Many modern long-reach transceivers rely on vendor-tuned transmitter output and receiver DSP equalization; some also incorporate dispersion compensation to extend reach on the same fiber.
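The following back-of-the-envelope sketch makes the scaling concrete. It makes two assumptions. The first is the first-order relation Δτ ≈ |D| · L · Δλ for pulse spread. The second is the common rule of thumb that direct-detect CD tolerance scales with 1/(symbol rate)². The function names, the 0.1 nm spectral width, and the 1000 ps/nm reference tolerance are hypothetical illustration values, not datasheet figures.

```python
def accumulated_cd_ps_per_nm(d_ps_per_nm_km: float, length_km: float) -> float:
    """Accumulated chromatic dispersion D * L, in ps/nm."""
    return d_ps_per_nm_km * length_km


def pulse_spread_ps(d_ps_per_nm_km: float, length_km: float, spectral_width_nm: float) -> float:
    """First-order pulse broadening |D| * L * delta-lambda, in ps.

    spectral_width_nm is the signal's effective spectral width (source
    linewidth or modulation bandwidth, whichever dominates): an assumption.
    """
    return abs(accumulated_cd_ps_per_nm(d_ps_per_nm_km, length_km)) * spectral_width_nm


def symbol_period_ps(baud_gbd: float) -> float:
    """Duration of one symbol in ps for a symbol rate given in GBd."""
    return 1000.0 / baud_gbd


def scaled_cd_tolerance(ref_tolerance_ps_nm: float, ref_baud: float, new_baud: float) -> float:
    """Rule of thumb for direct-detect links: tolerance scales as 1 / (symbol rate)^2."""
    return ref_tolerance_ps_nm * (ref_baud / new_baud) ** 2


if __name__ == "__main__":
    d = 17.0       # ps/(nm*km), typical standard SMF near 1550 nm
    length = 40.0  # km, example route
    width = 0.1    # nm, hypothetical effective spectral width
    spread = pulse_spread_ps(d, length, width)
    for baud in (10.3125, 25.78125):
        ratio = spread / symbol_period_ps(baud)
        print(f"{baud} GBd: spread {spread:.1f} ps = {ratio:.2f} symbol periods")
    # Hypothetical 10 GBd tolerance of 1000 ps/nm, rescaled to 25 GBd
    print(f"scaled tolerance: {scaled_cd_tolerance(1000.0, 10.0, 25.0):.0f} ps/nm")
```

With these inputs, the same 68 ps spread is well under one symbol period at 10G-class rates but nearly two at 25G-class rates. That is why a route that works at 10G can fail after a speed upgrade.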

From a field perspective, you confirm impact with several sources. Start with module DOM data and switch optics diagnostics. When available, add OTDR traces (for route length and loss events) and a dispersion estimate derived from the route length and fiber type recorded in your splice maps. Vendors publish link budgets that include dispersion limits, and those limits are usually the deciding factor when you are operating near maximum reach.

Pro Tip: If your switch reports “link degraded” but power levels are within range, pull the module’s DOM metrics and any signal-quality counters the platform exposes. Standard DOM covers RX power, TX power, TX bias current, and temperature. Some modules (notably coherent ones) and hosts also report pre-FEC BER, an estimated chromatic dispersion value, or DSP lock status. A dispersion-limited link frequently looks like a marginal signal rather than an obvious power failure.
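The triage logic in this tip can be written down as a small decision function. This is a sketch. The field names (`rx_power_dbm`, `pre_fec_ber`) and the threshold values in the usage are hypothetical placeholders. Take real alarm thresholds from the module's DOM data and the vendor datasheet. Pre-FEC BER is only exposed by some modules and hosts.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class OpticsReading:
    rx_power_dbm: float
    pre_fec_ber: Optional[float]  # None when the module/host does not expose it


@dataclass
class Thresholds:
    rx_min_dbm: float        # from datasheet / DOM alarm thresholds
    rx_max_dbm: float
    max_pre_fec_ber: float   # hypothetical acceptance limit


def triage(reading: OpticsReading, limits: Thresholds) -> str:
    """Classify a link as a power problem, a signal-quality problem, or healthy."""
    if not limits.rx_min_dbm <= reading.rx_power_dbm <= limits.rx_max_dbm:
        return "power: check loss, connector cleanliness, and TX output"
    if reading.pre_fec_ber is None:
        return "unknown quality: read host BER/FEC counters instead"
    if reading.pre_fec_ber > limits.max_pre_fec_ber:
        # Power in range but errors high: the typical dispersion-limited signature
        return "quality: suspect dispersion or equalization margin"
    return "healthy"


if __name__ == "__main__":
    limits = Thresholds(rx_min_dbm=-14.0, rx_max_dbm=0.5, max_pre_fec_ber=1e-5)
    print(triage(OpticsReading(rx_power_dbm=-6.0, pre_fec_ber=3e-4), limits))
```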

Performance comparison: dispersion tolerance, reach, and optics class

This head-to-head compares typical long-haul options engineers choose when dispersion is the limiting impairment. The exact reach depends on the fiber type, patch panel losses, and whether the module is tuned for a specific wavelength window.

| Option (typical) | Wavelength | Reach class | Dispersion handling | Connector | Data rate | Typical operating temp |
|---|---|---|---|---|---|---|
| Coherent long-haul transceiver (CD-tolerant) | 1550 nm band | 80 km to 120+ km (system dependent) | DSP-based dispersion compensation | LC (system dependent) | 40G/100G/200G+ | 0 °C to 70 °C (varies by vendor) |
| Direct-detect long-haul with dispersion-aware design | 1310 nm or 1550 nm (model dependent) | 10 km to 80 km (model dependent) | Improved CD tolerance; sometimes pre-emphasis | LC | 10G/25G/40G | -5 °C to 70 °C (varies by vendor) |
| Fixed-wavelength dispersion-optimized direct-detect | 1550 nm fixed | 40 km to 120 km (model dependent) | Pre-compensation or dispersion-compensating optics | LC | 10G/40G (varies) | 0 °C to 70 °C |

If you need a concrete example of part numbers that often appear in real procurement lists, start with the OEM families. Cisco and other OEMs commonly use standards-aligned optics families such as 10GBASE-LR and 40GBASE-LR4 equivalents. Third-party vendors sell compatible modules like the Finisar FTLX1471D3BCL (10GBASE-LR, 1310 nm) or the FS.com SFP-10GSR-85 (short reach, for contrast). Note that 1310 nm LR-class optics operate near the zero-dispersion wavelength of standard SMF, so they are usually loss-limited rather than dispersion-limited. For dispersion-focused long-haul, coherent modules are frequently the most robust option. Direct-detect long-haul modules are more cost-effective when your fiber plant stays within the published CD tolerance.

Direct-detect vs coherent: the practical trade

Direct-detect transceivers measure intensity only, so the phase information needed to reverse dispersion is lost at the photodiode. The receiver relies on DSP equalization that can recover only part of the penalty, and reach is bounded by the optics’ dispersion tolerance window. Coherent receivers recover both phase and amplitude, which enables far more powerful digital compensation for dispersion and polarization effects. That is why coherent solutions often win on reach and resilience. They typically cost more, however, and require a host platform that supports them.
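A minimal Python sketch illustrates why phase recovery matters. It rests on several assumptions:

- The noise-free complex field is available, as it is after coherent detection.
- Dispersion acts as a pure quadratic spectral phase φ(f) = π λ² D L f² / c.
- Compensation multiplies by the conjugate phase. This is the frequency-domain all-pass filter approach often described for coherent CD compensation.

A naive DFT keeps the sketch dependency-free (real DSPs use FFTs with block processing). The phase sign follows one of the two conventions in common use, and the function names are illustrative. The intensity |E|² after the fiber is visibly distorted, and that intensity is all a direct-detect receiver gets to work with.

```python
import cmath
import math

C_M_PER_S = 299_792_458.0


def dft(x):
    """Naive O(n^2) discrete Fourier transform (illustration only)."""
    n = len(x)
    return [sum(x[k] * cmath.exp(-2j * math.pi * m * k / n) for k in range(n)) for m in range(n)]


def idft(spectrum):
    """Naive inverse DFT matching dft() above."""
    n = len(spectrum)
    return [sum(spectrum[m] * cmath.exp(2j * math.pi * m * k / n) for m in range(n)) / n
            for k in range(n)]


def cd_phase_rad(freq_hz, d_ps_per_nm_km, length_km, wavelength_nm):
    """Quadratic spectral phase pi * lambda^2 * D * L * f^2 / c, in radians."""
    d_si = d_ps_per_nm_km * 1e-6  # 1 ps/(nm*km) = 1e-6 s/m^2
    lam_m = wavelength_nm * 1e-9
    return math.pi * lam_m ** 2 * d_si * length_km * 1e3 * freq_hz ** 2 / C_M_PER_S


def apply_cd(field, sample_rate_hz, d_ps_per_nm_km, length_km, wavelength_nm, compensate=False):
    """Apply fiber dispersion (compensate=False) or its exact inverse (compensate=True)."""
    n = len(field)
    sign = 1.0 if compensate else -1.0
    out = []
    for m, value in enumerate(dft(field)):
        f = (m if m < n // 2 else m - n) * sample_rate_hz / n  # signed bin frequency
        phase = sign * cd_phase_rad(f, d_ps_per_nm_km, length_km, wavelength_nm)
        out.append(value * cmath.exp(1j * phase))
    return idft(out)


if __name__ == "__main__":
    fs = 100e9     # 100 GSa/s, 10 ps per sample
    t0 = 20e-12    # Gaussian pulse width parameter
    tx = [complex(math.exp(-(((k - 32) / fs) ** 2) / (2 * t0 ** 2))) for k in range(64)]
    rx = apply_cd(tx, fs, 17.0, 80.0, 1550.0)                  # 80 km of standard SMF
    eq = apply_cd(rx, fs, 17.0, 80.0, 1550.0, compensate=True)  # receiver-side inverse filter
    print("peak intensity after fiber:", max(abs(v) ** 2 for v in rx))
    print("max residual after compensation:", max(abs(a - b) for a, b in zip(eq, tx)))
```

Running it shows the pulse's peak intensity dropping well below 1 after 80 km, while the compensated field matches the transmitted one to numerical precision. The same correction cannot be computed from |E|² alone, because the phase has been discarded.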

When you evaluate “chromatic dispersion transceiver” options for long-haul, treat coherent as the “dispersion insurance policy” and direct-detect as the “budget-optimized fit” if your link is well characterized.

Cost and ROI: OEM vs compatible modules under dispersion stress

Budget pressure is real: procurement managers care about unit price, while operations teams care about failure rate, support timelines, and mean time to repair. In dispersion-sensitive links, the ROI calculation must include the cost of rework and truck rolls if you choose the wrong optical class.

Typical price ranges and what drives total cost

While pricing varies by region and volume, long-haul transceivers typically land in these bands:

- Compatible direct-detect long-haul modules: roughly $100 to $500 per module.
- OEM long-haul modules: often $300 to $1,500, depending on speed and vendor support.
- Coherent transceivers and transceiver systems: usually far more, often $2,000 to $10,000+ per end, plus the cost of a platform that supports them.

TCO is not only the module cost. Consider: (1) spares stocking strategy, (2) licensing or compatibility constraints, (3) switch vendor optics policy, and (4) power consumption. For example, coherent optics can draw more power than direct-detect, affecting rack-level thermal design.
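One way to compare options on equal footing is to roll the TCO factors into a single number per option. The sketch below is illustrative. The cost model (hardware plus spares, energy over the service period, and expected truck rolls) and every input value are assumptions to replace with your own quotes, datasheet power figures, and failure history. Licensing and optics-policy costs are left out because they are platform specific.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 24 * 365


@dataclass
class OpticsOption:
    name: str
    unit_price_usd: float
    deployed: int                # modules in service (two per link)
    spares: int
    power_w: float               # per module, from the datasheet
    expected_truck_rolls: float  # over the service period (your estimate)


def tco_usd(opt: OpticsOption, years: float, usd_per_kwh: float, truck_roll_usd: float) -> float:
    """Hardware + energy + expected rework cost over the service period."""
    hardware = (opt.deployed + opt.spares) * opt.unit_price_usd
    energy_kwh = opt.deployed * opt.power_w * HOURS_PER_YEAR * years / 1000.0
    return hardware + energy_kwh * usd_per_kwh + opt.expected_truck_rolls * truck_roll_usd


if __name__ == "__main__":
    # Hypothetical inputs for a 10-link build; not vendor pricing.
    options = [
        OpticsOption("compatible direct-detect", 300.0, 20, 4, 3.5, 3.0),
        OpticsOption("coherent", 4000.0, 20, 2, 20.0, 0.5),
    ]
    for opt in options:
        print(f"{opt.name}: ${tco_usd(opt, 5, 0.15, 1200.0):,.0f} over 5 years")
```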

Compatibility and standards checks: avoiding “it lights up but fails”

A chromatic dispersion transceiver must match not only the fiber type and connector, but also the exact electrical and optical expectations of the host. IEEE 802.3 defines the PHY and optical link specifications. Pluggable-module diagnostics (DOM) follow SFF specifications such as SFF-8472 and SFF-8636, or CMIS. Even so, vendor implementations of optics diagnostics and optical power thresholds can differ.

Decision checklist engineers actually use

- Distance and dispersion budget: confirm the fiber’s dispersion coefficient and your end-to-end reach target; compare against the vendor’s maximum CD-limited reach.

- Data rate and modulation constraints: ensure the module supports the exact line rate and coding (for example, 64b/66b variants at certain speeds) required by your switch.

- Switch compatibility: verify the optics are on the switch’s supported optics list or pass the platform’s optical diagnostics thresholds.

- DOM support and thresholds: confirm the module provides DOM readings your platform can interpret (RX/TX power, bias current, temperature).

- Operating temperature: check the module’s temperature range against your site HVAC profile; long-haul remote cabinets often run hotter.

- Vendor lock-in risk: if you are using an OEM “optics policy,” evaluate whether third-party optics can be auto-recognized and whether they remain supported after firmware updates.
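The measurable items in this checklist can be encoded as a pre-deployment gate. This is a sketch with hypothetical field names and part numbers. The vendor lock-in item is a policy judgment and is left out.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass
class ModuleSpec:
    part_number: str
    data_rate_gbps: float
    cd_limited_reach_km: float  # from the vendor datasheet
    temp_min_c: float
    temp_max_c: float
    has_dom: bool


@dataclass
class LinkRequirements:
    route_km: float
    data_rate_gbps: float
    site_temp_min_c: float
    site_temp_max_c: float
    supported_parts: Set[str] = field(default_factory=set)  # empty = no list enforced


def checklist_failures(m: ModuleSpec, req: LinkRequirements) -> List[str]:
    """Return the checklist items a candidate module fails (empty list = pass)."""
    failures = []
    if m.cd_limited_reach_km < req.route_km:
        failures.append("route exceeds CD-limited reach")
    if m.data_rate_gbps != req.data_rate_gbps:
        failures.append("data rate mismatch")
    if req.supported_parts and m.part_number not in req.supported_parts:
        failures.append("not on supported optics list")
    if not m.has_dom:
        failures.append("no DOM readings")
    if m.temp_min_c > req.site_temp_min_c or m.temp_max_c < req.site_temp_max_c:
        failures.append("temperature range does not cover site profile")
    return failures
```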

Where IEEE and vendor documentation fit

Start with IEEE 802.3 for the transceiver electrical behavior and optical class requirements, then use vendor datasheets for the CD-limited reach and receiver sensitivity. For link budgeting, also consult ANSI/TIA guidance on optical cabling practices and measurement workflows where applicable. Reference: IEEE 802.3 overview. Reference: ANSI/TIA standards portal.

Common mistakes and troubleshooting: how dispersion failures look

Dispersion-related problems often masquerade as generic “bad optics” symptoms. Below are frequent failure modes field teams see, with root causes and fixes.

Power is fine, but BER is high

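Root cause: the link is within RX power range but outside the module’s chromatic dispersion tolerance window for the specific fiber length and dispersion profile. This can happen when the fiber type is misidentified, or when the route length changes during a move (for example, traffic re-routed over a longer path). Patch cords themselves add negligible dispersion, but their extra loss can erase whatever margin the dispersion penalty left.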

Solution: verify the actual fiber route length, confirm the wavelength (1310 vs 1550), and compare against the vendor’s CD-limited reach. If you are near the limit, switch to a dispersion-optimized module class or coherent optics.
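The comparison in this solution step reduces to a margin check. This sketch assumes you have the module's CD tolerance in ps/nm from its datasheet and a dispersion coefficient for the fiber at the operating wavelength. The 10 % "near the limit" threshold is an arbitrary placeholder.

```python
import math


def cd_limited_reach_km(cd_tolerance_ps_per_nm: float, d_ps_per_nm_km: float) -> float:
    """Reach at which accumulated dispersion D * L equals the module's CD tolerance."""
    if d_ps_per_nm_km == 0:
        return math.inf  # at the zero-dispersion wavelength CD does not limit reach
    return cd_tolerance_ps_per_nm / abs(d_ps_per_nm_km)


def cd_margin_status(route_km: float, cd_tolerance_ps_per_nm: float,
                     d_ps_per_nm_km: float, near_limit_fraction: float = 0.10) -> str:
    """Classify a route as outside, near, or within the CD-limited reach."""
    reach = cd_limited_reach_km(cd_tolerance_ps_per_nm, d_ps_per_nm_km)
    if math.isinf(reach):
        return "within CD tolerance"
    margin = (reach - route_km) / reach
    if margin < 0:
        return "outside CD tolerance"
    if margin < near_limit_fraction:
        return "near limit: consider dispersion-optimized or coherent optics"
    return "within CD tolerance"


if __name__ == "__main__":
    # Hypothetical 800 ps/nm tolerance on fiber with D = 16 ps/(nm*km): 50 km reach
    for route in (32.0, 47.0, 60.0):
        print(route, "km:", cd_margin_status(route, 800.0, 16.0))
```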

Mixed optics across a link segment

Root cause: one side uses a different long-haul class or wavelength plan than the other (for example, LR vs ER variants, or a module that expects a specific channel grid). The link can “come up” but remain unstable because the two ends differ in launch power, wavelength, receiver sensitivity, and dispersion tolerance.

Solution: match module part numbers and optics classes end-to-end, and confirm wavelength and lane mapping. Use DOM to confirm both sides report expected TX wavelength and bias current.
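The end-to-end comparison can be scripted once you have pulled each side's module inventory and DOM data. This is a sketch. The record fields are hypothetical. The bias limits must come from the module datasheet. The 1 nm wavelength tolerance suits coarse plans but is too loose for DWDM grids.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class EndReport:
    part_number: str
    wavelength_nm: float  # nominal or DOM-reported TX wavelength
    tx_bias_ma: float     # DOM TX bias current


def end_to_end_mismatches(a: EndReport, b: EndReport,
                          bias_limits_ma: Tuple[float, float],
                          wavelength_tol_nm: float = 1.0) -> List[str]:
    """List mismatches between the two ends of a link (empty list = matched)."""
    issues = []
    if a.part_number != b.part_number:
        issues.append("part numbers differ")
    if abs(a.wavelength_nm - b.wavelength_nm) > wavelength_tol_nm:
        issues.append("wavelength plans differ")
    low, high = bias_limits_ma
    for label, end in (("A", a), ("B", b)):
        if not low <= end.tx_bias_ma <= high:
            issues.append(f"end {label} bias outside datasheet range")
    return issues
```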

Temperature drift in remote cabinets

Root cause: elevated module temperature changes laser characteristics and can push the receiver sensitivity or DSP operating margin outside tolerance, especially when dispersion is already close to the edge.

Solution: check the site temperature logs and ensure the optics temperature range is respected. Improve airflow or add cabinet ventilation; then retest BER after stabilization.

Dirty connectors and microbends that mimic dispersion

Root cause: contamination reduces effective optical power and can also distort the received signal quality metrics, which may look similar to dispersion-induced penalties.

Solution: follow a strict cleaning workflow for LC ends (inspect with a scope, clean with approved wipes and solvent as required), then re-measure. If possible, verify with an OTDR to rule out microbend hotspots.

Decision matrix: which chromatic dispersion transceiver option fits your job

Use this matrix to align engineering constraints (reach and dispersion) with operational constraints (compatibility and cost).

| Reader profile | Primary goal | Best option | Why | Watch-outs |
|---|---|---|---|---|
| Cost-sensitive enterprise with known fiber | Meet distance within budget | Direct-detect long-haul, dispersion-aware model | Lower unit cost, simpler deployment | Must verify CD-limited reach and clean connector hygiene |
| Carrier-style long-haul with variable plant | High resilience under dispersion variation | Coherent chromatic dispersion transceiver solution | Stronger DSP compensation for dispersion and impairments | Higher cost and platform compatibility requirements |
| Data center interconnect with strict compatibility | Predictable bring-up and diagnostics | Vendor-supported direct-detect long-haul (matched part numbers) | Better optics policy alignment and DOM behavior | Firmware updates can change acceptance thresholds |

Which Option Should You Choose?

If you have a characterized fiber route and your measured or estimated dispersion budget stays comfortably inside the vendor’s CD-limited reach, choose a dispersion-aware direct-detect chromatic dispersion transceiver to minimize cost and keep operations simple. If your plant is uncertain, your link is near the edge, or you are dealing with frequent re-patching and length changes, choose a coherent solution for maximum dispersion tolerance and operational stability.
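This recommendation can be expressed as a small rule. It is a sketch. The "comfortable" margin of 20 % of the CD-limited reach is a hypothetical threshold to tune against your own risk tolerance. The margin fraction is (CD-limited reach − route length) / CD-limited reach.

```python
def choose_optics_class(plant_characterized: bool, cd_margin_fraction: float,
                        frequent_repatching: bool, comfortable_margin: float = 0.20) -> str:
    """Pick direct-detect only when the plant is known, stable, and well inside CD limits."""
    if plant_characterized and not frequent_repatching and cd_margin_fraction >= comfortable_margin:
        return "direct-detect (dispersion-aware)"
    return "coherent"


if __name__ == "__main__":
    print(choose_optics_class(True, 0.35, False))  # well-characterized route with margin
    print(choose_optics_class(True, 0.05, False))  # near the edge
```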

Next step: map your link budget end-to-end, then document your constraints and your chosen chromatic dispersion mitigation strategy so procurement and engineering decisions stay aligned.

Real-world deployment scenario: dispersion at the edge of a 40G upgrade

In a 3-tier data center leaf-spine topology with 48-port 10G ToR switches, the team planned a 40G uplink between two sites connected by a single-mode fiber bundle. The installed fiber length was 32 km with splice loss under 1.5 dB per 10 km, and the patch panel jumpers were updated during a cabinet refresh, adding roughly 3 dB of extra insertion loss. The initial 40G direct-detect optics powered up and showed nominal RX power, but BER alarms triggered intermittently during peak traffic.

DOM readings showed stable temperature and bias currents, which pointed away from hardware faults. The root cause was dispersion margin. The vendor’s datasheet reach assumed a specific dispersion profile and jumper loss, and the dispersion penalty was already consuming most of the budget. The roughly 3 dB of added jumper loss then pushed the link past its usable margin, even though RX power stayed within range. The short jumpers added negligible dispersion themselves; it was their loss, stacked on the dispersion penalty, that mattered. After switching to a dispersion-optimized chromatic dispersion transceiver model designed for the same wavelength plan (and confirming connector cleanliness), BER stabilized without changing switch configuration.
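A worked margin check shows how extra loss and a dispersion penalty can add up even while RX power reads nominal. It uses three figures from the scenario: the 32 km length, the 1.5 dB per 10 km splice-loss bound, and the roughly 3 dB of added jumper loss. The launch power, receiver sensitivity, fiber attenuation, and dispersion penalty are hypothetical placeholders, not values from this deployment.

```python
def link_margin_db(tx_dbm: float, rx_sensitivity_dbm: float, length_km: float,
                   fiber_db_per_km: float, splice_db_per_10km: float,
                   extra_loss_db: float, dispersion_penalty_db: float) -> float:
    """Power budget minus losses minus dispersion penalty (negative = no margin)."""
    loss = (length_km * fiber_db_per_km
            + length_km / 10.0 * splice_db_per_10km
            + extra_loss_db)
    return tx_dbm - loss - dispersion_penalty_db - rx_sensitivity_dbm


if __name__ == "__main__":
    # Hypothetical: 0 dBm launch, -18 dBm sensitivity, 0.25 dB/km, 2.5 dB CD penalty
    before = link_margin_db(0.0, -18.0, 32.0, 0.25, 1.5, 0.0, 2.5)
    after = link_margin_db(0.0, -18.0, 32.0, 0.25, 1.5, 3.0, 2.5)
    print(f"margin before jumper change: {before:.1f} dB, after: {after:.1f} dB")
```

With these placeholder values, RX power after the change is still above the nominal sensitivity. Once the dispersion penalty is subtracted, however, the margin goes negative, which matches the "nominal RX power, intermittent BER alarms" symptom.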

FAQ

How do I confirm chromatic dispersion is the real issue, not just low power?

Start with RX/TX power and DOM health indicators; if power is within spec but BER or FEC counters worsen, dispersion or equalization limits are likely. Then compare actual fiber length and wavelength plan against the vendor’s CD-limited reach and run a connector inspection to rule out contamination.

Are chromatic dispersion transceivers compatible across different switch brands?

Often they are physically compatible, but operational compatibility depends on optics policy, DOM interpretation, and threshold behavior. Always validate with your specific switch model’s supported optics list and test bring-up in a controlled environment before rolling out widely.

Should I choose direct-detect or coherent for long-haul dispersion?

Choose direct-detect when your fiber plant is well characterized and you are within the published CD tolerance. Choose coherent when you need maximum tolerance for dispersion variation and other impairments, or when you cannot confidently characterize the route.

What fiber wavelength should I plan for when dispersion is the limiting factor?

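Many long-haul dispersion-sensitive designs target the 1550 nm window because fiber attenuation is lowest there and optical amplification (EDFAs) is available. The trade-off is that standard SMF has more dispersion at 1550 nm than near 1310 nm, so the design must handle it through compensation or coherent DSP. Still, you must match the module wavelength and channel plan to your network design and verify vendor guidance for your exact use case.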

Do third-party chromatic dispersion transceivers reduce TCO or increase risk?

They can reduce unit cost, but risk comes from compatibility gaps, firmware interactions, and support response times. For dispersion-critical links, the ROI improves when you standardize on a tested compatible model, keep spares, and verify DOM behavior with your switch platform.

What is the fastest troubleshooting workflow when a link is unstable after optics replacement?

Validate module DOM health, confirm both ends use matched part numbers and wavelength plans, then inspect and clean connectors. If symptoms persist, re-check dispersion budget using actual fiber length and patch cord inventory, and measure BER/FEC counters under controlled traffic.

Author bio: I have deployed and troubleshot long-haul optics in enterprise and carrier-adjacent environments, focusing on dispersion-limited reach, DOM diagnostics, and field-ready fiber verification. My work emphasizes measurable link budgets and operational checklists that reduce rework after cutovers.