A 3-tier data center migration can fail for reasons that have nothing to do with software: optics lane mapping, DOM quirks, and power budgets. This article helps network engineers and field technicians choose between 100G and 400G optical transceivers when upgrading leaf-spine links. You will see a concrete case study with measured optics behavior, plus a selection checklist and troubleshooting guidance rooted in IEEE 802.3 and vendor datasheets.

Problem and challenge: why the upgrade stalled on optics

In a leaf-spine topology, the team planned to increase east-west throughput by moving from 25G to higher-speed uplinks. The original plan used 100G QSFP28 optics from multiple vendors to reduce cost and standardize spares. After adding two new spine pairs, the fabric required higher aggregate capacity per chassis to avoid oversubscription.

They evaluated 400G optics to reduce the number of ports and active transceivers. The challenge: the chosen switches supported 400G only on specific port groups, with strict requirements for transceiver type, DOM interpretation, and link training behavior. During a pilot, some links negotiated at lower rates or showed intermittent CRC errors, forcing a rollback before the cutover window.

Environment specs: what the fiber and switches actually demanded

The environment was a modern data center with SMF backbone and OM4 in the access layer. Leaf switches used 100G uplinks initially, while spines had headroom for 400G once port group licensing and optics compatibility were confirmed.

Fiber plan: 50 m to 120 m SMF for spine-to-spine and 10 m to 40 m OM4 for leaf-to-spine. Power and thermal constraints were real: each optics cage had limited airflow, and the room operated at 23 to 27 C with hot-aisle recirculation.

| Parameter | 100G (QSFP28) | 400G (QSFP-DD / OSFP) |
|---|---|---|
| Typical data rate | 100 Gb/s Ethernet (SR4: 4 x 25G NRZ lanes, about 103.1 Gb/s line rate) | 400 Gb/s Ethernet (SR8: 8 x 50G PAM4 lanes; DR4: 4 x 100G PAM4 lanes) |
| Common short-reach optics | SR4 (4 lanes) | SR8 (8 lanes) or DR4/FR4 variants depending on platform |
| Wavelength band | 850 nm (SR4) | 850 nm (SR8) or 1310 nm region (DR4/FR4) |
| Reach (typical) | Up to 100 m on OM4 (vendor dependent) | Up to 100 m on OM4 for SR8 class (vendor dependent) |
| Connector types | MPO/MTP-12 (SR4); LC duplex only on duplex SMF variants such as LR4 | MPO/MTP (SR8 and DR4) or LC duplex (FR4), platform dependent |
| Power class (order of magnitude) | ~3 to 6 W per transceiver typical | ~8 to 18 W per transceiver typical |
| Operating temperature | Commercial or industrial options; typical 0 to 70 C | Similar bins; verify cage airflow and vendor spec |
| Standards reference | IEEE 802.3 (100G Ethernet PHY) | IEEE 802.3 (400G Ethernet PHY) |
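
The reach row can be turned into a quick sanity check against the fiber plan. The sketch below is illustrative only: the `MAX_REACH_M` table and the optic class labels are assumptions made for this example (the 100 m OM4 figures come from the table above, and 500 m is the nominal DR4 reach on SMF), so substitute the validated values from your own datasheets and switch vendor.

```python
# Hypothetical reach limits in metres, keyed by (optic class, fiber type).
# Replace these with the validated figures from your datasheets.
MAX_REACH_M = {
    ("100G-SR4", "OM4"): 100,
    ("400G-SR8", "OM4"): 100,
    ("400G-DR4", "SMF"): 500,
}


def link_within_reach(optic: str, fiber: str, length_m: float) -> bool:
    """Return True if the optic supports this fiber type and run length."""
    limit = MAX_REACH_M.get((optic, fiber))
    # An unknown combination (for example SR8 on SMF) is treated as unsupported.
    return limit is not None and length_m <= limit


if __name__ == "__main__":
    # Run lengths taken from the fiber plan described earlier.
    planned = [
        ("leaf-to-spine", "400G-SR8", "OM4", 40),
        ("spine-to-spine", "400G-SR8", "SMF", 120),
        ("spine-to-spine", "400G-DR4", "SMF", 120),
    ]
    for name, optic, fiber, length in planned:
        verdict = "ok" if link_within_reach(optic, fiber, length) else "NOT SUPPORTED"
        print(f"{name}: {optic} over {length} m {fiber}: {verdict}")
```

The point of the lookup is that the 120 m SMF spine-to-spine runs cannot be served by SR-class optics at all, regardless of length, so those links need a single-mode optic class.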

Chosen solution: when 100G won and when 400G made sense

The team selected a hybrid approach rather than a single-speed mandate. 100G remained for leaf-to-spine where distances were short and port density was sufficient. 400G was used only on spine uplinks where the switch offered the correct port grouping and where reducing the number of optics improved manageability.

For 100G SR on OM4, 10G-era parts such as Cisco SFP-10G-SR equivalents were not relevant at this speed, so the team relied on QSFP28 SR4-class modules (examples in the market include Finisar and FS.com SR4 families). For 400G SR8 over OM4, they tested QSFP-DD or OSFP-class optics that matched the platform’s breakout and lane-mapping expectations. In lab validation, they checked every candidate part number against the switch vendor compatibility list before purchasing in volume. Parts from the same 850 nm catalog families are not interchangeable across speeds: Finisar FTLX8571D3BCL, for example, is a 10G SFP+ SR module, not a 100G or 400G part.

Pro Tip: Treat DOM behavior as part of interoperability, not “nice to have.” In field deployments, we have seen identical optics models report temperature and bias currents differently across firmware revisions, which can trip alarm thresholds or cause cautious link retry behavior. Always baseline DOM telemetry from a known-good module before scaling.

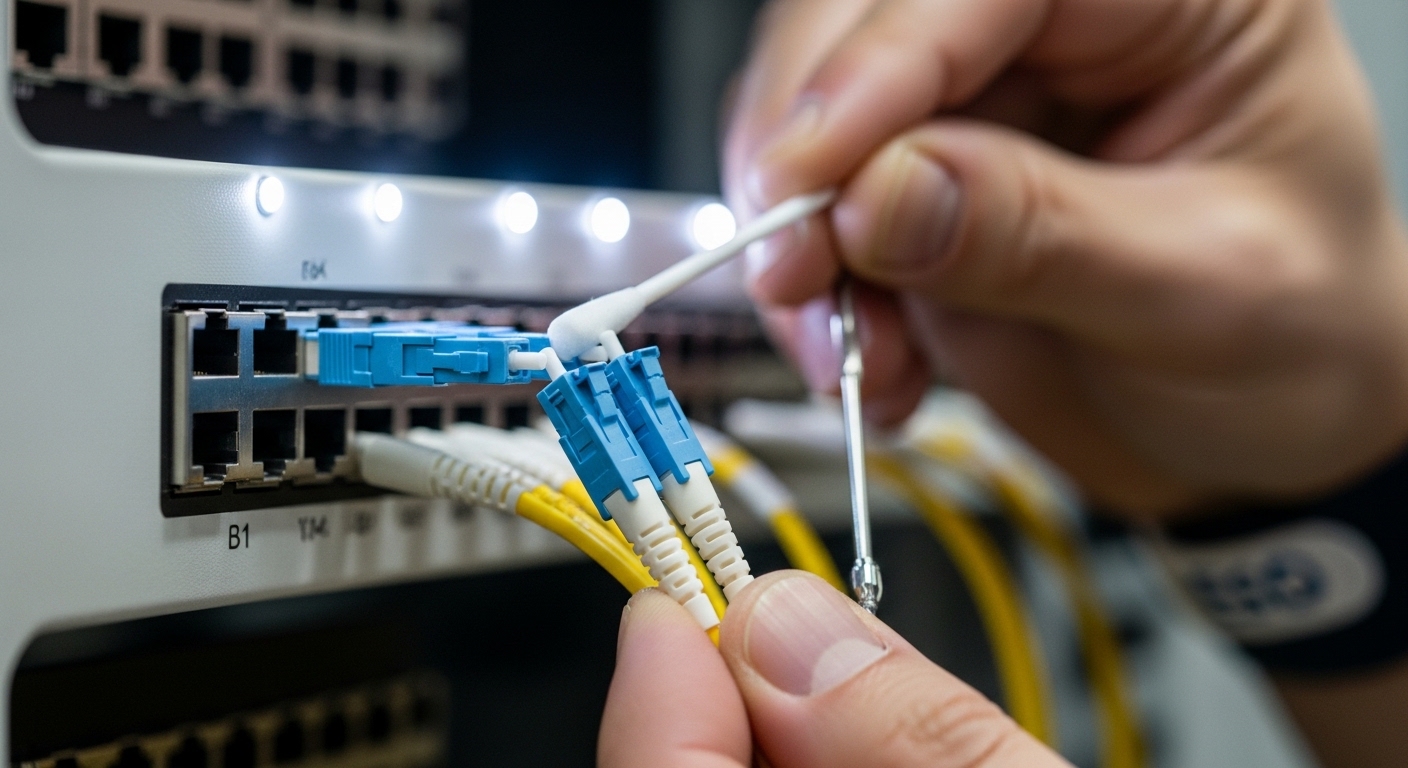

Implementation steps: how the team avoided lane-mapping surprises

Validate port capability and lane mapping

They confirmed which cages supported 400G directly versus which required specific breakout modes. On many platforms, 400G uses different internal lane groupings than 100G, and optics must match the expected interface type (for example, QSFP-DD vs OSFP). They also checked whether the switch required transceiver vendor IDs or allowed third-party optics under the current software release.
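
A pre-insertion check of this kind can be written down as data plus a small function. The following is a minimal sketch, not any vendor's API: `PortGroup`, `Module`, their fields, and the part numbers are all hypothetical names invented for illustration, and the port data would in practice be transcribed by hand from the switch documentation and compatibility list.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class PortGroup:
    """Hypothetical record of what a cage group supports, per vendor docs."""
    name: str
    accepted_form_factors: FrozenSet[str]
    speeds_gbps: FrozenSet[int]
    third_party_allowed: bool


@dataclass(frozen=True)
class Module:
    """Hypothetical record of a candidate transceiver."""
    part_number: str
    form_factor: str
    speed_gbps: int
    on_compat_list: bool


def precheck(port: PortGroup, module: Module) -> List[str]:
    """Return a list of human-readable problems; an empty list means go."""
    problems = []
    if module.form_factor not in port.accepted_form_factors:
        problems.append(f"{module.form_factor} not accepted by {port.name}")
    if module.speed_gbps not in port.speeds_gbps:
        problems.append(f"{module.speed_gbps}G not enabled on {port.name}")
    if not module.on_compat_list and not port.third_party_allowed:
        problems.append(f"{module.part_number} is unlisted and third-party optics are blocked")
    return problems


if __name__ == "__main__":
    spine_group = PortGroup("spine-pg1", frozenset({"QSFP-DD"}), frozenset({100, 400}), False)
    candidate = Module("EXAMPLE-OSFP-SR8", "OSFP", 400, False)
    for problem in precheck(spine_group, candidate):
        print("BLOCKER:", problem)
```

Returning every problem rather than stopping at the first keeps the field technician from discovering the second blocker only after fixing the first.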

Confirm fiber type, connector geometry, and polarity

For SR8, MPO/MTP polarity is often the hidden failure mode. They inspected patch cords, verified polarity labels, and matched transmit/receive orientation to the switch documentation. For OM4, they measured end-to-end optical power and ensured no patch panel transposition occurred during labeling.
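
The polarity reasoning can be checked on paper, or with a few lines of code, before anyone touches a patch panel. The sketch below is a simplified model and rests on stated assumptions: each cable segment is treated as a pure position map (Type A straight-through, Type B reversed), adapter keying is ignored, and the transmit and receive position sets are example values that must be taken from the actual module documentation.

```python
from typing import Callable, Iterable, Sequence

# A segment maps a fiber position at one end to the position at the other end.
Segment = Callable[[int, int], int]


def type_a(pos: int, n: int) -> int:
    """Straight-through trunk: position 1 arrives at position 1."""
    return pos


def type_b(pos: int, n: int) -> int:
    """Reversed trunk: position 1 arrives at position n."""
    return n + 1 - pos


def far_end_position(pos: int, segments: Sequence[Segment], n: int) -> int:
    """Follow one fiber position through every segment in the channel."""
    for segment in segments:
        pos = segment(pos, n)
    return pos


def polarity_ok(tx: Iterable[int], rx: Iterable[int],
                segments: Sequence[Segment], n: int = 12) -> bool:
    """True if every transmit position lands on a receive position."""
    rx_set = set(rx)
    return all(far_end_position(p, segments, n) in rx_set for p in tx)


if __name__ == "__main__":
    # Assumed 12-fiber parallel layout: Tx on 1-4, Rx on 9-12.
    tx, rx = range(1, 5), range(9, 13)
    print("one Type B segment:", polarity_ok(tx, rx, [type_b]))
    print("two Type B segments:", polarity_ok(tx, rx, [type_b, type_b]))
```

In this model an even number of reversing segments cancels out, which is one way a rework that adds a single extra patch cord can silently break a link that previously worked.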

Run link validation with error counters

Before production, they exercised each link while monitoring interface counters at the switch and optics level. They looked for CRC increments, FEC corrections, and link flaps during warm-up. The pilot acceptance threshold was strict: no CRC bursts over a 24-hour window and stable PHY retrain behavior after power-cycle events.
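
An acceptance rule like this is easy to encode so that every link is judged the same way. The sketch below is one possible reading of the threshold, under assumptions: `CounterSample` and its fields are hypothetical names for counters you would collect from the switch, "no CRC bursts" is interpreted strictly as zero CRC increments, and FEC corrections are reported but not treated as a failure, since corrected codewords are normal on FEC-protected links.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class CounterSample:
    """Hypothetical snapshot of cumulative interface counters."""
    t_hours: float
    crc_errors: int
    fec_corrected: int
    link_flaps: int


def soak_test_passes(samples: List[CounterSample], min_hours: float = 24.0) -> bool:
    """Pass only if the window is long enough and CRC/flap counters never move."""
    if len(samples) < 2:
        return False
    if samples[-1].t_hours - samples[0].t_hours < min_hours:
        return False
    # Compare consecutive samples so a burst in any interval is caught.
    for prev, cur in zip(samples, samples[1:]):
        if cur.crc_errors != prev.crc_errors:
            return False
        if cur.link_flaps != prev.link_flaps:
            return False
    return True


def fec_corrected_delta(samples: List[CounterSample]) -> int:
    """FEC corrections over the window, for trending rather than pass/fail."""
    return samples[-1].fec_corrected - samples[0].fec_corrected


if __name__ == "__main__":
    window = [
        CounterSample(0.0, 0, 1_000, 0),
        CounterSample(12.0, 0, 5_000, 0),
        CounterSample(24.0, 0, 9_000, 0),
    ]
    print("pass:", soak_test_passes(window))
    print("FEC corrected in window:", fec_corrected_delta(window))
```

Tracking the FEC delta separately is a deliberate choice: a rising correction rate on an otherwise clean link is a useful early warning for the thermal and polarity issues discussed later.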

Measured results: what changed after the cutover

After migrating selected spine uplinks to 400G, the fabric achieved the intended aggregate capacity without increasing oversubscription. In a rollout of 96 uplinks, they observed a reduction in active optics count on those paths by roughly half compared with an all-100G approach, which simplified spares inventory.

Power draw increased per transceiver, but the total system power did not scale linearly because fewer ports were active. Over the first month, optics-related faults dropped: the team recorded fewer “link down” events after standardizing on a single DOM-compatible optics family for each cage type. However, they also saw that thermally marginal cages caused higher error rates on 400G SR8 optics, reinforcing the need for airflow verification.
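
A short worked example shows why higher per-module power does not have to mean higher total optics power. The wattages below are hypothetical values picked from inside the ranges in the comparison table, and the 1.6 Tb/s capacity target is an assumption for illustration; they are not measurements from this deployment.

```python
import math


def optics_needed(capacity_gbps: int, speed_gbps: int) -> int:
    """Number of transceivers required to reach the target capacity."""
    return math.ceil(capacity_gbps / speed_gbps)


def total_power_w(capacity_gbps: int, speed_gbps: int, watts_per_module: float) -> float:
    """Total optics power for the target capacity at a given module wattage."""
    return optics_needed(capacity_gbps, speed_gbps) * watts_per_module


if __name__ == "__main__":
    capacity = 1600  # Gb/s, hypothetical aggregate target
    for label, speed, watts in [("100G", 100, 4.0), ("400G", 400, 12.0)]:
        count = optics_needed(capacity, speed)
        power = total_power_w(capacity, speed, watts)
        print(f"{label}: {count} modules, {power:.0f} W total, {watts:.0f} W per cage")
```

With these assumed figures the 400G option draws less in total but concentrates three times the heat in each cage, which is consistent with the thermally marginal cages being the ones that showed errors.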

Common mistakes and troubleshooting tips

1) Port group mismatch

Root cause: 400G optics installed in a cage that requires a different PHY mode or is not licensed for the expected interface type.

Solution: verify switch documentation for supported transceiver form factor and port grouping; confirm software release compatibility before field insertion.

2) MPO polarity inversion on SR8

Root cause: transmit and receive fibers swapped in MPO patching, especially during rework.

Solution: use a polarity checklist, verify with a light source and power meter, and test with a known-good polarity map before blaming the optics.

3) DOM alarm thresholds causing link retries

Root cause: third-party optics report DOM fields differently, triggering vendor-specific “caution” thresholds.

Solution: baseline DOM telemetry from a known-good module, adjust alarms per vendor guidance, and keep optics firmware within the tested matrix.
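
Baselining is most useful when the comparison is mechanical. The sketch below assumes DOM readings have already been collected into plain dictionaries; the field names, tolerances, and sample values are all hypothetical and would need to come from your own known-good module and vendor guidance.

```python
from typing import Dict


def dom_deviations(baseline: Dict[str, float],
                   reading: Dict[str, float],
                   tolerances: Dict[str, float]) -> Dict[str, float]:
    """Return the fields whose delta from baseline exceeds its tolerance."""
    flagged = {}
    for field, tolerance in tolerances.items():
        delta = reading[field] - baseline[field]
        if abs(delta) > tolerance:
            flagged[field] = delta
    return flagged


if __name__ == "__main__":
    # Hypothetical values from a known-good module of the same family.
    baseline = {"temperature_c": 45.0, "tx_bias_ma": 7.0, "rx_power_dbm": -2.0}
    # Hypothetical allowed drift per field before a human should look.
    tolerances = {"temperature_c": 10.0, "tx_bias_ma": 1.0, "rx_power_dbm": 2.0}
    candidate = {"temperature_c": 58.5, "tx_bias_ma": 7.5, "rx_power_dbm": -5.5}
    for field, delta in dom_deviations(baseline, candidate, tolerances).items():
        print(f"{field}: {delta:+.1f} from baseline")
```

Comparing against a baseline from the same optics family and software release, rather than against absolute limits, is what separates a module that reports differently from one that is actually degrading.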

4) Thermal starvation during high-density 400G

Root cause: higher power per module plus restricted airflow leads to elevated laser bias and transient error bursts.

Solution: measure cage inlet temperatures, ensure fan curves meet vendor airflow recommendations, and consider industrial temp-rated optics only if validated by the platform vendor.

Selection criteria checklist: deciding between 100G vs 400G

- Distance and fiber type: confirm OM4 vs SMF reach requirements and connector constraints (LC vs MPO/MTP).

- Switch compatibility: use the vendor transceiver compatibility list for both 100G and 400G cages.

- DOM and alarm behavior: verify DOM telemetry fields and threshold tuning on your software version.

- Operating temperature and airflow: validate cage inlet temperature margins, not just room temperature.

- Budget and TCO: compare module cost plus expected failure rate and spare stocking requirements.

- Vendor lock-in risk: assess how many models are acceptable per cage and how often firmware updates change compatibility.

Cost and ROI note: realistic pricing and total cost thinking

In typical enterprise and colocation procurement, 100G QSFP28 SR4 modules are often priced lower than 400G SR8 optics, but 400G can reduce the number of ports and optics needed for the same aggregate throughput. For many teams, the ROI comes from operational simplicity: fewer active links to monitor, smaller patching footprints on spine uplinks, and reduced licensing complexity when supported by the switch.

Real-world TCO depends on failure domains and spares strategy. A conservative approach is to plan spares at least for each optics family and each cage type, and to factor in potential incompatibility costs when switching vendors mid-cycle. OEM modules usually cost more, but their DOM behavior and support path can reduce downtime during incidents.

FAQ: 100G vs 400G optics in buyer terms

When should I choose 100G instead of 400G?

Choose 100G when your distances are within the validated SR/DR reach for your fiber plant and your switch has ample 100G port density. It is also the safer option when you have strict airflow limits or mixed-vendor optics already deployed.

Will 400G SR8 work over OM4 in a typical data center?

Often yes, but only within the vendor and switch validated reach and under correct MPO polarity. Verify the exact optics class and platform support; do not assume all QSFP-DD or OSFP 400G SR8 modules behave identically.

Do I need to worry about IEEE 802.3 compliance?

Yes, but compliance is only the baseline. In practice, interoperability issues arise from PHY implementation details, DOM telemetry, and switch-specific policy checks, so you still need the vendor compatibility matrix and lab validation.

What causes frequent link flaps after installing new 400G optics?

Common causes include port group mismatch, MPO polarity inversion, and thermal starvation. Start by checking switch port state, then confirm fiber polarity with a controlled test, and finally measure cage inlet temperatures during link load.

Are third-party 400G optics safe for production?

They can be, but only if they are explicitly supported by your switch vendor and tested under your software release. Treat DOM telemetry and alarm thresholds as part of the acceptance test, not as an afterthought.

How do I plan spares for 100G vs 400G?

For 100G, spares are usually easier to standardize because QSFP28 families are widely supported. For 400G, spares must match both cage type and optics form factor, and you should keep at