Next-gen edge computing lives or dies on latency, bandwidth headroom, and link reliability at the site. This article helps network architects and field engineers select optical transceivers that meet throughput targets while surviving real-world thermal and power constraints. You will see how to map fiber optics choices to edge workloads like video analytics, industrial telemetry, and distributed inference.

Why edge sites demand optical transceivers, not just “more ports”

At the edge, compute is often colocated with storage and accelerators, while upstream connectivity must scale without saturating aggregation uplinks. Optical transceivers convert electrical SerDes signals into optical signals, enabling longer reach than copper at the same data rate. In practice, this can reduce the number of intermediate switches and save power by avoiding copper ports that can draw 3 W to 5 W each in typical 10G/25G copper scenarios. Standards alignment matters: Ethernet PHY behavior, including link bring-up and optical power limits, follows the IEEE 802.3 specifications, as reflected in vendor datasheets and compliance notes (IEEE 802.3).

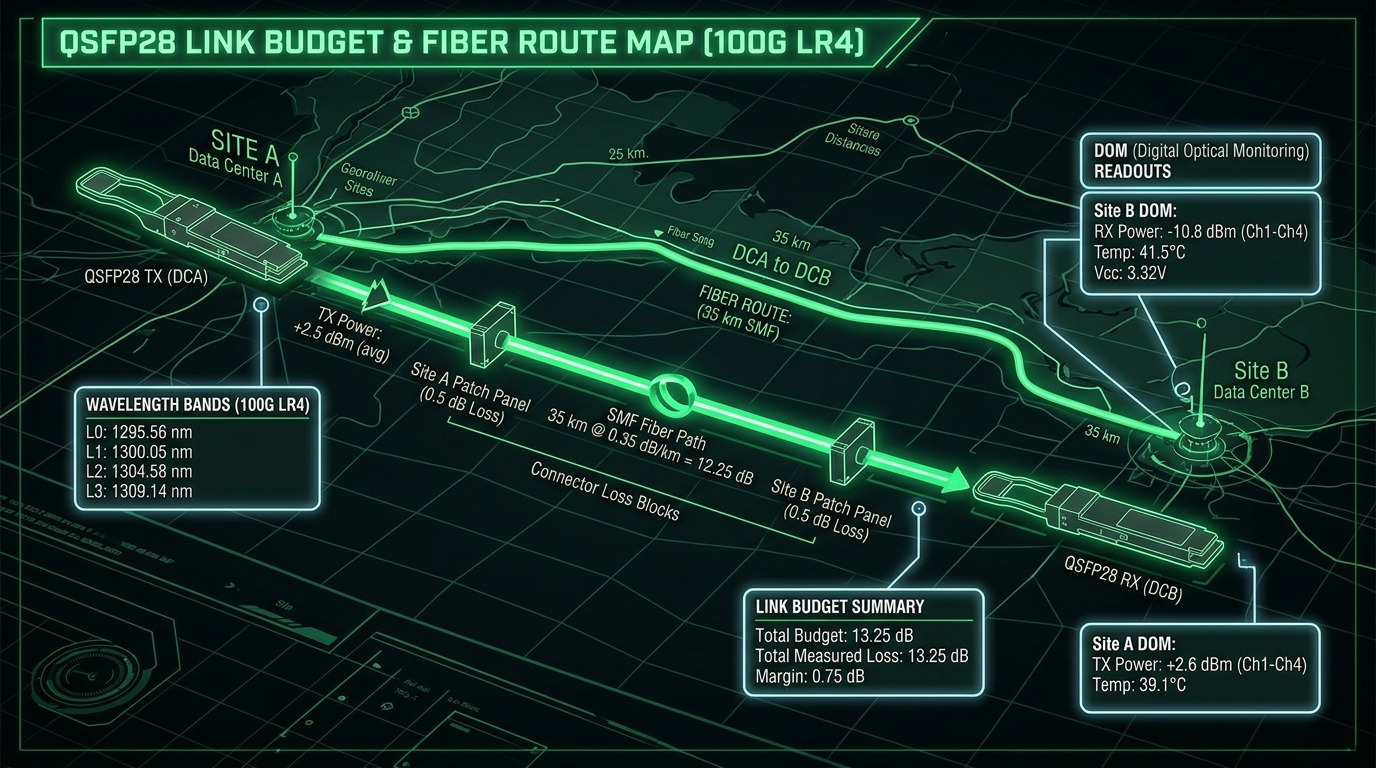

Operationally, edge links must tolerate dust ingress, vibration, and temperature swings, including those caused by enclosure heaters. Optical modules with documented operating ranges and Digital Optical Monitoring (DOM) telemetry let you detect bias current drift, receive power degradation, and optical faults before the site goes dark. In many deployments, engineers run remote monitoring via SNMP polling or controller telemetry and trigger service tickets when thresholds are breached.

Pro Tip: If you cannot graph DOM receive power (Rx power) over time, you are flying blind. Field experience shows that “mysterious” edge link flaps often correlate with slow Rx power decline from connector contamination; DOM trends reveal this weeks before outright link loss.
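As a rough illustration of the trend analysis described above, the sketch below fits a least-squares line to periodic Rx power readings and projects when the link would reach a chosen threshold. The function names, the sample readings, and the -9.0 dBm threshold are all hypothetical; in practice the threshold would come from the module datasheet or its DOM alarm settings.

```python
from statistics import mean


def rx_power_slope(samples):
    """Least-squares slope (dB per day) of (day, rx_power_dbm) samples."""
    days = [day for day, _ in samples]
    powers = [power for _, power in samples]
    day_mean, power_mean = mean(days), mean(powers)
    spread = sum((day - day_mean) ** 2 for day in days)
    if spread == 0:
        raise ValueError("need samples from at least two distinct days")
    covariance = sum(
        (day - day_mean) * (power - power_mean) for day, power in samples
    )
    return covariance / spread


def days_until_threshold(samples, threshold_dbm):
    """Project days from the latest sample until Rx power reaches the threshold.

    Returns None when the trend is flat or improving, and 0.0 when the latest
    reading is already at or below the threshold.
    """
    _, latest_power = samples[-1]
    if latest_power <= threshold_dbm:
        return 0.0
    slope = rx_power_slope(samples)
    if slope >= 0:
        return None
    return (threshold_dbm - latest_power) / slope


# Hypothetical weekly readings: (day, Rx power in dBm).
readings = [(0, -3.0), (7, -3.5), (14, -4.0), (21, -4.5)]
print(round(rx_power_slope(readings), 4))
print(round(days_until_threshold(readings, threshold_dbm=-9.0), 1))
```

A linear fit is a deliberate simplification: contamination can degrade a link in steps rather than smoothly, so the projection is best treated as a prompt to inspect the connector, not as a failure date.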

Transceiver options mapped to next-gen edge computing bandwidth and reach

Edge designs commonly use 10G, 25G, or 100G uplinks depending on workload burstiness and redundancy requirements. The key variables are wavelength (e.g., 850 nm multimode vs 1310/1550 nm single-mode), optical reach, connector type, and power consumption. For dense edge racks, pluggable form factors like SFP+ and SFP28 for 10G/25G, or QSFP28/CFP2 for higher rates, help control footprint and simplify spares logistics. Vendors such as Cisco, Finisar, and FS.com publish module families with explicit compatibility guidance and temperature ratings; always validate against the exact switch vendor and optical cage specification.

| Spec | 10G SFP+ SR (850 nm MMF) | 25G SFP28 SR (850 nm MMF) | 100G QSFP28 LR4 (1310 nm SMF) |
|---|---|---|---|
| Line rate | 10.3125 Gb/s | 25.78125 Gb/s | 103.125 Gb/s (4 x 25.78125 Gb/s) |
| Wavelength | 850 nm | 850 nm | 4 WDM lanes near 1310 nm |
| Reach class | Up to 300 m on OM3, 400 m on OM4 | Up to 70 m on OM3, 100 m on OM4 | Up to 10 km typical |
| Connector | LC duplex | LC duplex | LC duplex |
| Power (typ.) | ~1 W to 1.5 W | ~1.5 W to 2.5 W | ~3 W to 5 W |
| Operating temperature | 0°C to 70°C (typical) or extended options | -5°C to 70°C typical; extended available | -5°C to 70°C typical; extended available |
| Common module examples | Cisco SFP-10G-SR, Finisar FTLX8571D3BCL | FS.com SFP-25G-SR (OM4 variants) | Finisar/compatible QSFP28 LR4 families |

For many next-gen edge computing deployments, SR on OM4 multimode is the “rack-to-hub” workhorse when fiber distance is short and installation costs matter. When sites require longer backhaul, LR4 on single-mode fiber becomes the pragmatic choice: it preserves optical budget over 5 km to 10 km without intermediate active equipment. Standard single-mode fiber for such runs is described in ITU-T G.652.
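The optical budget reasoning above can be sketched as a simple loss calculation. The example below is a minimal sketch, not a substitute for a measured link: the function names are hypothetical, and the attenuation, connector, splice, transmit power, and receiver sensitivity figures are placeholder assumptions that should be replaced with values from the fiber test report and the module datasheet.

```python
def link_loss_db(length_km, fiber_db_per_km, connector_pairs,
                 db_per_connector_pair, splices=0, db_per_splice=0.1):
    """Estimated end-to-end passive loss of a fiber link, in dB."""
    return (length_km * fiber_db_per_km
            + connector_pairs * db_per_connector_pair
            + splices * db_per_splice)


def margin_db(tx_min_dbm, rx_sensitivity_dbm, loss_db):
    """Remaining margin after subtracting link loss from the power budget."""
    power_budget_db = tx_min_dbm - rx_sensitivity_dbm
    return power_budget_db - loss_db


# Assumed values for an 8 km single-mode backhaul (all placeholders).
loss = link_loss_db(
    length_km=8.0,
    fiber_db_per_km=0.4,        # assumed attenuation near 1310 nm
    connector_pairs=4,
    db_per_connector_pair=0.5,  # assumed worst-case mated pair loss
    splices=2,
)
margin = margin_db(tx_min_dbm=-4.0, rx_sensitivity_dbm=-10.0, loss_db=loss)
print(f"loss {loss:.1f} dB, margin {margin:.1f} dB")
```

With these assumed inputs the margin is under 1 dB, which is the kind of result that should trigger a measured loss test before committing to the module.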

Real-world next-gen edge computing deployment scenario

Consider a 3-tier, data-center-like topology extended to the edge: 48-port 25G ToR switches at 12 remote sites connect to an aggregation pair at each site boundary. Each ToR serves industrial gateways and GPU inference nodes, producing bursty east-west traffic for model updates and telemetry. Engineers provision 2 x 25G uplinks per ToR toward a site aggregation switch, using SR optics over OM4 for intra-building runs of 70 m to 120 m. Because 25GBASE-SR is specified for 100 m on OM4, the longest of these runs need a verified loss budget or an extended-reach module. For the site-to-core backhaul, they use QSFP28 LR4 over single-mode fiber to reach 8 km while maintaining deterministic failover via dual-homed routing and fast convergence.
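The uplink sizing in this scenario can be checked with a quick oversubscription calculation. This is a worked sketch that assumes all 48 access ports are populated at 25G, which the scenario does not state; the function name is hypothetical.

```python
def oversubscription_ratio(access_ports, access_gbps, uplinks, uplink_gbps):
    """Ratio of total access capacity to total uplink capacity."""
    if uplinks <= 0:
        raise ValueError("at least one uplink is required")
    return (access_ports * access_gbps) / (uplinks * uplink_gbps)


# Scenario figures: 48 x 25G access ports, 2 x 25G uplinks per ToR.
normal = oversubscription_ratio(48, 25, 2, 25)
degraded = oversubscription_ratio(48, 25, 1, 25)  # one uplink failed
print(f"normal {normal:.0f}:1, one uplink down {degraded:.0f}:1")
```

A ratio this high is only workable when traffic is bursty and most ports sit idle most of the time, so it is worth re-running with the actual number of active ports and their sustained rates.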

Operationally, they enable DOM-based monitoring to track receive power and laser bias current, then enforce a maintenance window when Rx power drops toward vendor-specified thresholds. In one rollout, this approach reduced unexpected link downtime by catching contamination-related degradation early; the primary intervention was connector cleaning and patch replacement rather than module swaps.
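A threshold check of this kind can be sketched as follows. The class and function names are hypothetical and the threshold values are placeholders; real modules report their own warning and alarm thresholds through DOM, and those should be used instead.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thresholds:
    """Warning and alarm limits for one DOM value, such as Rx power in dBm."""
    low_alarm: float
    low_warning: float
    high_warning: float
    high_alarm: float


def classify(value, limits):
    """Return 'alarm', 'warning', or 'ok' for a single DOM reading."""
    if value <= limits.low_alarm or value >= limits.high_alarm:
        return "alarm"
    if value <= limits.low_warning or value >= limits.high_warning:
        return "warning"
    return "ok"


# Placeholder Rx power limits in dBm (assumed, not from any datasheet).
rx_limits = Thresholds(low_alarm=-12.0, low_warning=-10.0,
                       high_warning=0.0, high_alarm=2.0)

for reading in (-4.5, -10.5, -13.0):
    print(reading, classify(reading, rx_limits))
```

Scheduling the maintenance window on the "warning" state, rather than waiting for "alarm", is what leaves time for cleaning before the link drops.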

Selection criteria and decision checklist for optical modules

Engineers typically evaluate the following in order, because edge outages are expensive and spares logistics are constrained:

- Distance and fiber type: OM3/OM4 multimode versus OS2 single-mode; verify actual installed link loss, not just “reach” marketing.

- Required data rate and FEC behavior: confirm which FEC mode the switch port and module expect (for example, RS-FEC on 25G SR links) and that both ends of the link match; validate with the exact switch model.

- Switch compatibility and optics cage support: test with the vendor’s compatibility matrix; some cages have stricter electrical characteristics.

- DOM and monitoring integration: choose modules that expose Rx power and temperature reliably; ensure your management plane supports threshold alerts.

- Operating temperature and enclosure airflow: edge cabinets often exceed 40°C; select extended-temperature modules when needed.

- Budget and lock-in risk: OEM modules can cost more, but third-party modules may introduce intermittent compatibility issues if timing or calibration differs.

- Connector hygiene and installation practices: plan for LC cleaning tools, inspection microscope checks, and patch cord replacement policy.
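The first checklist item, matching distance and fiber type to a module class, can be sketched as a lookup. The reach figures below are nominal values for the three module classes discussed in this article, and the 10% headroom factor is an arbitrary assumption; neither replaces a measured link loss or the vendor's stated reach.

```python
# Nominal reach in meters by (module class, fiber type); illustrative only.
NOMINAL_REACH_M = {
    ("10G SFP+ SR", "OM3"): 300,
    ("10G SFP+ SR", "OM4"): 400,
    ("25G SFP28 SR", "OM3"): 70,
    ("25G SFP28 SR", "OM4"): 100,
    ("100G QSFP28 LR4", "OS2"): 10_000,
}


def candidate_modules(fiber_type, distance_m, headroom=0.9):
    """List module classes whose derated nominal reach covers the distance."""
    return sorted(
        module
        for (module, fiber), reach_m in NOMINAL_REACH_M.items()
        if fiber == fiber_type and distance_m <= reach_m * headroom
    )


print(candidate_modules("OM4", 70))
print(candidate_modules("OM4", 120))
print(candidate_modules("OS2", 8_000))
```

An empty result is a signal to revisit the fiber plant or module class, not proof that no option exists; extended-reach variants fall outside this simple table.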

Common pitfalls and troubleshooting tips at the edge

Below are frequent failure modes engineers hit when deploying next-gen edge computing optical links:

- Pitfall: “Link up” but high error counters

Root cause: marginal optical budget due to aged patch cords or overlooked insertion loss.

Solution: measure end-to-end loss with a certified meter, then compare against the vendor’s optical budget; replace worst patch cords.

- Pitfall: Intermittent flaps after maintenance

Root cause: connector contamination or fiber end-face micro-scratches from reinsertions.

Solution: clean with an approved LC cleaning workflow and inspect with a fiber scope; re-seat carefully and avoid repeated hot swapping beyond supported guidance.

- Pitfall: Works on one switch, fails on another

Root cause: cage electrical tolerances and vendor-specific calibration for pluggable optics.

Solution: validate against the specific switch vendor/module compatibility list; run a controlled A/B test with identical fiber and patch cords.

- Pitfall: Unexpected thermal throttling or DOM warnings

Root cause: insufficient airflow, blocked vents, or modules operating near upper temperature limits.

Solution: add or recalibrate airflow paths, set cabinet temperature targets, and select extended-temperature optics if the enclosure routinely exceeds thresholds.
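For the first pitfall, one way to quantify "high error counters" is to compare two counter snapshots and compute an error ratio over the interval. This is a minimal sketch: the function name and the snapshot layout are hypothetical, and it assumes the frame counter includes errored frames, which varies by platform.

```python
def frame_error_ratio(previous, current):
    """Error ratio between two (rx_frames, rx_errors) counter snapshots.

    Assumes rx_frames counts all received frames, including errored ones.
    """
    frames = current[0] - previous[0]
    errors = current[1] - previous[1]
    if frames < 0 or errors < 0:
        raise ValueError("counter reset or wrap between polls")
    if frames == 0:
        return 0.0
    return errors / frames


# Hypothetical snapshots taken a few minutes apart.
earlier = (1_000_000, 10)
later = (3_000_000, 210)
print(frame_error_ratio(earlier, later))
```

Tracking the ratio per interval, rather than the raw cumulative counter, makes it easier to tell an old burst of errors from an ongoing marginal link.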

Cost and ROI: what to budget for next-gen edge computing optics

Typical street pricing varies by vendor and temperature grade, but rough planning ranges are useful. OEM 10G SR SFP+ optics often land around $50 to $120 each, while 25G SFP28 SR modules may be $80 to $200. QSFP28 LR4 100G optics can be $400 to $900 depending on reach and brand. TCO should include module replacement cycles, field labor, cleaning consumables, and downtime risk. In many edge programs, ROI improves when DOM telemetry and connector hygiene processes prevent repeated truck rolls; the cost of one avoided visit typically outweighs the premium for better monitoring and compatibility.
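The truck-roll argument can be made concrete with a break-even estimate. Every number below is a hypothetical planning input, including the per-module premium, the module count, and the cost of a site visit; substitute figures from your own program.

```python
def breakeven_visits(premium_per_module, module_count, cost_per_visit):
    """Number of avoided site visits needed to pay back the optics premium."""
    if cost_per_visit <= 0:
        raise ValueError("cost_per_visit must be positive")
    return (premium_per_module * module_count) / cost_per_visit


# Assumed inputs: $40 premium per module, 24 modules, $600 per truck roll.
visits = breakeven_visits(premium_per_module=40, module_count=24,
                          cost_per_visit=600)
print(f"break-even after {visits:.1f} avoided visits")
```

This ignores downtime cost entirely, so it understates the benefit wherever an outage is more expensive than the visit that fixes it.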