Open RAN deployments fail in predictable ways: the radios light up, but fronthaul links flap, BER rises, or the transport team discovers that the transceiver is electrically “compatible” while optically marginal. This guide helps field engineers and radio access network integrators choose the right optical networking transceivers for common Open RAN fronthaul and midhaul layouts, with measurable checks for reach, wavelength, power budget, and DOM behavior. You will get a step-by-step implementation plan, a spec comparison table for typical SFP28 and QSFP28 optics, and a troubleshooting section covering the top failure modes I have personally seen in staged cabinet deployments. Update date: 2026-05-01.

Prerequisites for optical networking transceiver selection in Open RAN

Before you touch inventory, confirm the physical and logical constraints of your Open RAN site. In practice, the biggest outage risk comes from assuming “10G works” without validating link budget, connector cleanliness, and transceiver diagnostics behavior under your actual temperature envelope. Collect the device data sheets for your DU and CU, plus the exact switch or aggregation device models that terminate the fronthaul. Then verify fiber plant details: core type, end-to-end length, patch panel loss, and any splitters or MPO fanouts.

Minimum inputs you should have in hand:

- DU/CU fronthaul interface type (often Ethernet, sometimes eCPRI over Ethernet) and expected data rate per link (10G/25G/40G/100G).

- Switch/line card model and the transceiver form factor it supports (SFP28, SFP+, QSFP28, QSFP-DD, etc.).

- Fiber type (OM3/OM4/OS2), expected reach class, and measured end-to-end attenuation at operational wavelengths.

- Transceiver diagnostic requirements: DOM presence and how your network management reads it (I2C/MDIO, platform-specific tooling).

- Environmental range inside the radio room and along the fiber route (especially if you run bundle trays near HVAC exhaust).

Step-by-step implementation guide: selecting the right optical networking transceivers

This is the workflow I use during staged rollouts where you cannot afford “try a module and see.” The goal is to eliminate optical margin surprises and platform incompatibility before any fiber is permanently re-terminated. Each step includes an expected outcome so you can gate the project and move forward confidently.

Map Open RAN traffic to transceiver data rate and lane format

Start with the interface rate you will actually deploy. Many Open RAN rollouts start with 10GBASE-SR or 10GBASE-LR for early midhaul or limited fronthaul, then migrate to 25G to reduce oversubscription. If your DU uses higher density, you may need QSFP28 or even QSFP-DD, depending on the switch. Confirm whether your target optics are single-lane (SFP+/SFP28) or multi-lane (QSFP28, typically 4 x 25G lanes), because on multi-lane optics link errors and optical power have to be interpreted per lane, not just per port.

Expected outcome: a short list of candidate form factors and line rates (example: 25G optics in SFP28, or 10G optics in SFP+), aligned with the DU and switch capability matrix.

Choose wavelength and reach class based on fiber type and measured attenuation

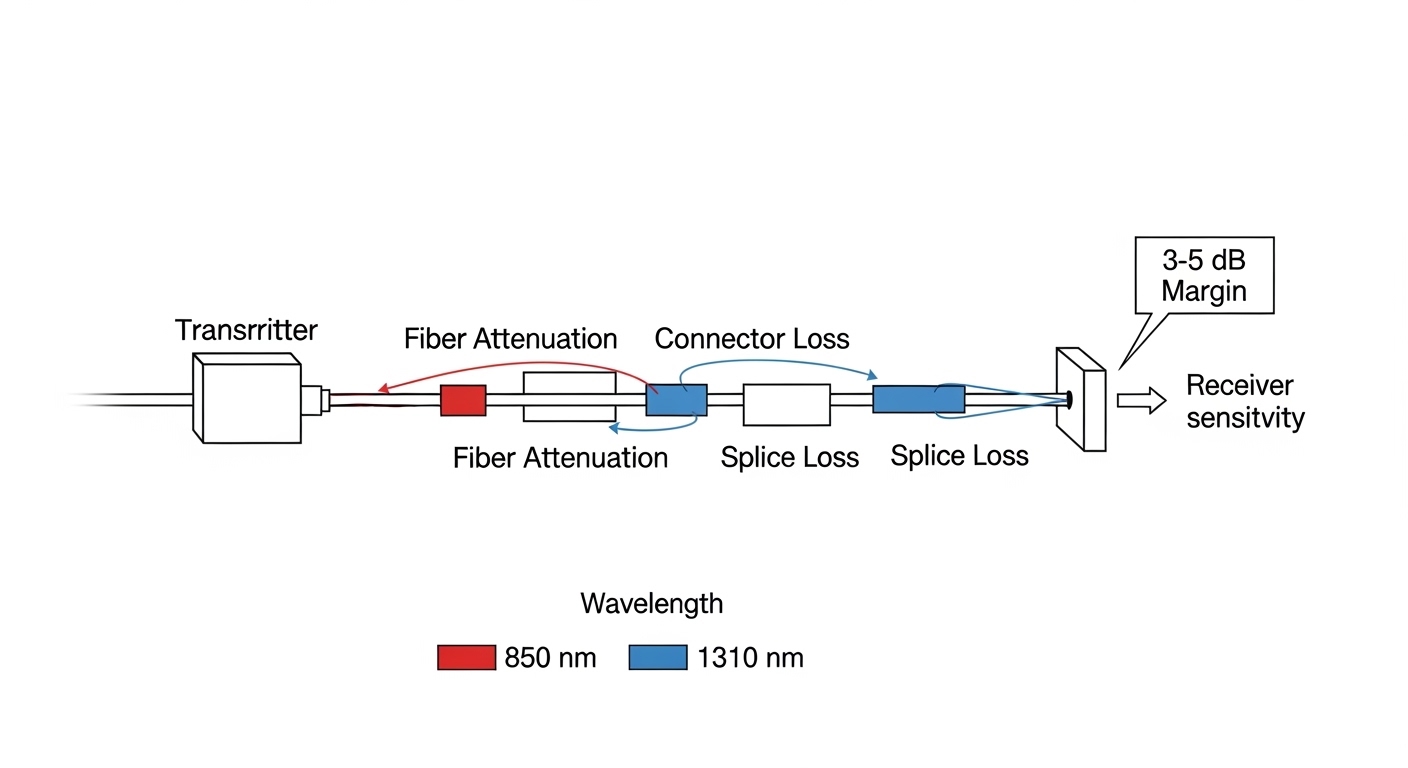

For most data center-like Open RAN sites, fronthaul fiber is short-reach multimode (OM3/OM4) or sometimes single-mode if the radio unit is further out. Multimode typically uses 850 nm (SR class), while single-mode uses 1310 nm (LR class) or 1550 nm (ER/ZR class). Do not rely on “spec sheet reach” alone; use measured attenuation plus worst-case connector and splice loss. IEEE link budgets are framed around receiver sensitivity and transmitter launch power, but your plant adds losses the standard cannot account for: patch panel insertion loss, extra mated connectors, and bends tighter than the rated bend radius.

Expected outcome: a link budget spreadsheet for each fiber path with a required minimum margin (I typically target at least 3 to 5 dB design headroom for operational drift and cleaning variability).
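The arithmetic behind that spreadsheet can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the names `FiberPath` and `link_margin_db` are hypothetical, every number is a placeholder rather than a datasheet value, and the model ignores dispersion and other power penalties that a full budget would include.

```python
from dataclasses import dataclass


@dataclass
class FiberPath:
    """One fiber path. All values used below are illustrative placeholders."""
    length_km: float
    atten_db_per_km: float        # measured attenuation at the operating wavelength
    connector_count: int
    loss_per_connector_db: float  # worst-case loss per mated connector pair
    splice_count: int = 0
    loss_per_splice_db: float = 0.0

    def total_loss_db(self) -> float:
        """Sum fiber, connector, and splice losses for the path."""
        return (self.length_km * self.atten_db_per_km
                + self.connector_count * self.loss_per_connector_db
                + self.splice_count * self.loss_per_splice_db)


def link_margin_db(min_tx_dbm: float, rx_sensitivity_dbm: float,
                   path: FiberPath) -> float:
    """Worst-case margin: minimum launch power minus path loss minus sensitivity."""
    return min_tx_dbm - path.total_loss_db() - rx_sensitivity_dbm


# Placeholder inputs; replace with datasheet minimums and measured plant values.
path = FiberPath(length_km=2.0, atten_db_per_km=0.4, connector_count=4,
                 loss_per_connector_db=0.5, splice_count=2,
                 loss_per_splice_db=0.1)
margin = link_margin_db(min_tx_dbm=-8.2, rx_sensitivity_dbm=-14.4, path=path)
print(f"path loss {path.total_loss_db():.1f} dB, margin {margin:.1f} dB")
```

Using the minimum launch power and the worst-case receiver sensitivity, rather than typical values, keeps the result conservative. Compare the printed margin against the 3 to 5 dB design headroom before approving the path.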

Validate connector and optics physical layer compatibility

Confirm connector type (LC vs MPO/MTP) and polarity requirements. For MPO-based QSFP optics, polarity mismatches are a top cause of “it lights but it errors” behavior, because the transceiver expects a specific lane mapping. In Open RAN, you often use pre-terminated trunks and cassettes; verify whether your cassette is polarity-reversed and whether the transceiver is designed for that mapping. Also check that the switch port supports that exact optic type and that any breakout cabling or lane reversal is handled by the patching method.

Expected outcome: a verified patching plan (connector type, cassette polarity, and lane mapping) that matches the optics expectation.
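A patching plan like this can be checked on paper before anyone touches a trunk. The sketch below is a simplified model, not a vendor tool: it assumes an SR4-style MPO-12 layout (positions 1 to 4 transmit, 9 to 12 receive, 5 to 8 unused), treats every segment as either straight-through (Type A) or reversed (Type B), and ignores adapter keying. The helper names `through` and `channel_ok` are hypothetical; confirm the real position layout against the module datasheet.

```python
# Assumed SR4-style MPO-12 layout; verify against your module's datasheet.
TX_POSITIONS = {1, 2, 3, 4}
RX_POSITIONS = {9, 10, 11, 12}


def through(segment_type: str, position: int) -> int:
    """Return the far-end fiber position for a near-end position on one segment."""
    if segment_type == "A":   # straight-through: 1 -> 1 ... 12 -> 12
        return position
    if segment_type == "B":   # reversed: 1 -> 12 ... 12 -> 1
        return 13 - position
    raise ValueError(f"unsupported segment type: {segment_type}")


def channel_ok(segments: list[str]) -> bool:
    """True if every transmit position lands on a receive position at the far end."""
    for pos in TX_POSITIONS:
        far = pos
        for seg in segments:
            far = through(seg, far)
        if far not in RX_POSITIONS:
            return False
    return True


print(channel_ok(["B"]))            # single reversed trunk
print(channel_ok(["A", "B", "A"]))  # one reversal in the channel
print(channel_ok(["B", "B"]))       # two reversals cancel out
```

In this model a channel works only when it contains an odd number of reversals, which is why a second reversed cassette or trunk added during a site reuse can silently break a previously working plan.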

Enforce DOM and monitoring behavior requirements

Open RAN operations teams increasingly depend on transceiver diagnostics to detect aging optics and early fiber degradation. DOM support is not just “present or absent”; the platform may interpret thresholds differently. For example, DOM usually exposes temperature, supply voltage, laser bias current, and received optical power. You must confirm the switch and management stack can read those values and that alarms are mapped to actionable events. If your platform uses vendor-specific threshold logic, third-party optics can report DOM fields but still not trigger the same operational workflows.

Expected outcome: a validated monitoring path: you can read DOM via your NMS (or switch CLI) and alarms land in your ticketing system.
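When a platform only hands you raw diagnostic bytes, it helps to know how the values are encoded. The sketch below decodes the five real-time fields that SFF-8472 places at offsets 96 to 105 of the diagnostics page for SFP-family modules, assuming internal calibration. How you obtain the bytes is platform-specific and not shown; the function names and the sample values are illustrative, and QSFP28 modules use a different memory map (SFF-8636).

```python
import math
import struct


def uw_tenths_to_dbm(raw_value: int) -> float:
    """Convert optical power in 0.1 uW units to dBm; zero maps to -inf."""
    if raw_value == 0:
        return float("-inf")
    return 10.0 * math.log10(raw_value * 1e-4)  # 0.1 uW equals 1e-4 mW


def decode_dom(raw: bytes) -> dict:
    """Decode 10 bytes: temperature, Vcc, TX bias, TX power, RX power."""
    if len(raw) != 10:
        raise ValueError("expected exactly 10 bytes")
    # Big-endian: one signed 16-bit value followed by four unsigned 16-bit values.
    temp, vcc, bias, tx_pwr, rx_pwr = struct.unpack(">hHHHH", raw)
    return {
        "temperature_c": temp / 256.0,   # 1/256 degree C per LSB
        "vcc_v": vcc * 100e-6,           # 100 uV per LSB
        "tx_bias_ma": bias * 2e-3,       # 2 uA per LSB
        "tx_power_dbm": uw_tenths_to_dbm(tx_pwr),
        "rx_power_dbm": uw_tenths_to_dbm(rx_pwr),
    }


# Synthetic sample: 40 C, 3.3 V, 6 mA bias, 0.5 mW TX, 0.1 mW RX.
sample = struct.pack(">hHHHH", 10240, 33000, 3000, 5000, 1000)
reading = decode_dom(sample)
for name, value in reading.items():
    print(f"{name}: {value:.2f}")
```

Decoding the values yourself is a useful cross-check during commissioning: if the switch CLI and your own decode disagree, the platform may be applying its own calibration or threshold logic.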

Confirm operating temperature and power constraints in the cabinet

Temperature matters because optical networking transceivers exhibit transmitter power drift and receiver sensitivity changes with temperature. In radio rooms, I have seen modules operate near the upper end of their spec due to blocked airflow in dense Open RAN cabinets. Check both the module temperature range and your switch’s internal airflow assumptions. Also verify power draw limits for the port—some optics with higher launch power or integrated digital diagnostics can be near platform thresholds.

Expected outcome: a thermal validation pass that demonstrates the module will remain within spec under worst-case cabinet temperature and fan failures.

Select specific transceiver models and sanity-check interoperability

Interoperability is where projects stall. Even if the link comes up at layer 1, you can still see high BER or periodic link resets if the optical budget is tight. Use vendor compatibility lists where available, and cross-check with transceiver manufacturer datasheets for parameters like launch power, receiver sensitivity, and DOM compliance. In deployments I have supported, I have used known-good optics such as Cisco SFP-10G-SR, Finisar parts like FTLX8571D3BCL, and third-party modules such as FS.com SFP-10GSR-85 when the switch supports them. Always treat “works on the bench” as insufficient; bench tests must be repeated at the target temperature and with the same fiber patching.

Expected outcome: a procurement list with part numbers, supported by both switch documentation and transceiver datasheets, plus a test plan for staged rollout.

Key transceiver specifications to compare for Open RAN fronthaul

Optical networking choices often collapse into “SR vs LR,” but Open RAN adds operational constraints: DOM monitoring, cabinet thermal envelopes, and consistent behavior under link renegotiation. The table below compares representative optics used in 10G, 25G, and 100G (4 x 25G) Open RAN transport scenarios. Use it as a baseline; always confirm the exact module datasheet for launch power, receiver sensitivity, and compliance.

| Spec | 10G SFP+ SR (850 nm, MM) | 10G SFP+ LR (1310 nm, SM) | 25G SFP28 SR (850 nm, MM) | 100G QSFP28 SR4 (850 nm, MM) |
|---|---|---|---|---|
| Typical data rate | 10.3125 Gbps | 10.3125 Gbps | 25.78125 Gbps | 4 x 25.78125 Gbps |
| Wavelength | 850 nm | 1310 nm | 850 nm | 850 nm |
| Connector | LC | LC | LC | MPO-12 (SR4) |
| Reach class (typical) | Up to 300 m OM3 / 400 m OM4 | Up to 10 km (OS2 single-mode) | Up to 70 m OM3 / 100 m OM4 (extended-reach variants vary by vendor) | Up to 70 m OM3 / 100 m OM4 (extended-reach variants vary by vendor) |
| DOM | Commonly supported (per SFF-8472) | Commonly supported (per SFF-8472) | Commonly supported (per SFF-8472) | Commonly supported (per SFF-8636) |
| Typical temperature range | 0 to 70 °C or -5 to 70 °C (module dependent) | 0 to 70 °C (module dependent) | -5 to 70 °C often available | -5 to 70 °C often available |
| Compliance references | IEEE 802.3 and SFF optical specs | IEEE 802.3 and SFF optical specs | IEEE 802.3 and SFF optical specs | IEEE 802.3 and SFF optical specs |

Operational note: IEEE 802.3 defines Ethernet PHY behavior, but the actual optical power and receiver sensitivity are module-specific. If you are running tight margins, the safer move is to select a higher launch power module or a longer-reach class that still fits the fiber type, then validate with measured receive power.

Pro Tip: In Open RAN fronthaul, the most useful early warning signal is not link state; it is DOM received optical power trending. I have seen “green” ports with stable link up, but DOM RX power slowly drifts toward the receiver threshold over weeks due to micro-bends in patch panels. If you graph DOM RX power and correlate it with temperature, you can catch fiber degradation before BER spikes.
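A simple way to act on that trend is to fit a line through the logged RX power and estimate when it would cross the alarm threshold. The sketch below uses an ordinary least-squares fit on dBm values, which assumes the drift is roughly linear in dB. The function names, the sample readings, and the -11.0 dBm threshold are all illustrative assumptions, and temperature correlation is left out for brevity.

```python
from typing import Optional


def fit_slope(days: list[float], rx_dbm: list[float]) -> tuple[float, float]:
    """Least-squares line fit; returns (slope in dB/day, intercept in dBm)."""
    n = len(days)
    if n < 2 or n != len(rx_dbm):
        raise ValueError("need at least two samples of equal length")
    mean_x = sum(days) / n
    mean_y = sum(rx_dbm) / n
    sxx = sum((x - mean_x) ** 2 for x in days)
    if sxx == 0:
        raise ValueError("all samples share the same timestamp")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(days, rx_dbm))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def days_until_threshold(days: list[float], rx_dbm: list[float],
                         threshold_dbm: float) -> Optional[float]:
    """Days after the last sample until the fitted line reaches the threshold.

    Returns None when RX power is flat or improving.
    """
    slope, intercept = fit_slope(days, rx_dbm)
    if slope >= 0:
        return None
    crossing_day = (threshold_dbm - intercept) / slope
    return max(0.0, crossing_day - days[-1])


# Illustrative weekly readings drifting down by 0.2 dB per week.
sample_days = [0.0, 7.0, 14.0, 21.0, 28.0]
sample_rx = [-6.0, -6.2, -6.4, -6.6, -6.8]
slope, intercept = fit_slope(sample_days, sample_rx)
remaining = days_until_threshold(sample_days, sample_rx, threshold_dbm=-11.0)
print(f"slope {slope:.4f} dB/day, about {remaining:.0f} days to threshold")
```

The estimate is only as good as the linearity assumption, so treat it as a prompt to inspect the patch panel, not as a prediction of the failure date.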

Selection criteria checklist engineers actually use

When you are selecting optical networking transceivers for Open RAN, you are optimizing for stability under real conditions, not just compliance at room temperature. Here is the ordered checklist I recommend for each candidate module.

- Distance and fiber type: OM3/OM4 vs OS2, measured attenuation, and connector losses.

- Data rate and port form factor: SFP+/SFP28 vs QSFP28, plus switch line card compatibility.

- Wavelength and reach class: 850 nm SR for short MM, 1310 nm LR for SM, ensure the module matches your fiber plant.

- DOM support and alarm thresholds: confirm your management stack reads DOM fields and triggers the correct alarms.

- Operating temperature envelope: cabinet airflow, worst-case ambient, and module temperature range.

- Launch power and receiver sensitivity: confirm you meet a minimum optical margin for your worst-case fiber path.

- Interoperability and vendor lock-in risk: check switch compatibility lists and plan for a controlled third-party rollout if needed.

- Maintenance strategy: whether you can hot-swap modules without causing service disruption, and whether spares are standardized across sites.

If you want authoritative baseline behavior for Ethernet PHY requirements, consult the IEEE 802.3 standards for optical Ethernet objectives and vendor datasheets for the exact transceiver parameters [Source: IEEE Standards Association]. For module diagnostic behavior and digital monitoring conventions, consult transceiver form-factor documentation and vendor datasheets, along with community network tooling discussions on DOM monitoring [Source: Linux Foundation community projects].

Common pitfalls and troubleshooting for optical networking in Open RAN

Below are the top failure modes I see during acceptance testing and live operations. Each includes root cause and a concrete solution path you can apply in the field.

Pitfall 1: “It links up” but throughput collapses due to marginal optical budget

Root cause: Launch power and receiver sensitivity barely meet the spec-sheet reach, but real plant losses (patch panels, dirty connectors, micro-bends) reduce optical margin. In Open RAN, you might see intermittent eCPRI timing issues that appear as congestion or retransmissions. The link can remain “up” while BER grows.

Solution: Inspect and clean both connector end faces, then read the optical receive power from DOM on the switch and compare it with the module's receiver sensitivity. If the margin is still thin, replace the module with a higher-power or longer-reach class where the platform allows, and re-terminate any connector that shows excessive loss during OTDR review.

Pitfall 2: Polarity or lane mapping mismatch on MPO/MTP trunks

Root cause: MPO optics assume a specific lane direction and polarity configuration. If the cassette or trunk is reversed, you can get low or zero optical power on some lanes, causing link flaps or persistent errors. This is especially common when integrators reuse prebuilt trunks across sites with different patching conventions.

Solution: Use the MPO polarity method consistent with the transceiver vendor’s guidance and verify lane mapping with a continuity tester or approved polarity checker. Re-patch using the correct cassette orientation, then confirm DOM RX power per lane if your platform exposes lane-level diagnostics.

Pitfall 3: Switch rejects module or misreads DOM leading to alarm storms

Root cause: Some switches enforce compatibility checks or apply vendor-specific threshold logic. A third-party optical module can be electrically compatible but report DOM values in a way that triggers false alarms or blocks thresholds from updating. In Open RAN operations, that can flood your NOC and trigger automated remediation loops.

Solution: Validate module compatibility using your vendor’s supported optics list. During commissioning, confirm DOM readout, threshold configuration, and alarm routing. If alarms are unstable, align thresholds with the module’s DOM calibration approach or standardize on a single validated optics supplier for the rollout phase.

Cost and ROI note for optical networking optics in Open RAN

Pricing varies widely by reach class, form factor, and whether you buy OEM vs third-party. In many markets, 10G SFP+ SR modules tend to be relatively affordable, while higher-reach single-mode optics and QSFP28 modules carry a premium. For TCO, factor in labor for cleaning and re-termination, the cost of spares, and the operational overhead of troubleshooting marginal links. If your rollout spans dozens of sites, the ROI often favors a smaller set of standardized, validated part numbers to reduce inventory complexity and reduce mean time to repair.

Realistic ranges (ballpark): OEM optics can cost roughly 1.5x to 3x third-party equivalents, but OEM support can reduce integration risk. The biggest hidden cost is not the module itself; it is the time spent chasing intermittent optical issues caused by tight budgets and connector cleanliness. Plan spares for each site type and keep a controlled qualification test bench so you can revalidate optics before mass deployment.

FAQ: optical networking transceiver choices for Open RAN

Q1: Should I standardize on 850 nm SR optics for Open RAN fronthaul?

Only if your fiber plant supports it with adequate margin. For OM3/OM4, 850 nm SR is common and cost-effective, but you must base the decision on measured attenuation and connector/splice losses. If any path is near the reach limit, consider a longer-reach class or single-mode to buy margin.

Q2: How important is DOM support for day-2 operations?

It is very important if you want proactive maintenance. DOM lets you trend received optical power and detect degradation before traffic impact. However, confirm your switch and NMS can read DOM correctly and that alarm thresholds behave as expected for the specific vendor module.

Q3: Can I mix OEM and third-party transceivers on the same switch?

Sometimes, but it depends on platform compatibility checks and threshold logic. I recommend a controlled approach: validate a small batch, confirm link stability and alarm behavior, then standardize if results are consistent. Mixing increases operational complexity during incident response.

Q4: What is the fastest way to isolate a link problem?

Start with connector cleaning and polarity verification, then check DOM RX power and temperature. If RX power is low or fluctuating, focus on fiber path and patching. If RX power is normal but errors persist, focus on module compatibility and switch port configuration.
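That isolation order can be written down as a small decision function so that every engineer on a rollout follows the same sequence. This is a sketch of the steps above, not a diagnostic tool: the names `NextStep` and `triage` are hypothetical, the low-power limit and allowed swing are site-specific inputs you have to choose, and the temperature check is left to the operator.

```python
from enum import Enum


class NextStep(Enum):
    CLEAN_AND_VERIFY = "clean connectors and verify polarity"
    CHECK_FIBER_PATH = "inspect fiber path and patching"
    CHECK_MODULE_AND_PORT = "check module compatibility and port configuration"
    NO_ACTION = "no optical fault indicated"


def triage(cleaned_and_polarity_checked: bool, rx_dbm_samples: list[float],
           rx_low_dbm: float, max_swing_db: float,
           errors_increasing: bool) -> NextStep:
    """Pick the next troubleshooting step from recent DOM RX power samples."""
    if not rx_dbm_samples:
        raise ValueError("need at least one RX power sample")
    if not cleaned_and_polarity_checked:
        return NextStep.CLEAN_AND_VERIFY
    low = min(rx_dbm_samples) < rx_low_dbm
    fluctuating = (max(rx_dbm_samples) - min(rx_dbm_samples)) > max_swing_db
    if low or fluctuating:
        return NextStep.CHECK_FIBER_PATH
    if errors_increasing:
        return NextStep.CHECK_MODULE_AND_PORT
    return NextStep.NO_ACTION


# Illustrative call: RX power is steady and healthy, but errors keep climbing.
step = triage(cleaned_and_polarity_checked=True,
              rx_dbm_samples=[-5.1, -5.0, -5.2],
              rx_low_dbm=-9.0, max_swing_db=1.0, errors_increasing=True)
print(step.value)
```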

Q5: Which standards should I reference during optical networking acceptance tests?

IEEE 802.3 for Ethernet PHY expectations is the baseline, and vendor datasheets for launch power, receiver sensitivity, and diagnostic behavior are essential. Also follow your transceiver form-factor documentation for DOM and monitoring conventions. Use those sources to build a measurable acceptance checklist.

Q6: What should be in my commissioning test results for Open RAN?

Include DOM readings (TX power, RX power, temperature), link error counters, and a traffic test that matches your expected eCPRI/transport profile. Record the fiber path identification and patching method so you can reproduce results during future swaps. Then repeat a subset of tests at the expected worst-case cabinet temperature if you can.

By following a disciplined optical networking transceiver selection workflow—mapped to Open RAN interface rates, fiber budgets, DOM monitoring, and thermal constraints—you can prevent the most common rollout failures. Next step: review how to measure fiber link budgets for optical networking and build your site-specific budget workbook before ordering inventory.

Author bio: I am a field-focused network engineer who has deployed optical networking for radio and transport systems across multi-vendor environments, validating optics with DOM and measured link budgets. I write operational checklists that prioritize stability, measurable margins, and failure-mode driven commissioning.