A mid-size company in a three-building campus learned the hard way that “fiber types” are not interchangeable: the wrong choice quietly turns into higher maintenance, weird link drops, and a budget you did not plan for. This article walks through a real deployment of single-core versus multi-core fiber for business operations, including measured results, operational details a field engineer will recognize, and a checklist you can reuse. If you run WAN extensions, data center interconnects, or campus LANs, you will get practical selection guidance—not vibes.

Problem: campus latency and recurring link flaps

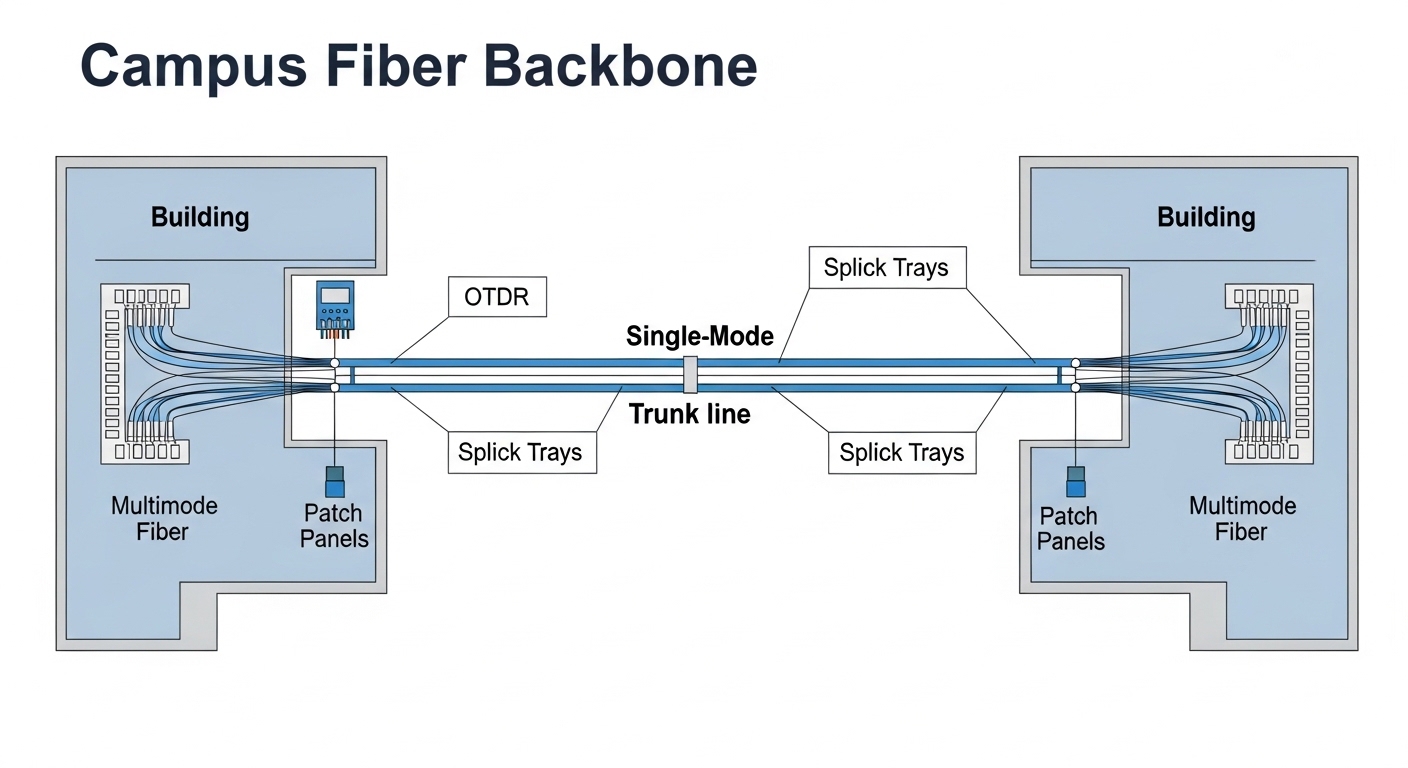

The challenge started in a campus network with two distribution buildings and one main building. The company needed to interconnect 10G Ethernet between buildings for file services and virtual desktops, using existing duct runs and a limited outage window. Over two months, they observed intermittent link renegotiations on several 10G SFP+ uplinks, with symptoms like CRC errors, link-down events during temperature swings, and uneven performance between buildings. The root cause investigation pointed toward fiber choice and handling sensitivity: multimode (“multi-core”) cabling on runs of this length left the links sensitive to modal bandwidth limits and connector quality, while single-mode (“single-core”) runs were more predictable under disciplined termination.

Because the team had mixed spares and transceivers, they also faced a compatibility tax: some optics were not happy with the connector polish, return loss budget, or the measured attenuation they were seeing. In other words, fiber types were the main character, but the supporting cast—connectors, splicing losses, and optics—made the plot messy.

Environment specs: distance, optics, and what the building duct actually did

Before choosing fiber types, the team logged key parameters so the decision could be engineered, not guessed. The inter-building distance was 430 m on average (one run was 510 m). They used 10G Ethernet with SFP+ optics and wanted to stay within a reasonable transceiver budget. The duct environment included seasonal temperature variation from roughly 5 °C to 35 °C, plus occasional micro-movements from nearby construction. That matters because attenuation and connector reflectance can drift when terminations are stressed.

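On the optics side, they targeted wavelengths and link budgets aligned with the IEEE 802.3 specification for 10GBASE-SR. For short-reach Ethernet over multimode, SR optics operate at 850 nm, while single-core (single-mode) long-reach options use longer wavelengths, typically 1310 nm for 10GBASE-LR and 1550 nm for 10GBASE-ER. Nominal 10GBASE-SR reach is 300 m on OM3 and 400 m on OM4, so both the 430 m average and the 510 m run sat beyond the rated multimode distance before any connector or splice loss was counted. The team also required pluggable optics that supported DOM (digital optical monitoring) so they could correlate light levels and error counters to actual fiber performance.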
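The distance figures in this section can be sanity-checked against nominal reach before any field testing. The sketch below is illustrative only: it assumes the commonly quoted reach figures (300 m on OM3 and 400 m on OM4 for 10GBASE-SR, 10 km on single-mode for 10GBASE-LR), the names `NOMINAL_REACH_M` and `reach_headroom_m` are made up for this example, and the article does not state which multimode grade was actually installed.

```python
# Nominal reach in metres for common 10G optic/fiber pairings.
# Assumption: commonly quoted figures; always confirm on the optic's datasheet.
NOMINAL_REACH_M = {
    ("10GBASE-SR", "OM3"): 300,
    ("10GBASE-SR", "OM4"): 400,
    ("10GBASE-LR", "OS2"): 10_000,
}


def reach_headroom_m(optic: str, fiber: str, run_length_m: float) -> float:
    """Return nominal reach minus run length; negative means out of spec."""
    return NOMINAL_REACH_M[(optic, fiber)] - run_length_m


if __name__ == "__main__":
    for run in (430, 510):  # run lengths reported in the site survey
        for optic, fiber in NOMINAL_REACH_M:
            headroom = reach_headroom_m(optic, fiber, run)
            status = "OK" if headroom >= 0 else "OUT OF SPEC"
            print(f"{run} m on {optic}/{fiber}: headroom {headroom:+.0f} m ({status})")
```

Nominal reach is only a first filter. A run that passes this check still needs a measured loss budget.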

| Spec item | Single-core fiber (typical single-mode) | Multi-core fiber (typical multimode) |
|---|---|---|
| Core concept | One guided path (single propagation mode) | Multiple guided paths (multiple modes) |
| Common Ethernet wavelength for 10G | Varies by transceiver (often 1310 nm for longer reach) | Often 850 nm for 10GBASE-SR optics |
| Typical reach used in business campus | Hundreds of meters to kilometers depending on optics and budget | Hundreds of meters for 10GBASE-SR depending on fiber grade and optics |
| Sensitivity to connectors/splices | Lower modal sensitivity; still needs clean connectors and good polishing | Higher sensitivity to bandwidth limits, connector geometry, and launch conditions |
| Temperature effects | Generally more stable for modal behavior; attenuation still matters | Modal distribution can be more sensitive to stress and launch conditions |
| Best-fit use case | Distance uncertainty, future upgrades, consistent performance | Shorter runs, cost-optimized links, controlled termination practices |
| Operational risk | Lower variability if terminated and tested correctly | More variability if terminations or patching are inconsistent |

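Note: The phrase “single-core versus multi-core” is used here as a business-friendly shorthand for single-mode versus multimode behavior. Strictly speaking, both fiber types have one core per strand, and true multi-core fiber is a separate technology that this article does not cover. In practice, the correct selection depends on the exact fiber grade, core size, modal bandwidth, and the transceiver type.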

Chosen solution: standardize on single-core for inter-building links

After measurements and termination audits, the team chose to standardize the inter-building backbone to single-core fiber for the campus runs that mattered most. The reason was operational determinism: single-mode behavior reduces sensitivity to modal effects and makes link budgeting more forgiving when ducts experience movement. For patch panels inside each building, they kept multimode only where distances were short and termination quality could be tightly controlled.

For transceivers, they used vendor-documented optics with DOM support so they could watch real-time transmit power and receive power. In the field, a common pattern is to pair compatible optics with the fiber type and connector grade: multimode SR optics such as Cisco SFP-10G-SR, Finisar FTLX8571D3BCL, and FS.com SFP-10GSR variants (all 850 nm) for short multimode links, and 10GBASE-LR class single-mode optics such as Cisco SFP-10G-LR or Finisar FTLX1471D3BCL (1310 nm) for longer reach. Always confirm the exact wavelength and reach category on the datasheet before you commit.

Pro Tip: If you are troubleshooting fiber types, do not start with “which cable is better.” Start with measured link budget inputs: OTDR traces for splice/segment loss and an end-face inspection report for connector cleanliness. Many “wrong fiber” cases are actually “wrong polish angle” or “contaminated ferrule,” which then look like fiber types are the villain.

Implementation steps: how the team executed without turning it into a science fair

Verify fiber grade and run-by-run loss

They pulled documentation for the installed cables and then confirmed with testing. Using an OTDR (optical time-domain reflectometer), they identified high-loss events at splice closures and suspected stress points. They also measured end-to-end insertion loss with a calibrated light source and power meter, then compared results to the expected link budget for the chosen optics class.
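The comparison of measured loss against the expected link budget can be sketched as simple arithmetic. The example below is a minimal illustration, not the team's actual worksheet: the per-kilometer, per-connector, and per-splice planning values are assumed defaults, the 6.0 dB budget is a hypothetical placeholder to be replaced with the datasheet figure, and `RunPlan`, `estimated_loss_db`, and `margin_db` are names invented for this sketch.

```python
from dataclasses import dataclass


@dataclass
class RunPlan:
    length_m: float        # cable length of the run
    connector_pairs: int   # mated connector pairs along the path
    splices: int           # fusion or mechanical splices along the path


def estimated_loss_db(run: RunPlan,
                      fiber_db_per_km: float = 0.5,   # assumed planning value
                      connector_db: float = 0.75,     # assumed per mated pair
                      splice_db: float = 0.3) -> float:  # assumed per splice
    """Planning estimate: fiber attenuation plus connector and splice losses."""
    return (run.length_m / 1000.0 * fiber_db_per_km
            + run.connector_pairs * connector_db
            + run.splices * splice_db)


def margin_db(budget_db: float, loss_db: float) -> float:
    """Remaining margin; compare against measured loss, not only the estimate."""
    return budget_db - loss_db


if __name__ == "__main__":
    longest = RunPlan(length_m=510, connector_pairs=2, splices=2)
    planned = estimated_loss_db(longest)
    hypothetical_budget_db = 6.0  # placeholder; use the optic's datasheet value
    print(f"planned loss: {planned:.2f} dB, "
          f"margin: {margin_db(hypothetical_budget_db, planned):.2f} dB")
```

If the measured insertion loss from the light source and power meter is noticeably higher than the planning estimate, the OTDR trace is the place to look for the extra loss.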

Standardize connector inspection and cleaning

For multimode patching, they enforced strict ferrule end-face inspection and cleaning. They used a microscope inspection workflow before any transceiver insertion and after any re-patch. This reduced the “mystery CRC storms” that happen when dust turns into micro-attenuation or reflection noise.

Choose optics that match fiber types and DOM strategy

They selected optics by fiber type and target reach class, then validated DOM fields. Field engineers love DOM because it turns “it seems flaky” into numbers like transmit power, receive power, and temperature. Where supported, they also correlated interface counters (CRC/FCS errors) with DOM trends so they could separate marginal optical budget from other physical layer faults.
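One way to picture the correlation between error counters and DOM readings is a small classifier over polled samples. This is a hedged sketch under stated assumptions: the sample structure, the 3 dB minimum margin, and the receiver sensitivity passed in are all hypothetical, and how you collect DOM values and counters depends on your switch platform, which is not shown here.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass
class PollSample:
    rx_power_dbm: float  # DOM receive power at poll time
    crc_delta: int       # CRC errors accumulated since the previous poll


def classify_link(samples: Iterable[PollSample],
                  rx_sensitivity_dbm: float,
                  min_margin_db: float = 3.0) -> str:
    """Rough triage: do errors coincide with low receive power or not?"""
    errored = [s for s in samples if s.crc_delta > 0]
    if not errored:
        return "clean"
    # Errored polls where light level was close to the receiver's limit.
    low_light = [s for s in errored
                 if s.rx_power_dbm - rx_sensitivity_dbm < min_margin_db]
    if len(low_light) * 2 >= len(errored):
        return "marginal-optical-budget"
    # Errors while light level looks healthy: look at connectors, ports, cabling.
    return "physical-fault-suspected"


if __name__ == "__main__":
    history = [PollSample(-13.0, 40), PollSample(-12.8, 12), PollSample(-6.0, 0)]
    # -14.0 dBm is a hypothetical sensitivity; take the real one from the datasheet.
    print(classify_link(history, rx_sensitivity_dbm=-14.0))
```

The majority rule is deliberately crude. Its only job is to decide which tool to pick up first: the power meter or the inspection scope.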

Terminate and splice with conservative quality targets

Splice loss targets were kept low and consistent, and they reworked any joints that exceeded the internal threshold. They also avoided tight bend radii during cable management because fiber attenuation can increase when stress is introduced—especially in already-sensitive multimode patch segments.

Measured results: what changed after fiber types were standardized

After migration, the team ran a 30-day monitoring period on the inter-building 10G Ethernet links. They tracked interface counters and DOM telemetry, then compared them to the prior baseline where link flaps were observed. The outcomes were not subtle.

- Link stability: Link renegotiations dropped from an average of 12 events per week to 0 to 1 events per month.

- Error rates: CRC errors decreased by roughly 95% on the standardized inter-building trunk paths.

- Optical margin: Receive power stayed within an expected window with less drift, improving confidence in the optical budget.

- Mean time to repair: MTTR improved from roughly 3 to 4 hours per incident to 45 to 60 minutes because faults were easier to isolate to specific segments and connectors.
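To put the figures above on a common footing, the weekly flap rate can be scaled to the 30-day monitoring window and the improvements expressed as percentages. This is plain arithmetic on the numbers reported in the list; the helper name `pct_reduction` is just for this sketch.

```python
def pct_reduction(before: float, after: float) -> float:
    """Percentage reduction from `before` to `after`."""
    return (before - after) / before * 100.0


if __name__ == "__main__":
    # 12 flaps per week scaled to a 30-day window (about 51 events).
    flaps_before_30d = 12 * 30 / 7
    # Worst case after migration was 1 event in the window.
    print(f"flaps: at least {pct_reduction(flaps_before_30d, 1):.1f}% fewer")

    # MTTR in minutes: 3-4 hours before, 45-60 minutes after.
    print(f"MTTR (low end):  {pct_reduction(180, 45):.0f}% shorter")
    print(f"MTTR (high end): {pct_reduction(240, 60):.0f}% shorter")
```

On these numbers, flaps fell by roughly 98% and repair time by about 75% at both ends of the reported range.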

Costs also shifted in a predictable way. Single-core backbone cabling and compatible optics were not the cheapest per port, but the reduced truck rolls and fewer rework cycles lowered total cost of ownership. The team still used multimode where it made sense—short patch distances inside buildings—because multimode can be a cost-effective choice when termination quality is controlled and link reach requirements are within spec.

Common mistakes and troubleshooting tips (the stuff that bites)

Mistake 1: Mixing fiber types with mismatched transceivers

Root cause: Using a multimode-oriented optic on a single-core backbone (or vice versa) can lead to weak receive power, higher error rates, or outright link failure. Even if a link seems to come up briefly, it can be unstable due to optical budget mismatch.

Solution: Confirm transceiver wavelength and reach class against the fiber type. Verify connector type (LC vs SC), and validate with DOM readings immediately after insertion.
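The wavelength and fiber-type confirmation can be turned into a pre-insertion check. The following is a minimal sketch: the profile table covers only three common 10G classes with their standard nominal wavelengths, the function name `pairing_problems` is invented for this example, and a real inventory would also need connector type and vendor coding, which are left out here.

```python
# Nominal wavelength and fiber type for a few common 10G optic classes.
OPTIC_PROFILES = {
    "10GBASE-SR": {"wavelength_nm": 850, "fiber": "multimode"},
    "10GBASE-LR": {"wavelength_nm": 1310, "fiber": "single-mode"},
    "10GBASE-ER": {"wavelength_nm": 1550, "fiber": "single-mode"},
}


def pairing_problems(optic_a: str, optic_b: str, fiber_kind: str) -> list:
    """Return human-readable problems for a planned link; empty list means OK."""
    problems = []
    for optic in (optic_a, optic_b):
        if optic not in OPTIC_PROFILES:
            problems.append(f"unknown optic class: {optic}")
    if problems:
        return problems
    if optic_a != optic_b:
        problems.append(f"link ends differ: {optic_a} vs {optic_b}")
    # Check each distinct optic class against the installed fiber type.
    for optic in sorted({optic_a, optic_b}):
        expected = OPTIC_PROFILES[optic]["fiber"]
        if expected != fiber_kind:
            problems.append(f"{optic} expects {expected} fiber, run is {fiber_kind}")
    return problems


if __name__ == "__main__":
    for issue in pairing_problems("10GBASE-SR", "10GBASE-LR", "single-mode"):
        print("PROBLEM:", issue)
```

A clean result here only means the plan is consistent on paper. The DOM reading immediately after insertion is still the real confirmation.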

Mistake 2: Overlooking connector contamination after “quick swaps”

Root cause: Dust on ferrule end-faces creates micro-reflections and attenuation that show up as CRC errors and intermittent link drops. Field teams often touch patch cords during re-cabling, then assume “it looks clean.”

Solution: Use a fiber end-face inspection scope before mating and after any rework. Clean with appropriate lint-free methods designed for fiber ferrules, and replace any damaged connectors.

Mistake 3: Underestimating bend stress and patch cord handling

Root cause: Tight bend radii during cable dressing can increase attenuation. Multimode patch segments can be especially sensitive to stress and inconsistent launch conditions.

Solution: Enforce minimum bend radius during management. Use proper strain relief, re-route patch cords away from sharp edges, and measure insertion loss at problematic segments.

Mistake 4: Skipping OTDR and relying on “it passes light”

Root cause: A basic continuity check does not reveal splice hotspots or segment loss. You end up chasing ghosts like “bad switch ports” when the issue is actually a high-loss splice closure.

Solution: Run OTDR to localize loss events, then validate with end-to-end power measurements. Keep a test record so you can compare future runs.
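Once OTDR events are exported, localizing the suspicious ones is a simple filter against per-event limits. This sketch assumes the events have already been transcribed into a list by hand or by your tester's export tool; the `OtdrEvent` structure and the 0.3 dB splice and 0.75 dB connector limits are assumptions for illustration, since the article does not state the team's internal threshold.

```python
from typing import List, NamedTuple


class OtdrEvent(NamedTuple):
    distance_m: float  # position of the event along the run
    kind: str          # "splice" or "connector"
    loss_db: float     # event loss reported by the OTDR


# Assumed acceptance limits in dB; substitute your own internal thresholds.
LIMITS_DB = {"splice": 0.3, "connector": 0.75}


def flag_events(events: List[OtdrEvent], limits=LIMITS_DB) -> List[OtdrEvent]:
    """Return events whose loss exceeds the limit for their kind."""
    return [e for e in events if e.loss_db > limits[e.kind]]


if __name__ == "__main__":
    trace = [
        OtdrEvent(0.0, "connector", 0.4),
        OtdrEvent(212.0, "splice", 0.9),   # hypothetical bad splice closure
        OtdrEvent(430.0, "connector", 0.5),
    ]
    for event in flag_events(trace):
        print(f"rework {event.kind} at {event.distance_m:.0f} m "
              f"({event.loss_db:.2f} dB)")
```

Storing each trace's event list alongside the date makes the later comparison against future runs a straightforward diff.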

Selection criteria checklist: choosing fiber types that behave under pressure

- Distance and reach margin: Compare measured insertion loss to the transceiver link budget, not just the nominal spec.

- Fiber grade and bandwidth limits: For multimode, fiber grade matters (modal bandwidth, core size). For single-core, confirm single-mode compatibility with optics.

- Switch and transceiver compatibility: Validate that your switch supports the exact transceiver type and connector standard. Some platforms are picky about DOM and vendor coding.

- DOM and telemetry strategy: Prefer optics with DOM so you can detect margin erosion early instead of waiting for link failures.

- Operating temperature and stress profile: Account for seasonal changes and duct movement. Stress can affect loss and reflectance.

- Connector and polish quality: End-face inspection is not optional if you want stable links.

- Vendor lock-in risk: Consider whether OEM optics are required for stable operation, and weigh the savings from third-party optics against their verified return loss, standards compliance, and behavior on your switch.

Cost and ROI note: the spreadsheet reality

Typical price ranges vary by region and volume, but in many enterprise deployments, the incremental cost of switching to single-core backbone cabling and compatible optics is often offset by reduced downtime and fewer rework cycles. OEM optics may cost more upfront than third-party equivalents, but they can reduce compatibility surprises; third-party optics can be cost-effective if they are tested and verified for your switch model and DOM behavior. For TCO, include labor for termination and testing, spares strategy, and the real cost of outages—especially if inter-building links support business-critical services.

In this case, the ROI came from fewer incidents and faster repairs: the team reduced truck rolls and rework time enough that the higher upfront cabling cost paid back within the monitoring period. The key lesson is that fiber types selection is a reliability investment, not just a cable procurement line item.
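The payback reasoning can be written down as a small calculation so it is easy to rerun with your own numbers. Every figure in the usage example is a hypothetical placeholder, not data from this deployment, and the function names are invented for this sketch.

```python
def monthly_incident_cost(incidents_per_month: float,
                          hours_per_incident: float,
                          hourly_cost: float) -> float:
    """Labor and outage cost attributable to link incidents in one month."""
    return incidents_per_month * hours_per_incident * hourly_cost


def payback_months(upfront_cost: float,
                   monthly_cost_before: float,
                   monthly_cost_after: float) -> float:
    """Months until the upfront spend is recovered; inf if there is no saving."""
    saving = monthly_cost_before - monthly_cost_after
    if saving <= 0:
        return float("inf")
    return upfront_cost / saving


if __name__ == "__main__":
    # All inputs below are hypothetical placeholders; replace with your own.
    before = monthly_incident_cost(incidents_per_month=8,
                                   hours_per_incident=3.5,
                                   hourly_cost=200.0)
    after = monthly_incident_cost(incidents_per_month=1,
                                  hours_per_incident=1.0,
                                  hourly_cost=200.0)
    print(f"payback: {payback_months(15_000.0, before, after):.1f} months")
```

The hourly cost should include the business impact of the outage, not just technician time, or the payback will look slower than it really is.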

FAQ

Are fiber types truly “single-core vs multi-core,” or is it more complicated?

It is more nuanced. In practice, engineers map this to single-mode versus multimode behavior, then choose based on distance, transceiver wavelength, fiber grade, and connector/splice quality. Two multimode cables can behave differently if their bandwidth grade differs.

When should a business choose multimode fiber anyway?

Multimode can be a good choice for shorter runs where termination quality is consistent and the transceiver reach class fits the distance with margin. It can also be cheaper for certain optics and patching layouts.

How do I verify fiber types before buying more optics?

Use OTDR and end-to-end insertion loss measurements, then inspect connector end-faces with a microscope. Compare results to the link budget of the exact optics you plan to deploy, and confirm DOM fields if your switches use them.

Why does IEEE 802.3 matter for fiber types?

IEEE 802.3 defines Ethernet physical layer requirements, including reach targets for specific link types like 10GBASE-SR. Using those targets as a starting point helps, but measured attenuation and connector quality determine whether the link is actually reliable.

What are the fastest troubleshooting steps for recurring link drops?

Check DOM telemetry for optical power and temperature trends, inspect connectors for contamination, then run OTDR to localize loss events. Most recurring issues trace back to connector cleanliness, insufficient optical margin, or stress-induced attenuation.

Do third-party optics work reliably across different fiber types?

They can, but compatibility depends on your switch model, DOM expectations, and optical compliance. Always validate with your specific hardware and test each optic after insertion, especially if you are mixing fiber types in a campus environment.

If you want fewer surprises, treat fiber types selection as a reliability engineering problem: measure first, standardize termination practices, and align optics to the measured link budget. Next, review fiber optic transceiver compatibility to avoid the classic “it should work on paper” failure mode.

Author bio: Field-tested network reliability engineer who has chased optical link ghosts through OTDR traces, end-face inspections, and switch counter dashboards. Research-minded writer who cites IEEE requirements and vendor datasheets to turn fiber types decisions into measurable outcomes.