In a leaf-spine data center, optical issues rarely announce themselves cleanly. One noisy transceiver can trigger a rising bit error rate (BER), then link flaps, then a cascade of control-plane churn. This article shows how our team used ML transceiver analytics to predict failures early by fusing transceiver telemetry from digital optical monitoring (DOM), switch counters, and fiber health signals. It is written for network engineers and platform operators who are evaluating AI-driven optical network management without betting the farm on black-box tooling.

Problem / challenge: link flaps that looked like “random” optics

We operated a 3-tier topology: 48-port ToR switches feeding two spine tiers, with 10G SFP+ for server access and 25G SFP28 for aggregation uplinks. Over six weeks, we saw intermittent link drops on a subset of uplinks, averaging 18 events per day during peak training jobs. The switch logs showed only “LOS/LOF” and a generic interface reset; the root cause was unclear because the transceiver DOM values were noisy and sometimes lagged the actual physical fault.

Challenge: identify transceiver-level degradation before BER crosses the vendor’s safe operating envelope, while keeping false positives low. We needed a pipeline that could learn per-module baselines and detect drift patterns specific to optics aging, connector contamination, or marginal fiber.

Environment specs: what we measured and the optics we deployed

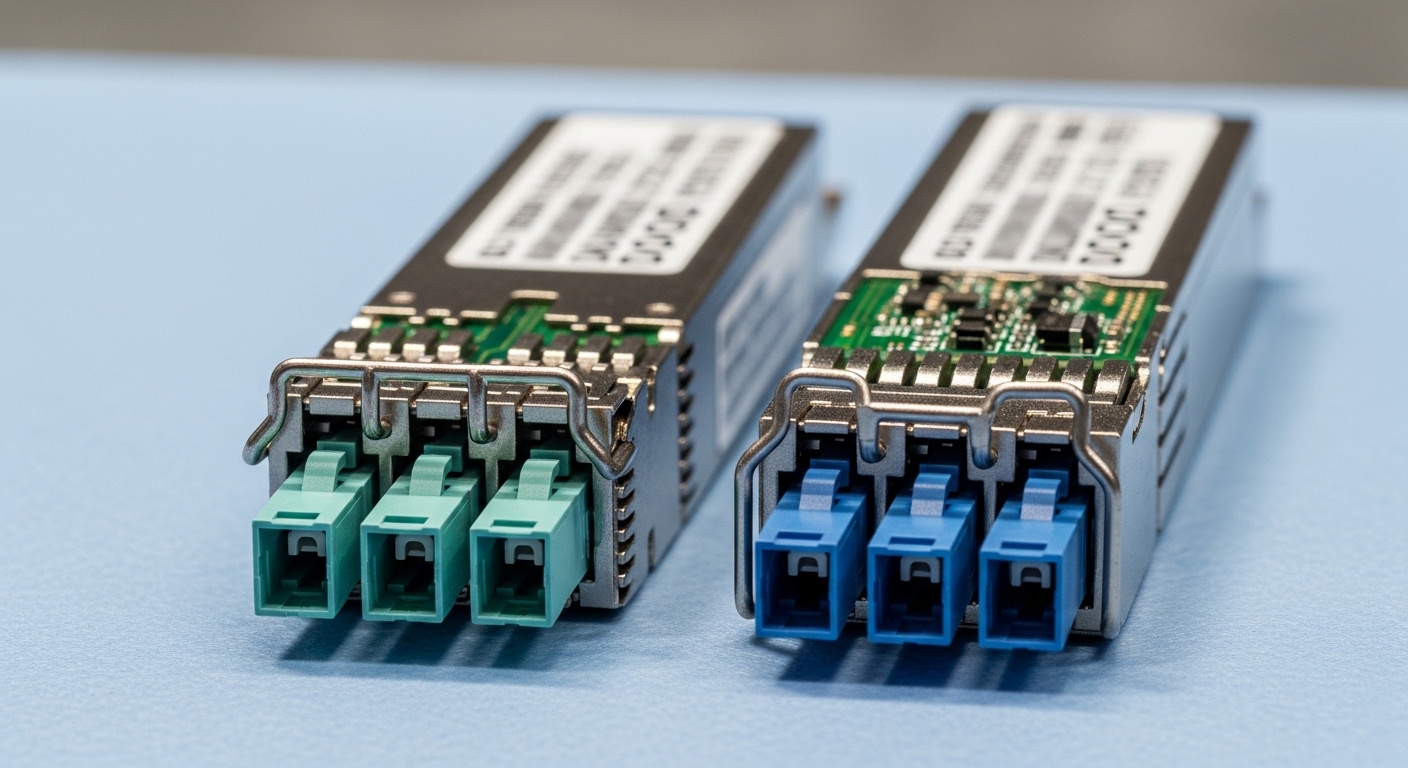

Our optics mix included vendor and third-party modules with DOM support. Common examples: Cisco SFP-10G-SR, Finisar FTLX8571D3BCL (10G SR), and FS.com SFP-10GSR-85 (10G SR), plus 25G SFP28 SR modules at 850 nm. We standardized on DOM fields exposed via the switch platform (temperature, laser bias/current, received power, supply voltage) and correlated them with interface counters (error frames, CRC, FCS, and link state transitions).

Key technical reference points

ML models are only as good as the physical constraints they encode. We treated wavelength, reach, and power budgets as hard limits, using IEEE 802.3 optics expectations for Ethernet PHY behavior and vendor datasheets for safe ranges.

| Parameter | 10G SR (850 nm SFP+) | 25G SR (850 nm SFP28) |
|---|---|---|
| Nominal wavelength | 850 nm (MMF) | 850 nm (MMF) |
| Typical reach (OM3 / OM4) | 300 m / 400 m | 70 m / 100 m |
| Connector | LC duplex | LC duplex |
| DOM / telemetry | Temperature, bias current, RX power, voltage (vendor dependent) | Temperature, bias current, RX power, voltage (vendor dependent) |
| Operating temperature | Commercial or extended (commonly 0 to 70 °C) | Commercial or extended (commonly 0 to 70 °C) |
| Failure modes ML can detect | RX power drift, thermal runaway, intermittent LOS correlated to events | Bias current creep, RX power sag, bursty error counter growth |

Sources: [Source: IEEE 802.3 Ethernet PHY behavior references], [Source: Cisco SFP-10G-SR datasheet], [Source: Finisar (now Coherent) transceiver datasheets], [Source: FS.com transceiver product pages]. For optical compliance and link behavior, we relied on vendor DOM field definitions and switch telemetry mapping rather than assuming uniform DOM semantics across brands.
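One way to treat those limits as hard constraints is a range check applied after unit normalization. The sketch below is illustrative only: the field names (`temp_c`, `rx_mw`, `rx_dbm`, `vcc_v`), the helper functions, and every number in `LIMITS` except the 0 to 70 °C temperature range are assumptions, and the placeholders should be replaced with the figures from each module's datasheet.

```python
import math

# Placeholder envelope per module family. The RX power and voltage bounds are
# illustrative assumptions, NOT datasheet values; substitute published limits.
LIMITS = {
    "10G-SR": {"temp_c": (0.0, 70.0), "rx_dbm": (-9.9, 0.5), "vcc_v": (3.13, 3.47)},
}

def mw_to_dbm(power_mw):
    """Convert optical power from milliwatts to dBm; non-positive power maps to -inf."""
    if power_mw <= 0:
        return float("-inf")
    return 10.0 * math.log10(power_mw)

def normalize_reading(raw):
    """Map a raw reading onto a common schema (temp_c, rx_dbm, vcc_v).

    Assumes the platform reports RX power either in mW ("rx_mw") or already
    in dBm ("rx_dbm"); both key names are hypothetical.
    """
    rx_dbm = raw["rx_dbm"] if "rx_dbm" in raw else mw_to_dbm(raw["rx_mw"])
    return {"temp_c": raw["temp_c"], "rx_dbm": rx_dbm, "vcc_v": raw["vcc_v"]}

def out_of_envelope(reading, family):
    """Return the names of fields that fall outside the family's limits."""
    limits = LIMITS[family]
    return [name for name, (lo, hi) in limits.items() if not lo <= reading[name] <= hi]

healthy = normalize_reading({"temp_c": 41.0, "rx_mw": 0.5, "vcc_v": 3.3})
weak = normalize_reading({"temp_c": 41.0, "rx_mw": 0.05, "vcc_v": 3.3})
print(out_of_envelope(healthy, "10G-SR"), out_of_envelope(weak, "10G-SR"))
```

Running the check before any model sees the data keeps obviously out-of-range readings from being learned as part of a "normal" baseline.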

Chosen solution & why: ML that respects optics physics

We implemented ML transceiver analytics as a per-port anomaly detector with physics-aware constraints. Instead of a single global model, we trained per-module-type baselines (10G SR vs 25G SR, vendor family, and location). Features included: rolling Z-score of received power, slope of bias current over time, temperature excursion frequency, and the time alignment between DOM changes and interface error spikes.
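Two of these features are simple enough to sketch directly. The code below is a minimal illustration, not our production feature pipeline: the function names, the window length, and the sample values are assumptions, and it uses only the Python standard library.

```python
from statistics import mean, pstdev

def rolling_zscore(values, window):
    """Z-score of each sample against the preceding `window` samples.

    Returns None until a full window of history exists, and 0.0 when the
    history has zero variance (a simplification for this sketch).
    """
    scores = []
    for i, v in enumerate(values):
        if i < window:
            scores.append(None)
            continue
        hist = values[i - window:i]
        sd = pstdev(hist)
        scores.append(0.0 if sd == 0 else (v - mean(hist)) / sd)
    return scores

def slope_per_minute(timestamps_min, values):
    """Ordinary least-squares slope of values against time (units per minute)."""
    t_bar, v_bar = mean(timestamps_min), mean(values)
    denom = sum((t - t_bar) ** 2 for t in timestamps_min)
    if denom == 0:
        return 0.0
    num = sum((t - t_bar) * (v - v_bar) for t, v in zip(timestamps_min, values))
    return num / denom

# Hypothetical samples: RX power in dBm, bias current in mA, one per minute.
rx_dbm = [-3.0, -3.1, -2.9, -3.0, -5.0]
bias_ma = [6.0, 6.1, 6.2, 6.3]
rx_scores = rolling_zscore(rx_dbm, window=4)
bias_slope = slope_per_minute([0, 1, 2, 3], bias_ma)
print(rx_scores[-1], bias_slope)
```

The Z-score compares each sample only with its own recent history, which is what makes a per-module baseline possible without a global threshold.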

Pro Tip: In practice, DOM “received power” often changes after the physical stress event begins. We improved early detection by predicting a few minutes ahead using lagged features and forcing the model to respect monotonic degradation constraints (for example, bias current creep with compensating RX power drift is more informative than raw RX power alone). This reduced false alarms when modules were merely swapped or re-seated.
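The lagged-feature idea can be sketched as follows. This is a simplified illustration under stated assumptions: `make_lagged_rows`, the number of lags, and the five-sample horizon are hypothetical choices, and the monotonic-constraint part of the tip is not shown.

```python
def make_lagged_rows(series, labels, lags=3, horizon=5):
    """Build (features, target) pairs from evenly spaced samples.

    features: the current value plus the `lags` previous values, newest first.
    target:   1 if any label within the next `horizon` samples is 1, else 0.
    """
    rows = []
    for t in range(lags, len(series) - horizon):
        features = [series[t - k] for k in range(lags + 1)]
        target = int(any(labels[t + 1:t + 1 + horizon]))
        rows.append((features, target))
    return rows

# Hypothetical 1-minute bias current samples (mA) and link-flap labels.
bias_ma = [6.0, 6.0, 6.1, 6.1, 6.2, 6.3, 6.5, 6.8, 7.2, 7.9]
flap = [0, 0, 0, 0, 0, 0, 0, 0, 0, 1]
rows = make_lagged_rows(bias_ma, flap, lags=2, horizon=3)
for features, target in rows:
    print(features, target)
```

Because the target looks ahead by `horizon` samples, a classifier trained on these rows is rewarded for recognizing the precursor pattern rather than the flap itself.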

Implementation steps (field-ready)

- Telemetry normalization: map DOM fields into a common schema per transceiver family; convert units; drop missing fields to avoid brand-specific artifacts.

- Event alignment: resample DOM at 1-minute intervals and align with switch interface counters at 30-second resolution; store link state transitions as supervised labels.

- Modeling: train an Isolation Forest-style anomaly score plus a drift classifier for each port group; threshold using validation windows where we know whether a maintenance action occurred.

- Operator workflow: when the score crosses the threshold, trigger a “fiber hygiene check” runbook: clean LC ends, verify patch cord polarity, and inspect for dust using a scope.

- Feedback loop: after replacement or cleaning, tag the event so the model learns that some anomalies were transient contamination rather than aging.
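To make the modeling step concrete, here is a compact pure-Python sketch of an isolation-forest-style anomaly score over two features (RX power and bias current). It is a teaching sketch, not the code we deployed: a production pipeline would more likely use an established library, and the function names, tree count, sample size, and synthetic data are all assumptions.

```python
import math
import random

def c_factor(n):
    """Average path length of an unsuccessful binary-search-tree lookup over n points."""
    if n <= 1:
        return 0.0
    if n == 2:
        return 1.0
    return 2.0 * (math.log(n - 1) + 0.5772156649) - 2.0 * (n - 1) / n

def build_tree(rows, depth, max_depth, rng):
    """Recursively split on a random feature at a random value."""
    if depth >= max_depth or len(rows) <= 1:
        return {"size": len(rows)}
    dim = rng.randrange(len(rows[0]))
    lo = min(r[dim] for r in rows)
    hi = max(r[dim] for r in rows)
    if lo == hi:
        return {"size": len(rows)}
    split = rng.uniform(lo, hi)
    left = [r for r in rows if r[dim] < split]
    right = [r for r in rows if r[dim] >= split]
    return {"dim": dim, "split": split,
            "left": build_tree(left, depth + 1, max_depth, rng),
            "right": build_tree(right, depth + 1, max_depth, rng)}

def path_length(tree, x, depth=0):
    """Depth at which x lands in a leaf, adjusted for the points left in that leaf."""
    if "size" in tree:
        return depth + c_factor(tree["size"])
    branch = "left" if x[tree["dim"]] < tree["split"] else "right"
    return path_length(tree[branch], x, depth + 1)

def fit_forest(rows, n_trees=100, sample_size=64, seed=0):
    """Fit trees on random subsamples; needs at least two rows."""
    rng = random.Random(seed)
    sample_size = min(sample_size, len(rows))
    max_depth = math.ceil(math.log2(sample_size))
    trees = [build_tree(rng.sample(rows, sample_size), 0, max_depth, rng)
             for _ in range(n_trees)]
    return trees, sample_size

def anomaly_score(forest, x):
    """Score in (0, 1); values closer to 1 mean x is isolated in fewer splits."""
    trees, sample_size = forest
    mean_path = sum(path_length(t, x) for t in trees) / len(trees)
    return 2.0 ** (-mean_path / c_factor(sample_size))

# Synthetic baseline: (RX power in dBm, bias current in mA) for healthy modules.
data_rng = random.Random(1)
normal = [(data_rng.gauss(-3.0, 0.2), data_rng.gauss(6.0, 0.1)) for _ in range(200)]
forest = fit_forest(normal)
typical_score = anomaly_score(forest, (-3.0, 6.0))
degraded_score = anomaly_score(forest, (-9.0, 9.0))
print(typical_score, degraded_score)
```

The alert threshold would then be chosen on validation windows with known maintenance outcomes, as described in the modeling step, rather than fixed in advance.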

Measured results: what improved after deployment

After enabling ML transceiver analytics on 320 optics-bearing ports (10G SR access plus 25G SR uplinks), we ran a controlled rollout for 30 days. We measured three outcomes: link stability, time-to-remediation, and operational load.

Link stability: link flaps dropped from 18 events/day to 5 events/day on affected uplinks, a reduction of about 72%. Time-to-remediation: average time from first anomaly score to physical intervention fell from 9.4 hours to 2.1 hours, about 78% shorter. Operational load: initial thresholding produced ~7% unnecessary fiber checks (false positives), which we reduced to ~3% by incorporating module re-seating detection (DOM temperature step changes plus sudden RX power normalization).

Common mistakes / troubleshooting: failure modes we actually hit

When ML meets optics, integration details dominate outcomes. Here are the concrete pitfalls we saw and how we fixed them.

- Mistake: assuming DOM field names mean the same physical quantity across vendors.

Root cause: some modules expose RX power calibration differently or use different scaling conventions.

Solution: validate DOM ranges against datasheet expectations and enforce per-vendor normalization before training.

- Mistake: training on “after the problem” windows only.

Root cause: the model learns the symptom pattern of link resets rather than the precursors (BER drift or thermal creep).

Solution: include negative samples (stable periods) and label intervals that end with cleaning vs replacement.

- Mistake: ignoring temperature gradients inside switch cages.

Root cause: ports near exhaust airflow can show consistent thermal swings that look like degradation.

Solution: add a location feature (switch ID, slot group) and compare per-port against neighbors under the same thermal regime.

- Mistake: not accounting for patch cord rework and reseating events.

Root cause: cleaning can temporarily restore RX power, causing the model to mark “resolved” as “recurring failure.”

Solution: detect reseating via abrupt DOM changes and reset the baseline post-maintenance.
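The reseating fix in the last item can be sketched in a few lines. The step thresholds (5 °C and 1.5 dB), the function names, and the sample values are illustrative assumptions, not tuned values from our deployment.

```python
def detect_reseat(temp_c, rx_dbm, temp_step=5.0, rx_step=1.5):
    """Return indices where temperature jumps and RX power recovers between samples.

    A sample is flagged when temperature changes by at least `temp_step`
    (either direction) and RX power rises by at least `rx_step`.
    """
    hits = []
    for i in range(1, len(temp_c)):
        temp_jump = abs(temp_c[i] - temp_c[i - 1]) >= temp_step
        rx_recovery = rx_dbm[i] - rx_dbm[i - 1] >= rx_step
        if temp_jump and rx_recovery:
            hits.append(i)
    return hits

def baseline_after_reseat(rx_dbm, reseat_indices, window):
    """Mean RX power over up to `window` samples from the latest reseat (or from the start)."""
    start = reseat_indices[-1] if reseat_indices else 0
    segment = rx_dbm[start:start + window]
    return sum(segment) / len(segment)

# Hypothetical 1-minute samples around a cleaning and reseat at index 3.
temp_c = [45.0, 45.0, 46.0, 38.0, 40.0, 42.0]
rx_dbm = [-6.0, -6.2, -6.5, -3.0, -3.1, -3.0]
reseats = detect_reseat(temp_c, rx_dbm)
new_baseline = baseline_after_reseat(rx_dbm, reseats, window=3)
print(reseats, round(new_baseline, 2))
```

Resetting the baseline at the flagged index keeps the pre-maintenance sag from being compared against post-maintenance readings.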

Cost & ROI note: where the savings really come from

Third-party optics analytics tooling for DOM ingestion plus ML anomaly detection typically costs $20k to $80k per year depending on telemetry scale and support. In our case, we built a lightweight pipeline with commodity polling agents and a hosted model service, keeping incremental costs near $12k to $25k for compute plus integration labor. The ROI came from reduced truck rolls and faster remediation: fewer sustained outages and fewer replacement cycles due to better root-cause confidence.

TCO tradeoff: OEM modules (for example, Cisco-branded optics) often have stronger DOM consistency with the switch, but third-party modules can reduce unit cost while increasing normalization work. We observed that failure rates were comparable after standardizing cleaning SOPs, but the ML model needed careful calibration per module family.

FAQ

What exactly counts as “ML transceiver analytics” in an enterprise network?

It is telemetry-driven anomaly detection that uses DOM fields (temperature, bias/current, RX power, voltage) plus switch interface counters to forecast risk. The ML component learns baselines per module type and port group, then flags early drift patterns rather than waiting for LOS/CRC spikes.

Do I need vendor-specific optics to make this work?

No, but you do need a normalization layer. Different transceivers can expose DOM fields with different scaling or calibration assumptions, so you must validate against datasheet ranges and reconcile units. If you skip this, the model may learn vendor quirks instead of physical degradation.

How do you avoid false alarms after cleaning or reseating?

We detect reseating via abrupt DOM step changes (for example, temperature and RX power normalization) and then reset the baseline window. We